What Is Software Performance Testing and Why It Matters

Software performance testing evaluates how an application behaves under expected and peak usage conditions. It focuses on how quickly the system responds, how it handles concurrent users, and whether it remains stable as demand increases. The goal is to identify performance limits and failure points before they affect users, rather than reacting to issues after release.

Performance issues have direct, measurable consequences. Akamai reports that a 100-millisecond increase in page load time can reduce conversion rates by up to 7%, underscoring how small delays affect real usage.

Software performance testing exists to prevent these outcomes. It helps teams understand how their systems behave under load, what conditions lead to degradation, and whether performance expectations are being met before the software is exposed to real users.

Why Software Performance Testing Is Required

Performance testing matters because most systems fail in ways that are not visible during development. Test environments limit concurrency, follow predictable execution paths, and use simplified data. Production removes those constraints.

User activity overlaps, shared resources compete, and external dependencies introduce latency. Functional testing cannot reveal these issues; they surface only under realistic traffic at scale, which is what load testing simulates.

As usage and complexity grow, teams need to understand how much load the system can tolerate, where it slows down, and how close it operates to failure under normal conditions. Performance testing exists to expose these limits before failures surface in real usage.

Why Teams Depend on Performance Testing

- Capacity boundaries: Performance testing reveals how much concurrent activity a system can support before response times degrade or failures occur, a limit typically measured with load testing tools.

- Pressure points in the system: Load exposes inefficiencies that stay hidden during functional testing, such as slow database queries, slow code paths, contention for shared services, and infrastructure constraints.

- Operational readiness: Testing how the system holds up under known high-demand events turns those events into anticipated stresses, rather than occasions where the system's weaknesses are discovered as they happen.

- Cost exposure: Performance issues found late tend to trigger rushed fixes, rollbacks, and extended recovery efforts. Identifying performance limits earlier keeps remediation planned and controlled.

- Reliability expectations: Consistent performance shapes how users perceive reliability. When delays or failures become frequent, user behavior changes quickly and is difficult to reverse.

Performance testing replaces assumptions with observable behavior. It provides a clear view of how systems respond as demand increases and where performance begins to break down.

Types of Performance Testing

Different performance tests exist because performance risk shows up in different ways. From a decision standpoint, running the wrong type of test can create false confidence: systems appear stable in test environments but fail when exposed to real usage. Each test type is designed to reduce a specific category of risk, and understanding that distinction is critical when planning releases, scaling usage, or approving infrastructure changes.

Each of the following test types focuses on a different performance risk that teams need to evaluate separately.

1. Load Testing

Load testing determines whether an application can handle expected user traffic without performance degradation. Decision-makers use it to confirm that current performance matches projected demand, for example ahead of a planned release, when onboarding new customers, or as usage grows within an existing user base. Results also inform how traffic should be balanced across cloud infrastructure to reduce the risk of overload under normal operating conditions.
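A minimal load-test harness can make this concrete. The sketch below assumes a hypothetical `handle_request` function standing in for the system under test (here it only sleeps for about 10 ms); in practice it would be a real HTTP call or a dedicated load testing tool. It holds concurrency at an expected level with a fixed pool of simulated users and records each request's latency.

```python
import time
from concurrent.futures import ThreadPoolExecutor

def handle_request() -> None:
    """Hypothetical stand-in for the system under test (e.g. an HTTP call)."""
    time.sleep(0.01)  # simulate ~10 ms of service time

def timed_request() -> float:
    """Call the system once and return the elapsed time in seconds."""
    start = time.perf_counter()
    handle_request()
    return time.perf_counter() - start

def run_load_test(concurrent_users: int, requests_per_user: int) -> list[float]:
    """Run requests through a pool of `concurrent_users` workers, so at most that
    many are in flight at once, until concurrent_users * requests_per_user
    requests have completed. Returns each request's latency in seconds."""
    total = concurrent_users * requests_per_user
    with ThreadPoolExecutor(max_workers=concurrent_users) as pool:
        futures = [pool.submit(timed_request) for _ in range(total)]
        return [f.result() for f in futures]

if __name__ == "__main__":
    latencies = run_load_test(concurrent_users=20, requests_per_user=5)
    print(f"{len(latencies)} requests, slowest {max(latencies) * 1000:.1f} ms")
```

The latencies it returns are the raw input for the response-time and throughput metrics discussed later.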

2. Stress Testing

Stress testing evaluates how the system behaves when demand exceeds planned limits. From a leadership perspective, this test is less about preventing failure and more about understanding it. Stress testing reveals how the system fails, how quickly it degrades, and whether it recovers cleanly. This insight is critical for risk planning, incident readiness, and setting realistic service expectations.
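A stress run is often a stepped ramp that keeps raising load past the planned limit and records how errors grow at each step. In this sketch, `measure_error_rate` is any callable that runs one load step and returns its error rate; `simulated_service` is a hypothetical stand-in with an assumed capacity of 500 concurrent users, used only to show the shape of the output. Recovery would be checked separately, by dropping load back to normal and confirming that error rates return to baseline.

```python
from typing import Callable

def stress_ramp(measure_error_rate: Callable[[int], float], start: int, step: int,
                max_load: int, stop_error_rate: float = 0.05) -> list[tuple[int, float]]:
    """Raise load in fixed steps beyond the planned limit, recording the error
    rate at each step, and stop at the first step above stop_error_rate."""
    profile: list[tuple[int, float]] = []
    for load in range(start, max_load + 1, step):
        rate = measure_error_rate(load)
        profile.append((load, rate))
        if rate > stop_error_rate:
            break  # breaking point reached; profile shows how degradation began
    return profile

def simulated_service(load: int, capacity: int = 500) -> float:
    """Hypothetical stand-in: no errors up to capacity, then the excess load fails."""
    return 0.0 if load <= capacity else (load - capacity) / load

if __name__ == "__main__":
    for load, rate in stress_ramp(simulated_service, start=100, step=100, max_load=1000):
        print(f"load={load:>4}  error_rate={rate:.3f}")
```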

3. Scalability Testing

Scalability testing examines how performance changes as demand increases incrementally, helping leaders evaluate how independent services scale as demand grows and whether added capacity produces predictable performance gains.
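One way to quantify "predictable performance gains" is scaling efficiency: the throughput gained relative to the capacity added, where 1.0 means perfectly linear scaling. The sketch assumes capacity is measured in instances; the instance counts and throughput figures in the usage example are illustrative inputs, not measurements.

```python
def scaling_efficiency(throughput_by_instances: dict[int, float]) -> dict[int, float]:
    """Map each instance count to (throughput gain) / (capacity gain), relative
    to the smallest configuration measured. 1.0 = linear scaling."""
    base_n = min(throughput_by_instances)
    base_tp = throughput_by_instances[base_n]
    return {
        n: (tp / base_tp) / (n / base_n)
        for n, tp in sorted(throughput_by_instances.items())
    }

if __name__ == "__main__":
    # Illustrative requests/s at 1, 2 and 4 instances.
    print(scaling_efficiency({1: 100.0, 2: 190.0, 4: 320.0}))
```

Efficiency that falls as instances are added (here, toward 0.8 at four instances) suggests a shared bottleneck, such as a database, that extra capacity does not relieve.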

4. Endurance Testing

Endurance testing checks whether performance stays consistent over long periods of continuous use. Observing applications, including containerized ones, over an extended run reduces the risk of slow degradation by revealing memory leaks, resource exhaustion, or connection buildup that would not be evident in brief tests.
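A common way to spot slow leaks during a long run is to fit a trend line to periodic memory samples rather than comparing only the first and last readings, which are noisy. This sketch computes an ordinary least-squares slope in MB per hour; the 5 MB/hour threshold in `looks_like_leak` is a hypothetical placeholder that each team would set for its own service.

```python
def memory_growth_per_hour(samples: list[tuple[float, float]]) -> float:
    """Least-squares slope of memory (MB) against elapsed time (hours).
    samples: (elapsed_hours, memory_mb) pairs taken during the run."""
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    n = len(samples)
    mean_t = sum(t for t, _ in samples) / n
    mean_m = sum(m for _, m in samples) / n
    cov = sum((t - mean_t) * (m - mean_m) for t, m in samples)
    var = sum((t - mean_t) ** 2 for t, _ in samples)
    return cov / var

def looks_like_leak(samples: list[tuple[float, float]],
                    max_growth_mb_per_hour: float = 5.0) -> bool:
    """Flag sustained growth above a (hypothetical) per-hour budget."""
    return memory_growth_per_hour(samples) > max_growth_mb_per_hour

if __name__ == "__main__":
    hourly = [(0, 500.0), (1, 510.0), (2, 520.0), (3, 530.0)]  # illustrative
    print(memory_growth_per_hour(hourly), looks_like_leak(hourly))
```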

5. Spike Testing

Spike testing measures how a system reacts to sudden bursts of traffic. It matters most when usage cannot be predicted, such as during a promotion, an acquisition, or an unplanned event. It reduces the risk of abrupt breakdowns when traffic jumps suddenly beyond normal operating limits.
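What distinguishes a spike test from a load or stress ramp is the shape of the load profile: traffic jumps abruptly with no warm-up. The sketch below generates a hypothetical per-second target for concurrent users that a load generator could follow; the baseline, spike size, and timing are illustrative parameters.

```python
def spike_profile(duration_s: int, baseline_users: int, spike_users: int,
                  spike_start_s: int, spike_length_s: int) -> list[int]:
    """Target concurrent users for each second: a steady baseline with one
    abrupt spike and no ramp-up or ramp-down."""
    return [
        spike_users if spike_start_s <= t < spike_start_s + spike_length_s else baseline_users
        for t in range(duration_s)
    ]

if __name__ == "__main__":
    print(spike_profile(duration_s=10, baseline_users=50, spike_users=500,
                        spike_start_s=4, spike_length_s=2))
```

The seconds after the spike matter as much as the spike itself, since they show whether the system returns to baseline behavior.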

6. Volume Testing

Volume testing focuses on the performance impact of data growth rather than user traffic. Decision-makers typically prioritize it when databases, logs, or transaction records are expected to grow substantially.

It estimates how performance will decline as data accumulates in the system, and how likely slow queries, long processing times, or scale-related operational bottlenecks become.
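The effect of data growth can be observed even in a toy setup. This sketch uses Python's built-in `sqlite3` module with an in-memory table of synthetic orders and deliberately no index on `customer_id`, so the filtered query has to scan the whole table and its time grows with row count. The schema and data are hypothetical; a real volume test would use production-like data on the actual database.

```python
import sqlite3
import time

def time_query_at_volume(rows: int) -> tuple[int, float]:
    """Load `rows` synthetic orders into an in-memory SQLite table and time one
    filtered query. customer_id is deliberately left unindexed, so the query
    scans the whole table. Returns (matching_rows, elapsed_seconds)."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute(
            "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
        )
        conn.executemany(
            "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
            ((i % 1000, float(i % 97)) for i in range(rows)),
        )
        start = time.perf_counter()
        (count,) = conn.execute(
            "SELECT COUNT(*) FROM orders WHERE customer_id = ?", (42,)
        ).fetchone()
        return count, time.perf_counter() - start
    finally:
        conn.close()

if __name__ == "__main__":
    for rows in (10_000, 100_000, 500_000):
        count, secs = time_query_at_volume(rows)
        print(f"{rows:>9,} rows: {count} matches in {secs * 1000:.2f} ms")
```

Adding an index on `customer_id` would typically remove most of this growth, which is exactly the kind of indexing issue that small test datasets hide.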

Software Performance Testing Metrics

In performance testing, metrics are not collected to be reported in isolation. They show where performance is acceptable, where it degrades, and where risk begins. Together, they describe how the system behaves under load and reveal where its limits are reached.

Response time: This measures how long the system takes to process a request. Teams look beyond averages to high-percentile values such as p95 or p99, where delays first become noticeable to users. Rising tail latency is usually a sign that the system is approaching its limits.
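To see why averages are not enough, consider a nearest-rank percentile computed over illustrative latency samples. With 95 requests at 100 ms and 5 at 2,000 ms, the mean (195 ms) describes no actual request, p95 still looks healthy, and only p99 exposes the 2-second tail. The `percentile` helper is a minimal sketch; load testing and monitoring tools may use interpolating percentile definitions that give slightly different values.

```python
import math
import statistics

def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample such that at least p% of
    all samples are less than or equal to it."""
    if not samples or not 0 < p <= 100:
        raise ValueError("need samples and 0 < p <= 100")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]

if __name__ == "__main__":
    # Illustrative latencies in ms: 95 fast requests and 5 very slow ones.
    latencies = [100.0] * 95 + [2000.0] * 5
    print("mean:", statistics.mean(latencies))  # 195.0
    print("p95:", percentile(latencies, 95))    # 100.0
    print("p99:", percentile(latencies, 99))    # 2000.0
```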

Throughput: This is the number of requests or transactions the system handles per unit of time. When throughput levels off even as load keeps increasing, the system has reached an upper limit. This metric helps teams judge whether existing infrastructure can sustain anticipated demand.
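A plateau can be detected by comparing throughput between successive load steps. In this sketch, a step counts as the plateau when throughput rises by less than `min_gain` (an assumed 5%) over the previous step; the step data in the usage example is illustrative.

```python
from typing import Optional

def plateau_load(steps: list[tuple[int, float]], min_gain: float = 0.05) -> Optional[int]:
    """steps: (offered_load, measured_throughput) pairs sorted by load.
    Return the first load level whose throughput is less than `min_gain`
    (relative) above the previous step, i.e. where added load stops adding
    throughput. Return None if no plateau was reached."""
    for (_, prev_tp), (load, tp) in zip(steps, steps[1:]):
        if (tp - prev_tp) / prev_tp < min_gain:
            return load
    return None

if __name__ == "__main__":
    # Illustrative (concurrent users, requests/s) pairs.
    steps = [(100, 950.0), (200, 1900.0), (300, 2700.0), (400, 2750.0), (500, 2740.0)]
    print(plateau_load(steps))
```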

Resource utilization: This tracks how system resources (CPU, memory, disk I/O, and network) behave under load. Saturation of a resource is usually followed by performance degradation. Watching how utilization changes as load increases helps teams locate bottlenecks and determine whether the limits are code-related or infrastructure-related.

Error rate: This is the proportion of requests that fail during a test. A rising error rate is a strong signal that the system is operating beyond its stability threshold. Error rates give a straightforward go/no-go signal in pre-release assessment.
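As a sketch of that go/no-go check, the functions below compute the failure ratio from recorded response statuses and compare it against an agreed limit. Treating 5xx responses and missing responses (`None`, for example timeouts) as failures, and the 1% default limit, are assumptions to adapt to each system.

```python
from typing import Optional, Sequence

def error_rate(statuses: Sequence[Optional[int]]) -> float:
    """Fraction of failed requests. Assumption: None (no response) and any
    status >= 500 count as failures; adjust to the system's own definition."""
    if not statuses:
        raise ValueError("no requests recorded")
    failures = sum(1 for s in statuses if s is None or s >= 500)
    return failures / len(statuses)

def go_no_go(statuses: Sequence[Optional[int]], max_error_rate: float = 0.01) -> bool:
    """True ("go") if the error rate is within the agreed limit."""
    return error_rate(statuses) <= max_error_rate

if __name__ == "__main__":
    run = [200] * 990 + [503] * 6 + [None] * 4  # illustrative results
    print(error_rate(run), go_no_go(run))
```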

Concurrency: This is the number of requests, users, or processes active at the same time. Concurrency is interpreted alongside other metrics rather than as a standalone measure of success: the same response time or error rate can mean very different things at different levels of concurrent users or processes.
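Concurrency, throughput, and response time are linked by Little's Law: in a stable system, the average number of requests in flight equals throughput multiplied by average response time. The helpers below are a minimal illustration with made-up numbers. At 200 requests per second and 250 ms average response time, about 50 requests are in flight; if response time rose to 1 s at the same throughput, that would become 200. The law applies to long-run averages, not to instantaneous values.

```python
def average_in_flight(throughput_per_s: float, avg_response_time_s: float) -> float:
    """Little's Law (L = lambda * W): average concurrent requests in a stable system."""
    return throughput_per_s * avg_response_time_s

def implied_throughput(concurrency: float, avg_response_time_s: float) -> float:
    """The same law rearranged: throughput implied by a concurrency level."""
    return concurrency / avg_response_time_s

if __name__ == "__main__":
    print(average_in_flight(200, 0.25))    # 50.0
    print(average_in_flight(200, 1.0))     # 200.0
    print(implied_throughput(50, 0.5))     # 100.0
```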

Comparative trends: Performance testing is most useful when results are compared across runs. Trends reveal regressions, improvements, or instability introduced by changes in code, configuration, or infrastructure. Single test results provide a snapshot; trends establish confidence.
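One simple way to separate normal run-to-run variation from a real change is to compare the latest result against the spread of previous comparable runs. This sketch flags a run when it exceeds the historical mean by more than `k` standard deviations; `k = 3` and the sample history are assumptions, and the check is only meaningful if earlier runs used the same workload, data, and environment.

```python
import statistics

def is_outside_normal_variation(history: list[float], latest: float, k: float = 3.0) -> bool:
    """Flag `latest` (e.g. p95 latency in ms; higher is worse) if it exceeds the
    mean of previous comparable runs by more than k sample standard deviations."""
    if len(history) < 2:
        raise ValueError("need at least two previous runs to estimate variation")
    mean = statistics.mean(history)
    spread = statistics.stdev(history)
    return latest > mean + k * spread

if __name__ == "__main__":
    previous_p95 = [300.0, 310.0, 295.0, 305.0, 302.0]  # illustrative history
    print(is_outside_normal_variation(previous_p95, 315.0))
    print(is_outside_normal_variation(previous_p95, 340.0))
```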

How to Structure Software Performance Testing

Performance testing usually fails after results have been generated, not because the data is inaccurate but because expectations were never defined in advance. Without clear success criteria, team members interpret the same results differently, and performance testing provides no clear direction.

For performance testing to influence outcomes, teams must establish a shared structure before tests are run. The following elements determine whether results lead to alignment or disagreement.

Decision intent

Every performance test should exist to support a specific decision. This may include validating readiness for expected usage, assessing risk after system changes, or confirming that performance has not regressed. Tests without a clear decision anchor tend to generate data that is reviewed but not acted upon.

Defined scope

Tests should explicitly define the user flows, APIs, or background processes they cover and, just as importantly, what they do not cover. Broad, unfocused tests tend to produce results that are hard to interpret, while a narrow, purposeful scope makes performance boundaries easier to assess.

Representative workload

Load and concurrency should reflect how the system is actually used. Overlapping requests, background jobs, and uneven traffic patterns matter more than evenly distributed load. Simplified workloads often hide the conditions under which performance issues emerge.
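A sketch of a less uniform workload: requests arrive with exponentially distributed gaps (a Poisson process), so some cluster together and overlap, and each request picks a user flow from a weighted mix. `WORKLOAD_MIX` and its weights are hypothetical; real weights would come from production traffic analysis, and a Poisson process on its own still does not capture daily peaks or scheduled background jobs.

```python
import random
from collections import Counter

# Hypothetical traffic mix (flow -> share of requests); derive real weights from production data.
WORKLOAD_MIX = {"browse": 0.60, "search": 0.25, "checkout": 0.10, "export_report": 0.05}

def generate_arrivals(rate_per_s: float, duration_s: float, seed: int = 1) -> list[tuple[float, str]]:
    """Return (timestamp_s, flow) pairs. Gaps between arrivals are exponentially
    distributed (a Poisson process), so requests bunch up and overlap instead of
    arriving at perfectly even intervals."""
    rng = random.Random(seed)  # seeded so the schedule is repeatable
    flows, weights = zip(*WORKLOAD_MIX.items())
    arrivals: list[tuple[float, str]] = []
    t = rng.expovariate(rate_per_s)
    while t < duration_s:
        arrivals.append((t, rng.choices(flows, weights=weights)[0]))
        t += rng.expovariate(rate_per_s)
    return arrivals

if __name__ == "__main__":
    schedule = generate_arrivals(rate_per_s=50, duration_s=60)
    print(len(schedule), "requests")
    print(Counter(flow for _, flow in schedule))
```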

Production-like data

Data size and structure should closely resemble real usage. Minimal or artificial datasets frequently mask issues related to query execution, indexing behavior, and long-running operations that only appear at a realistic scale.

Shared observation signals

Teams should agree in advance on the metrics and system indicators used to interpret results. Without common signals, conclusions become subjective, even when everyone is reviewing the same data.

When these elements are in place, performance testing moves beyond reporting metrics and becomes a reliable input to evaluation, even when execution requires support from offshore development partners. Results are easier to interpret, easier to compare, and harder to dismiss when they reveal real risk.
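The elements above can be captured in a small, shared test plan that is agreed before a run and evaluated mechanically afterwards. This `PerformanceTestPlan` dataclass is a hypothetical structure, not a standard format; the field names, example decision, and thresholds are illustrative.

```python
from dataclasses import dataclass, field

@dataclass
class PerformanceTestPlan:
    decision: str                   # decision intent: what this run will inform
    in_scope: tuple[str, ...]       # flows/APIs the test covers
    out_of_scope: tuple[str, ...]   # explicitly not covered
    peak_concurrent_users: int      # representative workload level
    dataset_rows: int               # production-like data volume
    # Shared observation signals: metric name -> maximum acceptable value.
    thresholds: dict[str, float] = field(default_factory=dict)

    def evaluate(self, results: dict[str, float]) -> dict[str, bool]:
        """Pass/fail per agreed metric; a metric missing from results counts as a fail."""
        return {
            metric: metric in results and results[metric] <= limit
            for metric, limit in self.thresholds.items()
        }

if __name__ == "__main__":
    plan = PerformanceTestPlan(
        decision="Approve release for expected peak traffic",
        in_scope=("login", "search", "checkout"),
        out_of_scope=("admin reporting",),
        peak_concurrent_users=500,
        dataset_rows=5_000_000,
        thresholds={"p95_ms": 400.0, "error_rate": 0.01},
    )
    print(plan.evaluate({"p95_ms": 380.0, "error_rate": 0.02}))
    # {'p95_ms': True, 'error_rate': False}
```

Because the thresholds are fixed before the run, the same results produce the same verdict for everyone reviewing them.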

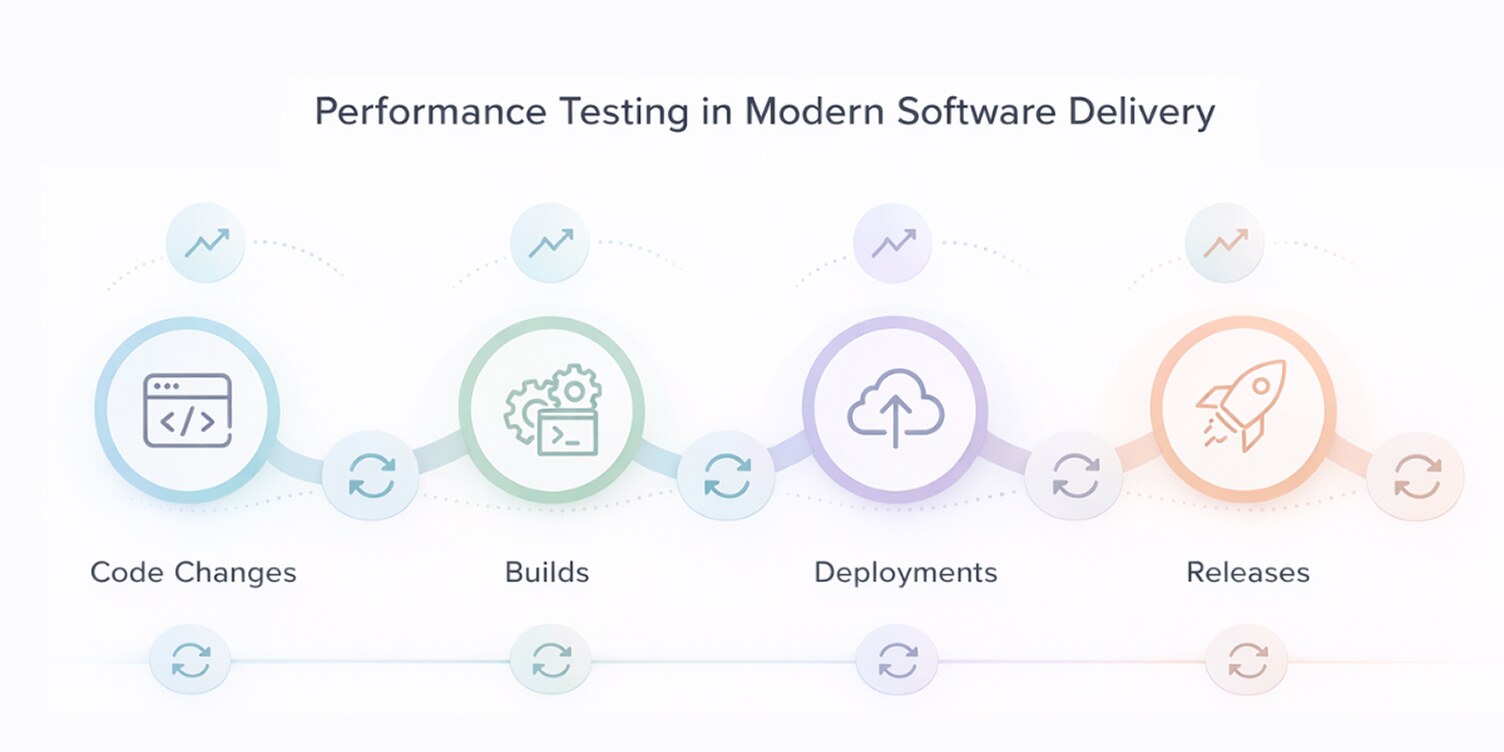

Performance Testing in Modern Software Development

Performance testing was traditionally treated as a milestone activity, executed at specific points before release. That approach assumes system behavior remains stable between tests. In modern software delivery, this assumption breaks quickly. Code changes frequently, dependencies evolve independently, and usage patterns shift without clear release boundaries.

To remain effective, performance testing must adapt to this reality. The emphasis moves away from isolated test runs toward practices that preserve relevance as systems change.

Repeatability

Performance results are only meaningful when the same tests can be rerun under comparable conditions. Repeatability allows teams to verify whether recent changes altered system behavior, rather than relying on isolated snapshots that cannot be compared.

Trend awareness

Single test outcomes provide limited insight. Viewing performance across multiple runs highlights gradual degradation, unexpected improvements, or instability introduced over time. Trends help teams distinguish normal variation from genuine performance risk.

Early signals

Introducing lightweight performance checks earlier in development helps surface inefficient code paths and excessive resource usage before they become deeply embedded. These early signals reduce late-stage risk without replacing deeper validation.
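A lightweight early check can be as simple as timing a code path against a budget with Python's standard `timeit` module. `build_report` is a hypothetical code path and the budget would be chosen per function. Taking the fastest of several repeats, as the `timeit` documentation recommends over averaging, reduces noise from other activity on the machine.

```python
import timeit
from typing import Callable

def build_report(rows: int) -> str:
    """Hypothetical code path under check: format rows as CSV-like lines."""
    return "\n".join(f"{i},{i * i}" for i in range(rows))

def within_budget(func: Callable[[], object], budget_s: float,
                  repeat: int = 5, number: int = 10) -> bool:
    """Time `func` and compare the best per-call time with a budget in seconds.
    The minimum over several repeats tends to be the least noisy estimate."""
    best_total = min(timeit.repeat(func, repeat=repeat, number=number))
    return best_total / number <= budget_s

if __name__ == "__main__":
    ok = within_budget(lambda: build_report(10_000), budget_s=0.05)
    print("build_report within budget:", ok)
```

Checks like this catch gross regressions cheaply; they do not replace full load tests.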

Automation as an enabler

Automation makes repeatability practical. It preserves known performance tests and enables consistent execution as systems change. Its value lies in maintaining comparability and exposing regressions, not in replacing analysis or judgment.
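In an automated pipeline, the comparison often becomes a gate that fails the build on regression. This sketch returns a process exit code, 0 to pass and 1 to fail, when the current p95 exceeds a stored baseline by more than an assumed 10% tolerance. The baseline and current values are hypothetical; a real pipeline would load them from previous run results and pass the returned code to `sys.exit()`.

```python
def regression_gate(baseline_p95_ms: float, current_p95_ms: float,
                    tolerance: float = 0.10) -> int:
    """Return 0 if current p95 is within `tolerance` of the baseline, else 1."""
    allowed = baseline_p95_ms * (1 + tolerance)
    if current_p95_ms > allowed:
        print(f"FAIL: p95 {current_p95_ms:.0f} ms exceeds allowed {allowed:.0f} ms")
        return 1
    print(f"PASS: p95 {current_p95_ms:.0f} ms within allowed {allowed:.0f} ms")
    return 0

if __name__ == "__main__":
    # Hypothetical numbers; a pipeline would read these from stored results.
    code = regression_gate(baseline_p95_ms=300.0, current_p95_ms=345.0)
    print("exit code:", code)
```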

Judgment-driven testing

Not all performance testing can be automated or continuous. Large data scenarios, complex workflows, and edge-case investigations still require deliberate setup and expert review. Performance testing remains a judgment-driven practice, even in highly automated environments.

Performance Testing vs. Real-Time Monitoring

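Performance testing and monitoring are often confused because both deal with system behavior under load. They serve different purposes at different stages: performance testing deliberately applies controlled load, usually before release, to find limits, while monitoring observes how the system behaves under real traffic in production. The two are complementary rather than interchangeable.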

Conclusion

Software performance testing is not about collecting metrics or running isolated tests. Its value lies in exposing limits, validating assumptions, and reducing uncertainty before performance issues surface in production. When applied deliberately, it gives teams a clear understanding of how systems behave under pressure and where risk begins.

Effective performance testing depends on choosing the right test types, tracking the right metrics, and structuring tests so results are interpretable and comparable. As software changes continuously, testing practices must also adapt to remain relevant. Used alongside monitoring, not replaced by it, performance testing becomes a practical tool for maintaining reliability as systems grow and evolve.