Best API Security Practices to Implement in 2026

Almost every application that fetches data relies heavily on APIs to store, manage, and transfer information.

APIs act like a junction to connect services, enable integrations, and allow businesses to build faster by reusing functionality instead of creating everything from scratch.

Over time, their usage and capability have grown from simple data exchange to becoming a core part of how software systems operate.

But the growing usage also comes with a cost. Every API endpoint exposed to the internet increases the number of ways an attacker can try to gain access.

These days, organizations oversee hundreds or even thousands of APIs in various contexts. It becomes challenging to monitor what is exposed, who can access it, and how it is being used in the absence of adequate oversight.

Attackers can quickly take advantage of these vulnerabilities.

Security teams can't think of APIs as just another layer behind their usual defenses anymore. When an attack happens through valid API calls, firewalls and network-level protections aren't enough.

Protecting APIs in 2026 requires you to strategically address the basics of how they are designed, deployed, and consumed.

Why API Security Is a Critical Priority in 2026

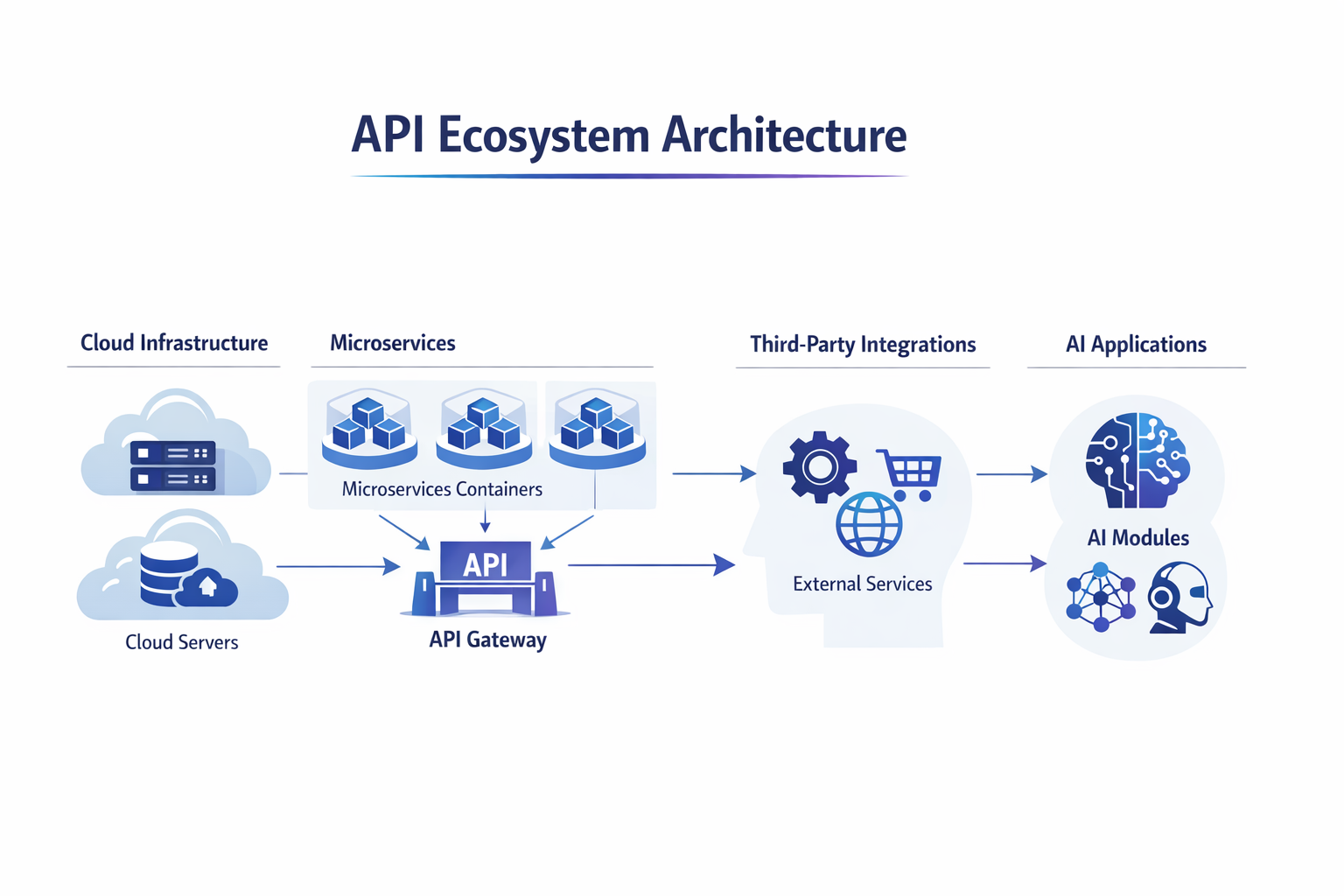

APIs now power most modern applications, forming the backbone of microservice architectures and enabling communication between services, platforms, and users.

From mobile apps to enterprise systems, APIs handle critical business logic and sensitive data exchanges.

This widespread adoption has made them essential, but it has also significantly increased exposure to potential threats.

Every new endpoint introduces another path that can be targeted, making APIs one of the most attractive entry points for attackers.

As API usage continues to grow, the associated risks have become more serious and harder to detect. Threats such as data breaches, credential abuse, and unauthorized access often occur through seemingly valid API requests rather than obvious attacks.

At the same time, changes in technology, such as cloud-native environments, distributed microservices, and increased reliance on external integrations, have made securing APIs more complex than before.

API security is no longer limited to protecting a single layer; it now requires visibility and control across an entire ecosystem.

Key Factors Driving the Need for Strong API Security

Cloud-Native Architectures

Applications are now deployed across multiple environments and regions to keep backend infrastructure scalable. This removes traditional boundaries and requires every API request to be validated regardless of its origin.

Services often run on shared infrastructure, which increases the importance of strict isolation and access control.

Misconfigurations in cloud settings can expose APIs unintentionally, making continuous monitoring essential. This becomes even more critical when designing fallback strategies and failover systems across regions, especially in distributed setups where downtime impacts multiple services.

Microservices Communication

Services constantly interact with each other through APIs. A vulnerability in one service can impact others, increasing the overall risk across the system.

Internal APIs are often assumed to be safe, but attackers can exploit them once inside the network.

Each service-to-service call must be authenticated and authorized to prevent lateral movement. Proper segmentation and verification help limit the spread of potential breaches.

This is especially important in architectures where upstream and downstream services rely on chained requests, because a single compromised service can affect every service that depends on it.

Third-Party Integrations

External services are deeply integrated into modern applications. These connections extend trust beyond the organization and must be carefully controlled and monitored.

APIs often share data with payment providers, analytics tools, and messaging platforms, which increases dependency on external security practices. Limited access permissions and strict validation help reduce exposure.

Regular audits of integrations are necessary to ensure they do not introduce hidden risks.

AI-Driven Applications

APIs play a key role in data exchange for AI systems. Improper handling of inputs or outputs can lead to data exposure or unintended system behavior.

Attackers may attempt to manipulate API inputs to influence how AI models respond or process information.

Sensitive data used by AI systems must be carefully protected during transmission. Clear validation rules and controlled access help prevent misuse of AI-related APIs.

Strong Authentication Mechanisms

Authentication is the first line of defense for any API. Without reliable methods to verify who is making a request, attackers can easily impersonate legitimate users and gain access to sensitive data.

Strong authentication ensures that only trusted clients and users interact with your endpoints, reducing the risk of unauthorized access.

A well‑designed authentication strategy should balance security with usability. It should prevent unauthorized access while allowing legitimate users to connect without friction.

The choice of method depends on the sensitivity of the API, the type of data being exchanged, and the environment in which the API operates.

Below is a comparison of commonly used authentication methods and how they differ in practice:

Choosing the right combination depends on understanding where the risks lie. APIs that expose sensitive user data or perform high-impact operations should always use stronger verification methods, while simpler services may rely on controlled API key usage with strict monitoring and rotation policies.

Implement Proper Authorization Controls

Authentication verifies who is accessing an API, but authorization determines what they are allowed to do once inside. Without strong authorization, even authenticated users can overstep boundaries and access data or functions they should never touch. This makes authorization one of the most critical safeguards in API security.

Effective authorization ensures that every request is validated against the user’s permissions. It prevents scenarios where a customer could view another customer’s records or where an employee could manipulate data outside their role.

In practice, authorization must be enforced consistently across all endpoints, not just at login.

Here are the key approaches to implementing authorization:

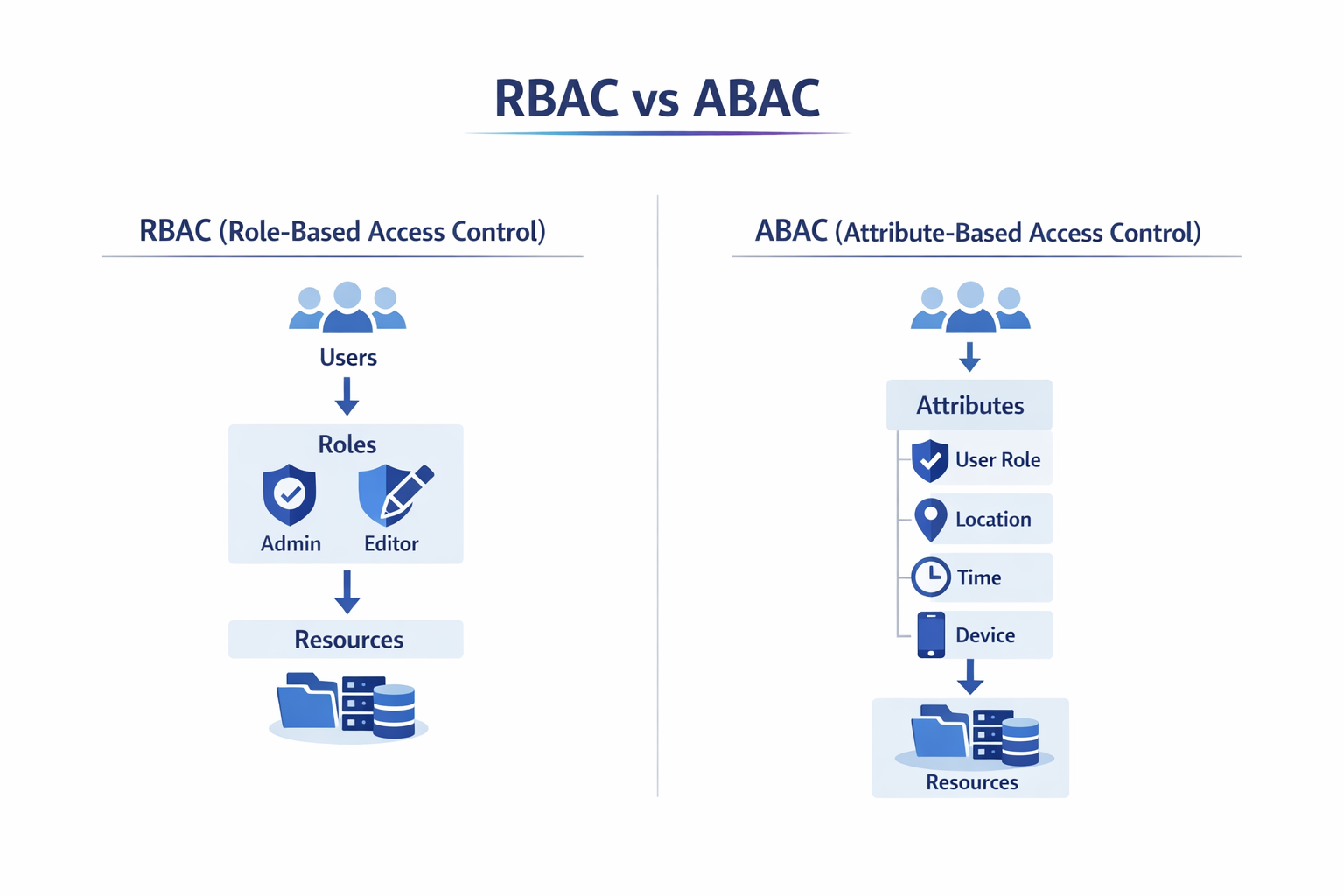

Role‑Based Access Control (RBAC)

RBAC assigns permissions based on predefined roles such as “admin,” “editor,” or “viewer.” This makes it easier to manage access in systems where user responsibilities are clearly defined.

It reduces complexity by grouping permissions instead of assigning them individually.

However, it can become restrictive when users need access that doesn’t fit neatly into a single role.
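As a rough illustration, an RBAC check can be as small as a lookup table. The role names come from the example above; the permission names and the `is_allowed` helper are hypothetical, and a real system would usually load the mapping from configuration or a database.

```python
# Hypothetical role-to-permission mapping (illustrative values only).
ROLE_PERMISSIONS = {
    "admin": {"read", "write", "delete"},
    "editor": {"read", "write"},
    "viewer": {"read"},
}


def is_allowed(role: str, action: str) -> bool:
    """Return True only if the role is known and grants the action."""
    # Unknown roles get an empty permission set, so they are denied by default.
    return action in ROLE_PERMISSIONS.get(role, set())
```

Denying unknown roles by default keeps a typo or a missing role from silently granting access.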

Attribute‑Based Access Control (ABAC)

ABAC evaluates multiple attributes such as user role, department, location, or device type before granting access.

This allows for more precise control over who can access specific resources under certain conditions.

It is useful in environments where access decisions depend on context rather than fixed roles.

The trade-off is that it requires careful planning and more complex policy management.
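A minimal sketch of an attribute-based decision might look like the following. The `RequestContext` fields and the payroll policy are assumptions made up for this example, not a standard policy format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestContext:
    """Attributes gathered about the caller (hypothetical field names)."""
    role: str
    department: str
    location: str
    device_trusted: bool


def can_read_payroll(ctx: RequestContext) -> bool:
    """Illustrative policy: finance staff on a trusted device from an approved location."""
    return (
        ctx.department == "finance"
        and ctx.device_trusted
        and ctx.location in {"HQ", "VPN"}
    )
```

The same user can be allowed or denied depending on context, which is the main difference from a fixed role lookup.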

Token‑Based Authorization

Token-based authorization uses tokens issued during authentication that contain permission details, often referred to as claims. Each API request includes the token, which is validated before access is granted.

This approach works well for distributed systems where services need to verify permissions independently. Proper token handling, including expiration and validation, is essential to prevent misuse.
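To make the validation steps concrete, here is a simplified sketch of a signed token carrying claims. It is not a JWT implementation; the token format, the `SECRET` value, and the `scopes` claim name are assumptions for illustration, and production systems would normally use an established library and key management.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

SECRET = b"demo-only-secret"  # assumption: real keys come from a secret manager


def _sign(body: str) -> str:
    digest = hmac.new(SECRET, body.encode(), hashlib.sha256).digest()
    return base64.urlsafe_b64encode(digest).decode()


def issue_token(claims: dict, ttl_seconds: int, now: Optional[float] = None) -> str:
    """Encode the claims plus an expiry time, then append an HMAC signature."""
    now = time.time() if now is None else now
    payload = dict(claims, exp=now + ttl_seconds)
    body = base64.urlsafe_b64encode(json.dumps(payload).encode()).decode()
    return f"{body}.{_sign(body)}"


def verify_token(token: str, required_scope: str, now: Optional[float] = None) -> bool:
    """Check signature first, then expiry, then the requested scope."""
    now = time.time() if now is None else now
    body, sep, signature = token.partition(".")
    if not sep or not hmac.compare_digest(signature.encode(), _sign(body).encode()):
        return False  # malformed or tampered token
    claims = json.loads(base64.urlsafe_b64decode(body))
    if claims["exp"] <= now:
        return False  # expired
    return required_scope in claims.get("scopes", [])
```

The signature is checked before the payload is decoded, so claims are only read from tokens this service issued.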

Object‑Level Authorization Checks

Object-level authorization ensures that users can only access specific records or resources they are permitted to view or modify. For example, a user should only be able to retrieve their own account details, not someone else’s.

This type of check is critical for preventing data leaks at the individual record level. It must be enforced consistently across all endpoints that interact with user-specific data.
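The account example above could be sketched as follows. The in-memory `ACCOUNTS` store, the exception classes, and `get_account` are hypothetical stand-ins for a real data layer and framework.

```python
# Hypothetical in-memory store standing in for a database table.
ACCOUNTS = {
    "acct-1": {"owner_id": "alice", "balance": 120},
    "acct-2": {"owner_id": "bob", "balance": 75},
}


class NotFound(Exception):
    pass


class Forbidden(Exception):
    pass


def get_account(current_user_id: str, account_id: str) -> dict:
    """Return the account only if the authenticated caller owns it."""
    account = ACCOUNTS.get(account_id)
    if account is None:
        raise NotFound(account_id)
    # The ownership check runs on every lookup, not just at login.
    if account["owner_id"] != current_user_id:
        raise Forbidden(account_id)
    return account
```

The key point is that the check compares the record's owner with the authenticated identity, rather than trusting an ID supplied in the request.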

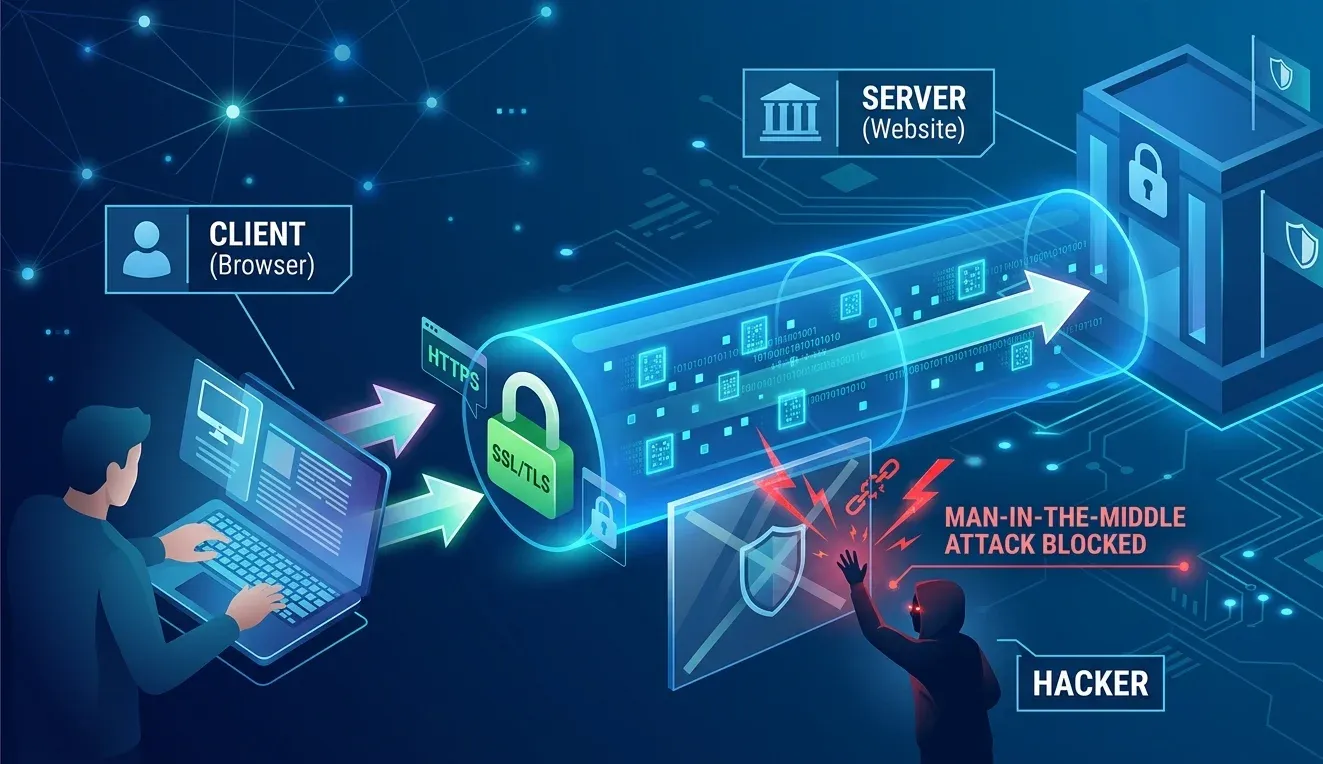

Use HTTPS and Encrypted Communication

Encryption is essential for protecting data as it travels between clients and APIs. Without secure communication channels, sensitive information such as credentials, tokens, or personal data can be intercepted by attackers.

Using HTTPS ensures that all traffic is encrypted, making it far more difficult for malicious actors to exploit network vulnerabilities.

Every API should enforce TLS encryption across all endpoints. Relying on unsecured HTTP connections exposes users to risks like credential theft and man‑in‑the‑middle attacks, where attackers intercept and manipulate traffic.

Proper certificate management is equally important, as expired or misconfigured certificates can weaken security and erode trust.

TLS Encryption for All API Traffic

TLS is used to make sure requests and responses remain confidential and tamper-proof. Encryption protects data from being read or altered while in transit between client and server.

It also helps maintain integrity by ensuring that the data received is exactly what was sent.

Enforcing TLS across all endpoints removes any chance of accidental exposure through unprotected routes.

Avoiding Unsecured HTTP Endpoints

Eliminates weak links that attackers can exploit to intercept sensitive data. Even a single unsecured endpoint can expose credentials or tokens if accessed over HTTP.

Redirecting all traffic to HTTPS ensures consistent protection across the application. Disabling HTTP entirely prevents fallback scenarios that attackers often take advantage of.

Certificate Management

Involves keeping certificates updated, properly configured, and monitored to maintain trust and security. Expired certificates can disrupt service and reduce user confidence in the system.

Misconfigured certificates may lead to vulnerabilities or failed encryption checks. Automated renewal and regular validation help ensure that encryption remains effective without interruptions.

Preventing Man‑in‑the‑Middle Attacks

Protects users from interception attempts by ensuring encrypted communication and verified certificates. Attackers often try to position themselves between the client and server to capture or alter data.

Strong TLS configurations and certificate validation prevent unauthorized entities from accessing the communication channel. Additional measures like certificate pinning can further strengthen protection in high-risk environments.
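On the client side, a strict TLS setup in Python can be sketched with the standard `ssl` module. The helper name `make_client_context` is made up for this example, and the TLS 1.2 floor is an assumed baseline rather than a universal requirement.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a client context that verifies certificates and hostnames."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    # Assumed baseline: refuse protocol versions older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

A context like this can be passed to most Python HTTP clients; the important part is never disabling verification to work around certificate errors.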

By enforcing HTTPS and maintaining strong encryption practices, organizations can safeguard data in transit and significantly reduce the risk of exposure.

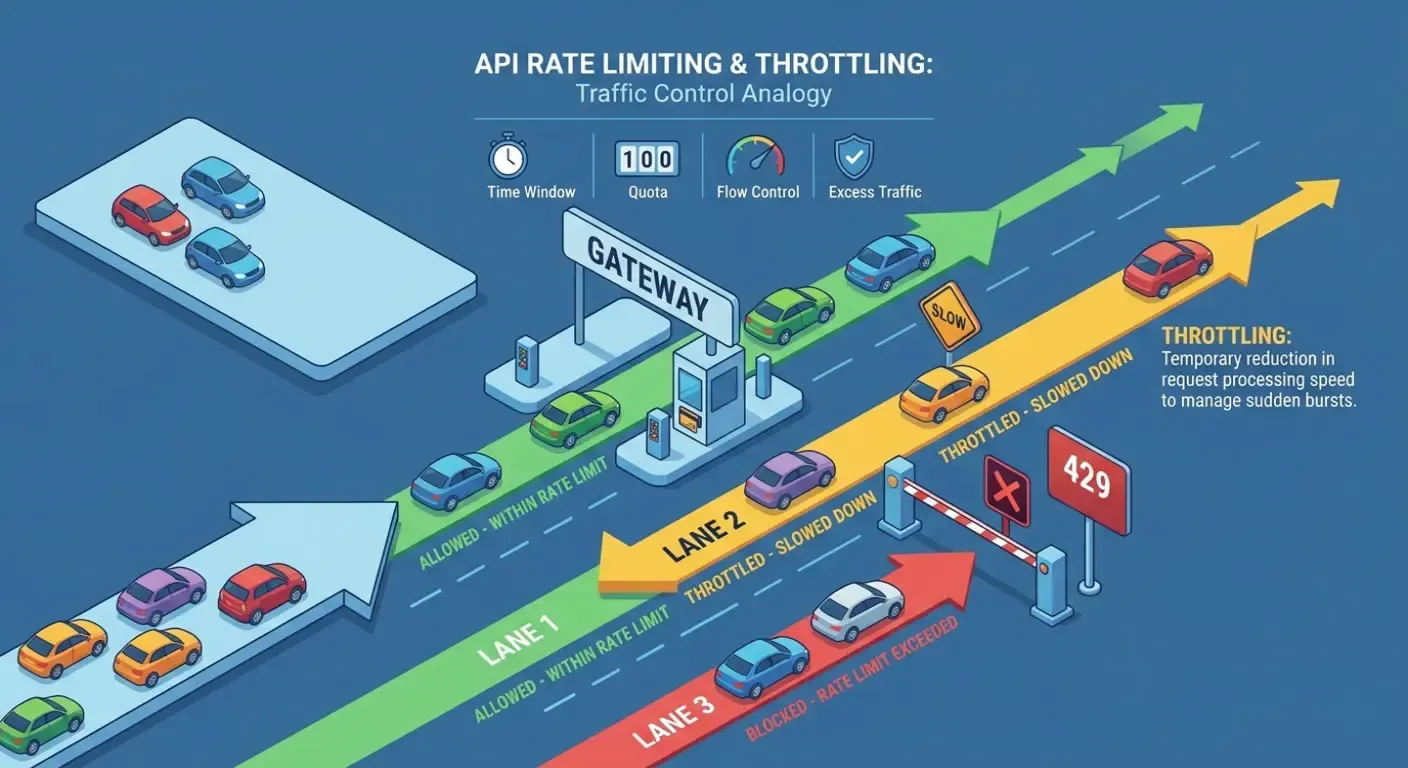

Implement Rate Limiting and Throttling

APIs are designed to handle requests efficiently, but without proper controls, they can be overwhelmed by excessive or malicious traffic.

Attackers often exploit this by sending large volumes of requests to degrade performance or launch denial‑of‑service attacks.

Even legitimate users can unintentionally overload systems if there are no safeguards in place.

Rate limiting and throttling provide a structured way to manage traffic, ensuring that APIs remain available and responsive.

By defining limits on how many requests a client can make within a given timeframe, organizations can protect infrastructure from abuse while maintaining fair access for all users.

These measures also help detect unusual traffic patterns that may indicate automated attacks. Implementing them consistently across endpoints is essential for both security and stability.

Request Rate Limiting

Request rate limiting sets a maximum number of requests allowed per client in a specific time window.

This helps prevent abuse by limiting how frequently an API can be accessed. It is especially useful against brute-force attempts and automated scripts.

By controlling request frequency, systems can maintain stability under heavy usage.
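One common way to implement a per-client limit is a sliding window. The sketch below keeps state in memory and takes the current time as a parameter so it is easy to test; the class name and parameters are illustrative, and a multi-instance deployment would need a shared store instead.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_requests per client within the last window_seconds."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # client_id -> timestamps of accepted requests

    def allow(self, client_id: str, now: float) -> bool:
        hits = self._hits[client_id]
        # Drop timestamps that have fallen out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True
```

A rejected request would typically be answered with HTTP 429 so that well-behaved clients know to back off.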

Throttling Policies

When APIs receive too many requests in a short time, performance can quickly degrade.

Throttling helps manage this by slowing down or temporarily limiting clients that exceed defined thresholds.

This gives the system room to recover and keeps it usable for legitimate clients while discouraging aggressive usage patterns. It also helps protect backend services from sudden strain.

Request Quotas

In environments where multiple users rely on the same API, controlled resource allocation is critical.

Request quotas allocate fixed limits to users or applications, ensuring resources are distributed fairly.

Quotas are often defined on a daily or monthly basis, depending on the usage plan.

They help prevent a single user from consuming excessive resources at the expense of others.

This approach is commonly used in public APIs with tiered access levels.

Burst Control

Sudden spikes in traffic are very common. Burst control manages these increases by allowing short bursts but enforcing strict limits afterwards.

This is very useful for handling temporary increases in demand without immediately blocking requests.

Once the burst threshold is exceeded, strict limitations are applied to stabilize the system.
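A token bucket is one widely used way to get this behavior: the bucket size is the burst allowance and the refill rate is the sustained limit. The sketch below is a minimal single-client version with illustrative names and an explicit `now` argument.

```python
class TokenBucket:
    """Allow bursts up to `capacity`, then a steady `refill_per_second` rate."""

    def __init__(self, capacity: int, refill_per_second: float, now: float = 0.0):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = float(capacity)  # start full so an initial burst is allowed
        self.updated = now

    def allow(self, now: float) -> bool:
        # Refill in proportion to elapsed time, never above capacity.
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False
```

With a capacity of 3 and a refill rate of 1 per second, a client can send three requests at once and is then held to roughly one request per second.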

Validate and Sanitize All Input Data

APIs interact directly with user‑supplied data, which makes them highly vulnerable to malicious input. Attackers often exploit weak validation to inject harmful commands or payloads, leading to compromised databases, unauthorized system actions, or data leaks.

Proper validation and sanitization act as a protective filter, ensuring that only safe and expected data passes through.

A strong input validation strategy checks every request against defined rules and schemas. It prevents oversized payloads, unexpected formats, or dangerous characters from reaching the backend.

Sanitization further cleans user input, removing or neutralizing harmful elements before they can cause damage. Together, these practices reduce the risk of injection attacks and strengthen the reliability of API interactions.

Input Validation

Ensures that incoming data matches expected formats, types, and allowed ranges before it is processed. This reduces the risk of unexpected behavior caused by malformed or malicious inputs.

Validation should be applied to all parts of a request, including query parameters, headers, and body data. Consistent validation helps maintain application stability and prevents misuse of API logic.

Schema Validation

To maintain consistency and security in incoming data, schema validation enforces strict rules for request structures, rejecting malformed or unauthorized fields.

By defining a clear schema, APIs can ensure that only expected data is accepted.

This prevents attackers from injecting extra fields or manipulating request structures. It also improves reliability by making request handling more predictable.
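A hand-rolled sketch of strict schema checking is shown below. The `ORDER_SCHEMA` fields and the `validate_order` helper are hypothetical; in practice teams often rely on a schema library, but the idea is the same.

```python
# Hypothetical schema: field name -> required Python type.
ORDER_SCHEMA = {"product_id": str, "quantity": int}


def validate_order(payload: dict) -> list:
    """Return a list of problems; an empty list means the payload is accepted."""
    errors = []
    # Reject any field the schema does not know about.
    for name in sorted(set(payload) - set(ORDER_SCHEMA)):
        errors.append(f"unexpected field: {name}")
    for name, expected_type in ORDER_SCHEMA.items():
        if name not in payload:
            errors.append(f"missing field: {name}")
        elif type(payload[name]) is not expected_type:
            # Exact type match, so True is not accepted where an int is expected.
            errors.append(f"wrong type for {name}")
    return errors
```

Rejecting unknown fields outright, rather than ignoring them, is what stops a client from slipping in something like an `is_admin` flag.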

Payload Size Restrictions

Large or unregulated requests can quickly become a security and performance risk.

Payload size restrictions block excessively large requests that could overwhelm systems or conceal malicious content.

Limiting payload size helps prevent denial-of-service attempts that rely on sending huge amounts of data.

It also reduces the risk of hidden payloads carrying harmful scripts or commands. Setting reasonable limits ensures efficient processing without compromising security.
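A size check can run before any parsing happens. In this sketch the 1 MiB limit and the `body_size_status` helper are assumptions; many frameworks and gateways expose an equivalent setting that is preferable to custom code.

```python
from typing import Optional

MAX_BODY_BYTES = 1_048_576  # hypothetical limit (1 MiB); tune per endpoint


def body_size_status(content_length: Optional[str], body: bytes) -> int:
    """Return the HTTP status a size check would produce (200 means accept)."""
    if content_length is not None:
        if not (content_length.isascii() and content_length.isdigit()):
            return 400  # malformed Content-Length header
        if int(content_length) > MAX_BODY_BYTES:
            return 413  # reject early based on the declared size
    if len(body) > MAX_BODY_BYTES:
        return 413  # the declared length alone is not trusted
    return 200
```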

Sanitizing User Input

User-provided data is one of the most common entry points for attacks. Sanitization removes or neutralizes harmful characters or scripts before that data is processed.

This step is essential when inputs are used in queries, commands, or rendered outputs.

Proper sanitization reduces the chances of attackers injecting malicious code into the system. It acts as a defensive layer alongside validation to ensure safer data handling.

Attacks Prevented by Proper Input Handling

SQL Injection

Database-driven applications are frequent targets for input-based attacks.

SQL injection lets attackers insert malicious SQL into queries to access, modify, or delete database data.

Proper validation and sanitization ensure that inputs are treated strictly as data, not executable queries.
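In practice, the most reliable way to treat input strictly as data is a parameterized query. The sketch below uses Python's built-in `sqlite3` module with a made-up `users` table; the same placeholder idea applies to other database drivers.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.executemany("INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user(conn: sqlite3.Connection, username: str) -> list:
    # The ? placeholder sends the value separately from the SQL text,
    # so quotes in the input cannot change the query structure.
    cursor = conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    )
    return cursor.fetchall()
```

A classic payload such as `' OR '1'='1` is simply compared against the `username` column as a literal string and matches nothing.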

Command Injection

APIs that interact with system-level processes can introduce critical vulnerabilities if not handled carefully.

Command injection lets attackers execute system-level commands through API inputs. By restricting and sanitizing inputs, APIs can avoid passing unsafe data to the underlying operating system.
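Two habits cover most cases: validate the value against a narrow pattern, and pass arguments as a list without a shell. The sketch below uses a harmless child Python process in place of a real system tool; the `SAFE_NAME` pattern and `echo_filename` helper are assumptions for illustration.

```python
import re
import subprocess
import sys

# Hypothetical allowlist: letters, digits, dot, dash, underscore; no leading dash or dot.
SAFE_NAME = re.compile(r"[A-Za-z0-9_][A-Za-z0-9_.-]{0,63}")


def echo_filename(name: str) -> str:
    """Pass a validated value to a child process as one literal argument."""
    if not SAFE_NAME.fullmatch(name):
        raise ValueError("rejected input")
    # An argument list with no shell means characters like ; or | are never interpreted.
    result = subprocess.run(
        [sys.executable, "-c", "import sys; print(sys.argv[1])", name],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()
```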

Script Injection

When API responses are rendered in web applications, untrusted input can become a serious risk.

Script injection inserts malicious scripts into that rendered output, where they can run in the user's browser.

Sanitized inputs ensure that scripts are not executed in the client environment.
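Alongside input sanitization, escaping values at the point where they are rendered is a common safeguard. This sketch shows output escaping with the standard `html` module; the `render_comment` helper is hypothetical.

```python
import html


def render_comment(text: str) -> str:
    """Escape user-supplied text before placing it inside HTML."""
    # html.escape converts &, <, > and quotes into entities, so markup is shown, not run.
    return f"<p>{html.escape(text)}</p>"
```

For example, `render_comment("<script>alert(1)</script>")` returns `<p>&lt;script&gt;alert(1)&lt;/script&gt;</p>`, which a browser displays as text.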

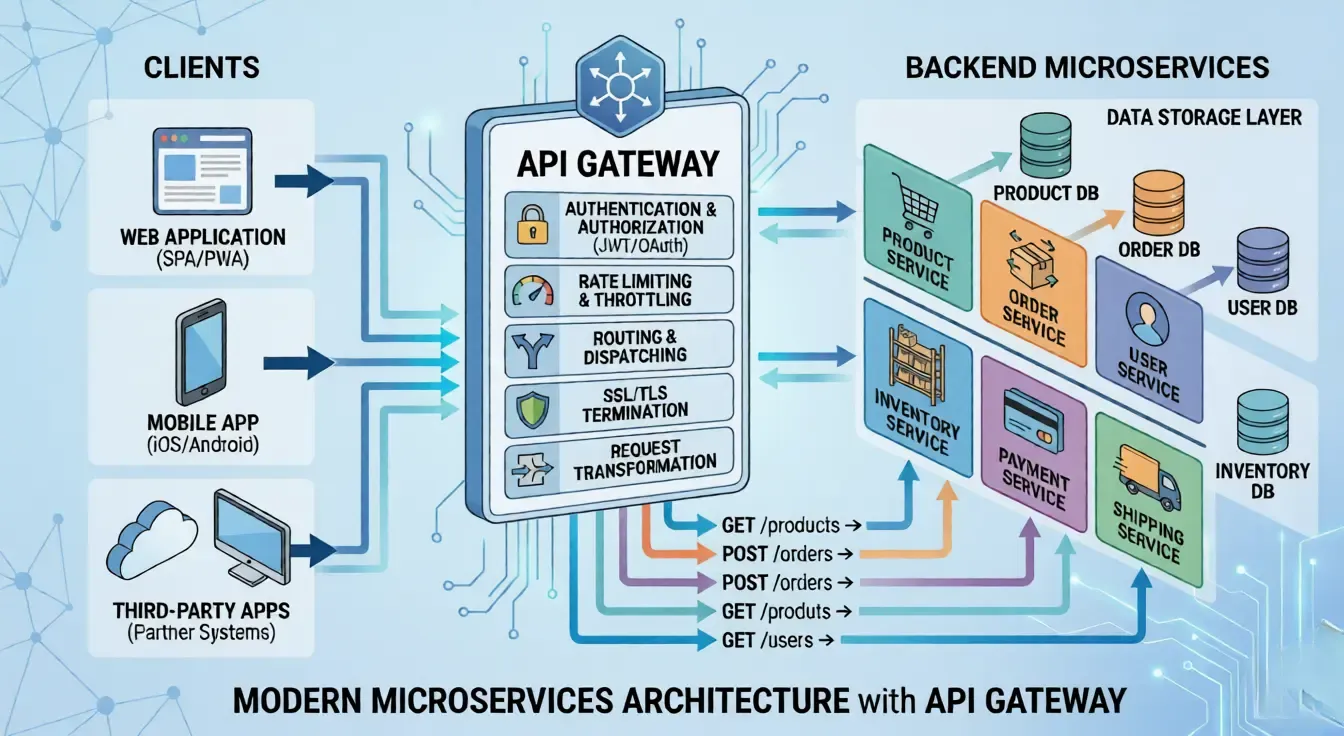

Use API Gateways for Centralized Security

Managing API security across multiple services can quickly become complex. Each endpoint may require its own authentication, monitoring, and traffic controls, which increases the risk of inconsistencies and overlooked vulnerabilities.

API gateways solve this challenge by acting as a single entry point for all API traffic, allowing organizations to enforce security policies centrally.

By routing requests through a gateway, teams gain visibility and control over how APIs are accessed and used.

Gateways simplify the implementation of security measures, reduce duplication of effort, and provide a consistent layer of protection across diverse microservices and integrations.

They also make it easier to detect anomalies and enforce compliance requirements.

By consolidating these functions, API gateways become a key part of modern architectures. They allow teams to apply consistent security rules while keeping backend services focused on business logic rather than defensive checks.

Monitor API Traffic and Implement Logging

Even with strong authentication, authorization, and encryption in place, APIs remain vulnerable if activity is not continuously monitored. Attackers often attempt to bypass defenses by exploiting overlooked endpoints or by mimicking legitimate traffic patterns.

Monitoring and logging provide visibility into how APIs are being used across a cloud-based API backend, helping teams detect anomalies, investigate incidents, and maintain accountability.

Effective monitoring captures details such as request frequency, source IPs, and unusual access attempts. Logging ensures that every interaction is recorded, creating a trail that can be analyzed during audits or security reviews.

Together, these practices not only strengthen security but also support compliance with industry regulations and standards.

Traffic Monitoring

Without clear visibility into API activity, identifying misuse becomes difficult.

Traffic monitoring tracks usage patterns, highlighting suspicious spikes or irregular behavior.

Sudden increases in requests from a single source or unusual access times can indicate potential abuse.

Continuous observation helps teams understand normal usage patterns and quickly identify deviations. This visibility allows a faster response to emerging threats.

Comprehensive Logging

When issues arise, having a detailed record of API activity is essential for investigation.

Comprehensive logging records all requests and responses, providing a reliable audit trail for investigations.

Logs should include details such as timestamps, user identity, endpoints accessed, and response status.

This information is critical when tracing the source of an issue or analyzing a breach. Proper log management also ensures that sensitive data is not unnecessarily exposed.
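A structured log record with these fields, and with credentials masked, might be built like this. The field names, the `REDACTED_HEADERS` set, and the `build_log_record` helper are illustrative choices rather than a standard format.

```python
import json
from datetime import datetime, timezone

# Hypothetical list of headers whose values should never reach the logs.
REDACTED_HEADERS = {"authorization", "cookie", "x-api-key"}


def build_log_record(user_id, method, path, status, headers, now=None) -> str:
    """Return one JSON log line with sensitive header values masked."""
    now = now or datetime.now(timezone.utc)
    safe_headers = {
        name: ("[REDACTED]" if name.lower() in REDACTED_HEADERS else value)
        for name, value in headers.items()
    }
    record = {
        "timestamp": now.isoformat(),
        "user_id": user_id,
        "method": method,
        "endpoint": path,
        "status": status,
        "headers": safe_headers,
    }
    return json.dumps(record, sort_keys=True)
```

Emitting one JSON object per request keeps the audit trail searchable while keeping tokens and cookies out of log storage.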

Anomaly Detection

Not all threats are obvious, making pattern-based detection crucial. Anomaly detection identifies unusual activity, such as repeated failed login attempts or abnormal data queries.

By comparing current behavior with established patterns, systems can flag potential threats early.

This helps in detecting both automated attacks and subtle misuse by legitimate users. Early detection reduces the impact of security incidents.
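As a very simple illustration of comparing current behavior with a baseline, the sketch below flags a request count that is far from the historical mean. The z-score approach and the threshold of 3 are assumptions chosen for the example; real detection systems tend to use richer signals.

```python
from statistics import mean, pstdev


def is_anomalous(history: list, current: float, threshold: float = 3.0) -> bool:
    """Flag `current` if it deviates from the baseline by more than `threshold` std devs."""
    if len(history) < 2:
        return False  # not enough data to establish a baseline
    baseline = mean(history)
    spread = pstdev(history)
    if spread == 0:
        return current != baseline
    return abs(current - baseline) / spread > threshold
```

With a history of about 100 requests per minute, a sudden jump to 400 would be flagged, while 104 would not.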

Alerting Systems

Detecting a threat is only useful if teams can act on it quickly. Alerting systems notify teams in real time when potential threats or breaches are detected.

Alerts can be triggered by predefined rules, such as high error rates or multiple failed authentication attempts.

Timely notifications allow teams to respond before issues escalate. Well-configured alerts reduce response time and improve overall incident handling.

Compliance Support

Regulatory requirements make visibility into API activity more than just a best practice.

Compliance support ensures that organizations meet regulatory requirements by maintaining detailed records of API activity.

Many standards require visibility into who accessed what data and when.

Proper logging and monitoring make it easier to generate reports and demonstrate compliance. This also helps build trust with users and stakeholders.

By combining monitoring with detailed logging, organizations gain the visibility needed to respond quickly to threats and maintain trust in their API ecosystem.

Implement Versioning and Deprecation Policies

APIs evolve as new features are added, bugs are fixed, and security improvements are introduced. Without proper versioning, changes can break existing integrations and frustrate developers who rely on stable endpoints.

Implementing clear versioning and deprecation policies ensures that updates are rolled out smoothly while giving users time to adapt.

Versioning provides a structured way to introduce changes without disrupting current clients. Deprecation policies communicate which features or endpoints will be retired, allowing developers to plan migrations.

Together, they maintain trust and stability while enabling innovation.

Versioning Strategy

A versioning strategy defines how new API versions are introduced, such as using version identifiers like v1, v2, or including versions in headers.

This separation allows teams to release new functionality without affecting existing clients.

A clear strategy helps avoid confusion and ensures consistency across services.

It also makes it easier to manage multiple active versions over time.

Backward Compatibility

Frequent updates can create issues if existing integrations are not preserved.

Backward compatibility ensures that existing clients continue to function even as new versions are introduced.

Maintaining compatibility allows developers to upgrade at their own pace instead of being forced into immediate changes. It also improves overall user experience by minimizing disruptions.

Deprecation Notices

Changes in APIs require clear communication to avoid unexpected disruptions. Deprecation notices inform developers in advance about features or endpoints that will be removed. These notices should include clear timelines and guidance on alternative solutions.

Early communication helps users plan and avoid last-minute issues. It also reduces frustration by providing transparency around API changes.
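Beyond written notices, a retirement date can also be signaled in responses. The sketch below builds a `Sunset` header (defined in RFC 8594) plus a link to migration guidance; the `deprecation_headers` helper and the URL used with it are hypothetical.

```python
from datetime import datetime, timezone
from email.utils import format_datetime


def deprecation_headers(sunset_at: datetime, info_url: str) -> dict:
    """Build response headers announcing when an endpoint will be retired."""
    # sunset_at should be timezone-aware; it is converted to UTC and
    # formatted as an HTTP date ending in "GMT".
    http_date = format_datetime(sunset_at.astimezone(timezone.utc), usegmt=True)
    return {
        "Sunset": http_date,
        "Link": f'<{info_url}>; rel="sunset"',
    }
```

Clients and monitoring tools can read these headers automatically, which complements changelogs and emails that developers may miss.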

Grace Periods

Immediate transitions can be difficult for users relying on existing integrations.

Grace periods provide sufficient time for users to transition from older versions to newer ones.

During this period, both versions may run in parallel to support ongoing operations.

This approach prevents sudden service interruptions and allows for smoother migration. A well-defined grace period ensures that users are not caught off guard.

Documentation Updates

Clear guidance becomes essential whenever APIs undergo changes. Documentation updates keep developers informed about changes, migration steps, and timelines.

Up-to-date documentation reduces confusion and helps users adopt new versions more efficiently.

It should clearly highlight differences between versions and provide practical examples. Good documentation plays a key role in maintaining trust and usability.

Unmanaged API versions create security gaps by leaving outdated endpoints active without proper oversight. When older versions are not tracked or retired, they often continue running with known vulnerabilities, weaker authentication methods, or outdated validation logic. Attackers actively look for these legacy endpoints because they are less likely to have the same level of protection as newer versions.

By enforcing versioning and deprecation policies, organizations can balance innovation with reliability, ensuring that APIs remain secure and developer‑friendly.

Secure Third‑Party API Integrations

Modern applications often rely on third‑party APIs to extend functionality, whether for payments, analytics, or communication services. While these integrations add value, they also introduce external dependencies that can become security risks if not properly managed.

A vulnerable third‑party API can expose sensitive data or provide attackers with a backdoor into your systems.

Securing these integrations requires careful vetting and ongoing monitoring. Organizations should evaluate the security posture of third‑party providers, enforce strict access controls, and continuously review how external APIs interact with internal systems.

This ensures that convenience does not come at the cost of compromised security.

Vendor Security Assessment

Evaluates the provider’s security practices, certifications, and compliance with industry standards. This includes reviewing how they handle data protection, incident response, and access controls.

A thorough assessment helps identify potential risks before integration begins. Regular reassessments ensure that vendors continue to meet security expectations over time.

Access Control Restrictions

Limit what third-party APIs can access, ensuring they only interact with necessary data or functions. Applying the principle of least privilege reduces the impact of a potential compromise.

Permissions should be clearly defined and reviewed periodically. Restricting access prevents unnecessary exposure of sensitive resources.

Token Management

Enforces secure authentication for external APIs using tokens with defined scopes and expiration. Tokens should be rotated regularly to reduce the risk of misuse if they are exposed.

Limiting token permissions ensures that access is controlled and specific to required actions. Secure storage and transmission of tokens are essential to prevent leaks.

Traffic Monitoring

Tracks how third-party APIs are used, detecting anomalies or suspicious activity. Unusual request patterns or unexpected data access can indicate misuse or compromise.

Continuous monitoring helps identify issues early and take corrective action. Visibility into external interactions strengthens overall security awareness.

Contractual Security Clauses

Ensure providers are legally bound to maintain strong security practices and notify organizations of any breaches. Contracts should define responsibilities around data protection, incident reporting, and compliance requirements.

Clear agreements help enforce accountability and reduce ambiguity during security incidents. This adds an extra layer of protection beyond technical controls.

External APIs can introduce vulnerabilities because they extend your system beyond boundaries you directly control. When an application depends on a third-party service, it inherits part of that service’s security posture.

If the external API has weak authentication, poor input validation, or misconfigured endpoints, attackers may exploit those weaknesses to access your data or disrupt your application.

By treating third‑party APIs with the same rigor as internal ones, organizations can enjoy extended functionality without exposing themselves to unnecessary risks.

Regularly Perform Security Testing

Even with strong policies and controls in place, APIs must be continuously tested to ensure they remain secure against evolving threats.

Attackers constantly look for new vulnerabilities, and without regular testing, organizations risk leaving hidden weaknesses unaddressed. Security testing validates that defenses are working as intended and helps identify gaps before they can be exploited.

Testing should be integrated into the development lifecycle, not treated as a one‑time activity. By combining automated tools with manual reviews, teams can uncover both common and complex vulnerabilities.

Regular testing also builds confidence that APIs comply with industry standards and can withstand real‑world attack scenarios.

Penetration Testing

Simulates real attacks to expose weaknesses in authentication, authorization, and input handling. Security professionals attempt to exploit the API in controlled environments to identify vulnerabilities.

This approach helps uncover issues that automated tools may miss. It provides practical insights into how an attacker might interact with the system.

Automated Vulnerability Scanning

Quickly identifies known flaws in code, libraries, and configurations. These tools scan APIs for common vulnerabilities such as outdated dependencies or misconfigurations.

They are useful for frequent checks and can be integrated into development workflows. Automated scanning ensures consistent coverage without requiring manual effort every time.

Fuzz Testing

Sends random or malformed inputs to detect unexpected behavior or crashes. This helps identify edge cases where the API may fail or behave unpredictably.

Fuzz testing is effective in uncovering hidden bugs that standard testing might not reveal. It strengthens the API by ensuring it can handle unusual or invalid inputs safely.
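A tiny fuzz harness can be sketched in a few lines. Here `parse_quantity` is a hypothetical handler under test, and the harness treats `ValueError` as the expected way to reject bad input; anything else is recorded as a finding. Dedicated fuzzing tools go much further than this.

```python
import random
import string


def parse_quantity(raw: str) -> int:
    """Hypothetical handler under test: accepts integers from 1 to 1000."""
    value = int(raw)
    if not 1 <= value <= 1000:
        raise ValueError("out of range")
    return value


def fuzz(target, iterations: int = 500, seed: int = 0) -> list:
    """Feed random strings to `target` and collect unexpected exceptions."""
    rng = random.Random(seed)  # fixed seed keeps runs reproducible
    failures = []
    for _ in range(iterations):
        length = rng.randint(0, 20)
        raw = "".join(rng.choice(string.printable) for _ in range(length))
        try:
            target(raw)
        except ValueError:
            pass  # expected: input was rejected cleanly
        except Exception as exc:  # anything else is a potential bug
            failures.append((raw, exc))
    return failures
```

An empty result from `fuzz(parse_quantity)` means every malformed input was rejected in the expected way.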

Regression Testing

Ensures that new updates or patches do not reintroduce old vulnerabilities. Every time changes are made, previously fixed issues must be rechecked.

This prevents security gaps from reappearing due to code modifications. It also maintains stability while allowing continuous improvements.

Continuous Integration Security Checks

Integrating security checks into the CI/CD pipeline allows teams to detect vulnerabilities automatically with every code change, reducing manual effort and improving consistency.

This approach shifts security closer to development, making it easier to fix issues quickly. It also promotes a culture of shared responsibility for security.

API environments are constantly changing due to new features, updates, and integrations. Each change introduces the possibility of new vulnerabilities, even if existing controls are strong. Occasional testing may miss these gaps, leaving systems exposed for long periods.

Continuous testing ensures that security is evaluated at every stage, from development to deployment. It allows teams to detect and fix issues early, reducing both risk and cost.

Follow the OWASP API Security Top 10 Guidelines

The OWASP API Security Top 10 is a globally recognized framework that highlights the most critical risks facing APIs. Following these guidelines ensures that organizations address the most common and dangerous vulnerabilities, reducing the likelihood of breaches.

They serve as a roadmap for developers and security teams to prioritize defenses and build APIs that are resilient against real‑world threats. By aligning with OWASP, teams can standardize their security practices, improve awareness, and meet compliance requirements.

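These guidelines are updated periodically to reflect evolving attack patterns, making them a reliable foundation for long‑term API protection. Most of the category names below come from the 2019 edition; the 2023 edition renames or merges several of them.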

Broken Object Level Authorization

Highlights the need for strict checks to prevent unauthorized access to data objects. APIs must verify that users can only access resources they own or are permitted to view.

Failing to enforce these checks can allow attackers to retrieve or modify other users' data. Consistent validation at the object level is essential to prevent data leaks.
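A minimal sketch of an object-level check is shown below. The `Invoice` type, the in-memory `INVOICES` store, and `get_invoice` are hypothetical stand-ins for a real data layer. What matters is that ownership is compared on every lookup, not only at login.

```python
from dataclasses import dataclass


class Forbidden(Exception):
    """Raised when the caller may not access the requested object."""


@dataclass(frozen=True)
class Invoice:
    invoice_id: int
    owner_id: int
    total_cents: int


# Hypothetical in-memory store standing in for a database table.
INVOICES = {
    1: Invoice(invoice_id=1, owner_id=10, total_cents=4200),
    2: Invoice(invoice_id=2, owner_id=20, total_cents=9900),
}


def get_invoice(current_user_id: int, invoice_id: int) -> Invoice:
    """Return the invoice only if it belongs to the authenticated caller."""
    invoice = INVOICES.get(invoice_id)
    # Same error for "missing" and "not yours" so callers cannot probe for valid IDs.
    if invoice is None or invoice.owner_id != current_user_id:
        raise Forbidden("invoice not available")
    return invoice
```

Here user 10 can read invoice 1, but changing the ID in the request to 2 raises `Forbidden` instead of returning another user's data.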

Broken Authentication

Emphasizes strong authentication mechanisms to stop attackers from impersonating users. Weak authentication flows or poorly managed tokens can be exploited to gain unauthorized access.

Secure session handling and proper credential protection are critical. Regular reviews of authentication logic help prevent misuse.
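To illustrate two of the basics, expiry and tamper detection, here is a simplified signed-token sketch built only on the Python standard library. The token format, `SECRET_KEY`, `issue_token`, and `verify_token` are assumptions made for this example. A production system would more likely rely on an established standard and a maintained library than on a hand-rolled scheme.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

SECRET_KEY = b"replace-with-a-key-from-your-secret-store"  # placeholder, not a real key


def _sign(payload: bytes) -> str:
    """Hex-encoded HMAC-SHA256 of the payload."""
    return hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()


def issue_token(user_id: str, ttl_seconds: int, now: Optional[float] = None) -> str:
    """Create a token of the form <base64 payload>.<hex signature>."""
    issued_at = time.time() if now is None else now
    payload = json.dumps({"sub": user_id, "exp": issued_at + ttl_seconds}).encode()
    encoded = base64.urlsafe_b64encode(payload).decode()
    return f"{encoded}.{_sign(payload)}"


def verify_token(token: str, now: Optional[float] = None) -> Optional[str]:
    """Return the user id for a valid, unexpired token, otherwise None."""
    try:
        encoded, signature = token.split(".")
        payload = base64.urlsafe_b64decode(encoded.encode())
    except ValueError:  # wrong number of parts or malformed base64
        return None
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(_sign(payload).encode(), signature.encode()):
        return None
    claims = json.loads(payload)
    current = time.time() if now is None else now
    if claims["exp"] <= current:
        return None  # expired tokens are rejected even if correctly signed
    return claims["sub"]
```

The signature is checked before the payload is parsed, so a modified token is rejected without its contents ever being trusted.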

Excessive Data Exposure

Warns against returning unnecessary or sensitive data in API responses. APIs should only return the data required for a specific request, nothing more. Exposing extra fields can give attackers insights into the system or access to confidential information.

Careful response filtering reduces this risk.
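Response filtering can be as simple as building every response from an explicit allowlist. In this sketch, `PUBLIC_USER_FIELDS` and `to_public_user` are hypothetical names, and the record is a plain dictionary standing in for a database row.

```python
PUBLIC_USER_FIELDS = ("id", "display_name", "avatar_url")  # hypothetical allowlist


def to_public_user(record: dict) -> dict:
    """Build the response from an explicit allowlist instead of the full record."""
    return {field: record[field] for field in PUBLIC_USER_FIELDS if field in record}
```

Because the function names what may leave the system, a column added to the table later (for example a password hash) stays out of responses by default.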

Lack of Resources & Rate Limiting

Stresses the importance of controlling API usage to prevent abuse and service disruption. Without limits, attackers can flood APIs with requests, causing performance issues or downtime.

Rate limiting helps manage traffic and ensures fair usage. It also protects backend systems from overload.
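One common way to implement such limits is a token bucket. The sketch below is a minimal single-process version. The `TokenBucket` class and its parameters are illustrative, and a real deployment would usually keep one bucket per client in a store shared across servers.

```python
import time


class TokenBucket:
    """Allow bursts of up to `capacity` requests, refilled at a steady rate."""

    def __init__(self, capacity: int, refill_per_second: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._clock = clock  # injectable so the limiter can be tested without sleeping
        self._tokens = float(capacity)
        self._last = clock()

    def allow(self) -> bool:
        """Consume one token if available and report whether the request may proceed."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Refill for the time that has passed, never beyond the bucket's capacity.
        self._tokens = min(self.capacity, self._tokens + elapsed * self.refill_per_second)
        if self._tokens >= 1:
            self._tokens -= 1
            return True
        return False
```

When `allow()` returns `False`, the API would typically answer with HTTP 429 (Too Many Requests) rather than passing the call to backend systems.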

Broken Function Level Authorization

Requires enforcing permissions consistently across all functions and endpoints. Even if users are authenticated, they should not be able to perform actions outside their role.

Missing checks at the function level can allow privilege escalation. Proper validation ensures that each action is authorized.
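A function-level check can be expressed as a decorator so that the required role is declared next to each sensitive operation. The names below (`require_role`, `delete_account`, and the `role` key on the user dictionary) are assumptions for illustration, not any particular framework's API.

```python
from functools import wraps


class PermissionDenied(Exception):
    """Raised when an authenticated user lacks the role for an action."""


def require_role(*allowed_roles: str):
    """Decorator that rejects callers whose role is not in allowed_roles."""

    def decorator(func):
        @wraps(func)
        def wrapper(user: dict, *args, **kwargs):
            if user.get("role") not in allowed_roles:
                raise PermissionDenied(f"{func.__name__} requires one of {allowed_roles}")
            return func(user, *args, **kwargs)

        return wrapper

    return decorator


@require_role("admin")
def delete_account(user: dict, account_id: int) -> str:
    """Hypothetical admin-only operation."""
    return f"account {account_id} deleted"
```

An authenticated user with the role `member` who calls `delete_account` gets `PermissionDenied`, even if they discovered the endpoint on their own.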

Mass Assignment

Cautions against automatically binding user input to system objects without validation. Attackers can include unexpected fields in requests to modify sensitive properties.

This can lead to unauthorized changes in the system. Explicitly defining allowed fields helps prevent such manipulation.
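The sketch below shows one way to define allowed fields explicitly. `UserProfile`, `UPDATABLE_FIELDS`, and `apply_profile_update` are hypothetical. The key choice is that the request body is checked against an allowlist before anything is copied onto the object.

```python
from dataclasses import dataclass


@dataclass
class UserProfile:
    display_name: str
    email: str
    is_admin: bool = False  # sensitive: must never be set from client input


UPDATABLE_FIELDS = {"display_name", "email"}  # hypothetical allowlist


def apply_profile_update(profile: UserProfile, payload: dict) -> UserProfile:
    """Apply only allowlisted fields and reject anything unexpected."""
    unexpected = set(payload) - UPDATABLE_FIELDS
    if unexpected:
        raise ValueError(f"fields not allowed: {sorted(unexpected)}")
    for name, value in payload.items():
        setattr(profile, name, value)
    return profile
```

A request that includes `"is_admin": true` alongside a new display name is rejected as a whole, so the sensitive flag cannot be changed as a side effect.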

Security Misconfiguration

Highlights the risks of default settings, incomplete configurations, or exposed endpoints. Misconfigured servers, open ports, or unnecessary services can create easy entry points for attackers.

Regular audits and secure defaults reduce these risks. Configuration management plays a key role in maintaining security.
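Part of such an audit can be automated. The following sketch checks a configuration dictionary for a few risky settings. The keys (`debug`, `cors_allowed_origins`, `tls_enabled`, `admin_password`) are invented for this example and would need to be mapped to whatever your framework or gateway actually uses.

```python
from typing import List

DEFAULT_PASSWORDS = {"admin", "password", "changeme"}


def audit_config(config: dict) -> List[str]:
    """Return human-readable findings for risky settings in a config dict."""
    findings = []
    if config.get("debug", False):
        findings.append("debug mode is enabled")
    if "*" in config.get("cors_allowed_origins", []):
        findings.append("CORS allows any origin")
    # A missing key is treated as insecure so that the safe choice must be explicit.
    if not config.get("tls_enabled", False):
        findings.append("TLS is not enabled")
    if config.get("admin_password") in DEFAULT_PASSWORDS:
        findings.append("default admin password is in use")
    return findings
```

An empty list means none of these specific checks fired. It does not mean the deployment is correctly configured overall.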

Injection Attacks

Focuses on preventing malicious input from compromising databases or systems. Attackers may inject harmful code into API inputs to execute unintended commands.

Proper validation and sanitization are essential to block these attempts. Using safe query methods further reduces exposure.
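As an example of a safe query method, the sketch below uses a parameterized query with Python's built-in `sqlite3` module. The `users` table, `make_demo_db`, and `find_user` are made up for the demonstration.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with a small, hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.executemany(
        "INSERT INTO users (id, username) VALUES (?, ?)",
        [(1, "alice"), (2, "bob")],
    )
    return conn


def find_user(conn: sqlite3.Connection, username: str) -> list:
    """Look up a user with a bound parameter instead of string concatenation."""
    # The driver passes `username` as data, so quotes in it cannot alter the SQL.
    cursor = conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    )
    return cursor.fetchall()
```

With this approach, an input such as `' OR '1'='1` is compared literally against the `username` column and matches no rows, instead of rewriting the query's logic.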

Improper Assets Management

Stresses the need to track and secure all API versions and endpoints. Unused or outdated APIs can remain exposed without proper protection. Attackers often target these forgotten endpoints as they are less monitored. Maintaining an up-to-date inventory helps close these gaps.
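An inventory check can start as a comparison between what is documented and what actually receives traffic. In the sketch below both inputs are assumptions: `documented` might come from an API specification and `observed` from gateway logs, and `compare_inventory` is a hypothetical helper.

```python
from typing import Dict, List, Set, Tuple

Endpoint = Tuple[str, str]  # (HTTP method, path)


def compare_inventory(
    documented: Set[Endpoint], observed: Set[Endpoint]
) -> Dict[str, List[Endpoint]]:
    """Report endpoints that receive traffic but are undocumented, and vice versa."""
    return {
        # Live but missing from the inventory: candidates for forgotten or shadow APIs.
        "undocumented": sorted(observed - documented),
        # Documented but never called: candidates for retirement.
        "unused": sorted(documented - observed),
    }
```

For instance, if the logs show calls to `GET /v1/users` while only `/v2` endpoints are documented, the old version appears under `undocumented` and can be secured or retired.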

Insufficient Logging & Monitoring

Without proper visibility, attacks can go unnoticed until significant damage has already occurred. Logging keeps a detailed record of API activity, while monitoring helps identify unusual patterns early.

Together, logging and monitoring give teams the visibility to detect threats, act quickly, and reduce the impact of potential incidents.
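The two halves can be sketched together: a structured audit record for every request, and a simple rule that flags clients with repeated authorization failures. The logger name, event fields, and threshold are illustrative assumptions, and a real system would normally send these events to a dedicated monitoring platform.

```python
import json
import logging
from collections import Counter
from typing import Iterable, List

logger = logging.getLogger("api.audit")  # hypothetical logger name


def log_request(client_id: str, method: str, path: str, status: int) -> dict:
    """Record one API call as a structured (JSON) log line and return the event."""
    event = {"client_id": client_id, "method": method, "path": path, "status": status}
    logger.info(json.dumps(event))
    return event


def clients_over_threshold(events: Iterable[dict], threshold: int) -> List[str]:
    """Return clients with at least `threshold` 401/403 responses in the events."""
    failures = Counter(e["client_id"] for e in events if e["status"] in (401, 403))
    return sorted(client for client, count in failures.items() if count >= threshold)
```

Structured records make this kind of rule easy to write, because each field can be filtered on directly instead of being parsed out of free-form text.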

By embedding these OWASP principles into API design and maintenance, organizations can systematically reduce risks and strengthen their overall security posture.

Build API Security Into the Development Lifecycle

API security cannot be treated as an afterthought or a separate process. To be effective, it must be integrated directly into the development lifecycle, ensuring that security considerations are addressed from design through deployment.

This approach reduces the likelihood of vulnerabilities slipping into production and makes security a natural part of building and maintaining APIs.

By embedding security practices into each stage, teams can identify risks early, enforce consistent standards, and create APIs that are resilient by design.

This proactive mindset also fosters collaboration between developers and security teams, ensuring that functionality and protection evolve together.

Secure API Design

Security should begin at the design stage, where decisions around authentication, authorization, and data exposure are made. Designing APIs with least-privilege access and clear data boundaries helps prevent misuse later.

Early planning also ensures that sensitive operations are protected by default.

A strong design foundation reduces the need for major security changes after development.

Security Reviews During Development

Regular security reviews help identify issues while the code is still being written. These reviews can include code inspections, peer reviews, and architecture evaluations.

Catching vulnerabilities early makes them easier and less expensive to fix.

It also ensures that security standards are consistently followed across the project.

Automated Security Checks in Pipelines

Integrating security checks into CI/CD pipelines allows teams to detect vulnerabilities automatically with every code change. These checks can include dependency scanning, static analysis, and configuration validation.

Automated testing provides consistent coverage without slowing down development. When a failing check blocks the merge or release, it also keeps code with known issues from being deployed.
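A pipeline gate can be a short script that reads a scanner's report and fails the build when serious findings are present. The report shape used here (a `findings` list with `id`, `severity`, and `title`) is an assumption for illustration. Real scanners each have their own output format.

```python
from typing import List

BLOCKING_SEVERITIES = {"high", "critical"}  # hypothetical policy


def blocking_findings(report: dict) -> List[dict]:
    """Return the findings severe enough to stop a deployment."""
    return [
        finding
        for finding in report.get("findings", [])
        if finding.get("severity", "").lower() in BLOCKING_SEVERITIES
    ]


def gate_exit_code(report: dict) -> int:
    """Print blocking findings and return a process exit code (0 = pass, 1 = fail)."""
    blockers = blocking_findings(report)
    for finding in blockers:
        print(f"{finding['severity'].upper()}: {finding['id']} - {finding['title']}")
    return 1 if blockers else 0
```

A pipeline step would load the scanner's JSON output, pass it to `gate_exit_code`, and exit with the returned value, since most CI systems treat a non-zero exit code as a failed step.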

Security-First Development Culture

A security-first mindset encourages developers to think about risks as part of their daily work. This includes following secure coding practices, staying aware of common threats, and taking ownership of security outcomes.

Training and clear guidelines help reinforce this approach. When security becomes part of the culture, it leads to better decisions at every stage of development.

By building security into the lifecycle, organizations move from reactive fixes to proactive resilience, ensuring APIs remain safe, reliable, and aligned with business needs.

Conclusion

APIs have become the backbone of modern software systems, enabling communication between applications, services, and users at every level.

Their widespread use has made them essential for building connected and efficient platforms, but it has also increased the importance of securing them properly. As APIs continue to handle critical operations and sensitive data, protecting them is no longer optional.

Security must be implemented at every stage of development, from initial design to deployment and ongoing maintenance. Practices such as strong authentication, proper authorization controls, continuous monitoring, and regular security testing form the foundation of a secure API ecosystem.

Each layer plays a role in reducing risk and ensuring that APIs function safely under real-world conditions.

API security is not a one-time implementation but an ongoing process. As systems evolve and new threats emerge, security measures must be reviewed, updated, and improved continuously.

Organizations that treat API security as a continuous effort will be better equipped to protect their systems, maintain trust, and adapt to changing challenges.