MVP Cost Breakdown for 2026: A Complete Guide

MVP development cost in 2026 typically ranges between $5,000 and $150,000+, depending on product complexity, team structure, and technical requirements. Simple MVPs can be built in a few weeks using lean tools and minimal infrastructure, while complex, compliance-heavy or AI-integrated products require months of development and significantly higher investment.

Building an MVP without a structured cost framework is one of the fastest ways to misallocate capital. Development teams start broad, scope expands quietly, and budgets collapse before the product reaches its first real user.

What Is an MVP and Why Does the Definition Affect Your Budget?

A Minimum Viable Product (MVP) is the smallest functional version of a product that can deliver value to early users and generate evidence to inform the next development decision. The operative word is "viable": the product is minimal in scope relative to what needs to be tested, not minimal in quality.

This distinction has direct budget implications. A product team building a feature set based on assumptions about what users need will always overbuild. One building toward a defined hypothesis and committing to building only what tests that hypothesis will spend less and learn faster.

MVP vs Prototype vs PoC: A Budget-Critical Distinction

These three terms are frequently used interchangeably in planning conversations, but they carry different cost profiles and serve different strategic purposes.

A PoC answers, "Can we build this?" A prototype answers, "Do users understand this?" An MVP answers, "Will users pay for this, return to it, or recommend it?" Misidentifying which artifact you actually need is one of the most common causes of MVP budget overruns; teams build a full MVP when a PoC or prototype would have answered the question at a fraction of the cost.

Dropbox validated demand with a demo video before writing a line of production code. Airbnb tested their core hypothesis with a basic website and real listings before investing in platform infrastructure. The evidence required to make the next decision does not always require a production-ready MVP.

How Much Does It Cost to Build an MVP in 2026?

MVP development costs in 2026 span a wide range depending on product type, team structure, and technical scope. The realistic range runs from $5,000 for a no-frills prototype to over $150,000 for a feature-rich, production-ready product. Understanding which tier your product falls into is the first planning decision, not the last.

MVP Cost Overview by Complexity

The difference between tiers is not feature volume; it is architectural depth. A simple MVP can be assembled from existing tools and minimal custom code. A complex MVP requires custom infrastructure, compliance-grade security, third-party integrations, and a QA cycle rigorous enough to support real business operations from day one.

Insight: The most common budgeting mistake is treating an MVP as a simplified version of a full product. An MVP is a validation vehicle: cost should align with the evidence you need to collect, not the product you eventually want to ship.

MVP Development Cost by Industry Vertical

Product category shapes cost as significantly as product complexity. The compliance requirements, integration depth, and user experience standards vary substantially across verticals, and each of those variables carries a cost implication.

FinTech and healthcare MVPs carry the highest cost floors because compliance is structural, not optional. A FinTech MVP that skips PCI-DSS alignment or a healthcare app that ignores HIPAA will not be deployable to paying customers regardless of technical quality.

Why MVP Budget Planning Is Critical for Cost Control and Product Success

Founders often approach MVP budgeting as a cost-control exercise. The more useful frame is risk-adjusted capital deployment: how much investment is required to generate evidence that de-risks the next funding or development decision.

Resource Allocation and Feature Prioritization

Every dollar spent on an MVP feature is a dollar not spent on user research, go-to-market, or the iteration that follows launch. Feature prioritization directly determines whether development spend produces strategic clarity or just more software.

Teams that allocate budget based on assumption rather than validated user need consistently overbuild their MVPs. The result is a product that costs significantly more than planned and delivers less evidence than expected. Budget allocation must trace back to specific hypotheses being tested.

Setting Realistic Timelines and Expectations

Timeline and budget are not independent variables. Compressing the timeline of a complex MVP increases cost through overtime, parallel workstreams, and reduced QA cycles. Extending the timeline without scope control increases burn rate without proportional value gain.

The correct approach is to set a fixed hypothesis budget (the minimum spend required to test a defined set of product assumptions) and build the timeline around that scope. Every timeline decision should be framed against its impact on burn rate and validation cycles.

Risk Mitigation and Iterative Validation

An MVP that costs $80,000 to build but takes 10 months to reach users is not low-risk. Risk in MVP development is a function of time-to-evidence, not just cost-to-build. The longer it takes to get real user feedback, the more the product is built on assumption.

Structuring development into short validation cycles, each with defined success metrics, limits the financial exposure of building in the wrong direction. This is why iterative delivery models outperform waterfall approaches in early-stage product budgets.

Designing for Scalability from Day One

Scalability is not a post-MVP concern. Architectural decisions made at the MVP stage, such as database design, API structure, and authentication model, either support or obstruct the technical roadmap that follows.

Rebuilding a poorly designed MVP is consistently more expensive than building a scalable foundation upfront. The cost delta between a throwaway MVP architecture and a production-grade one is often 15-25% of total development cost, an investment that typically pays for itself in the first scaling cycle.

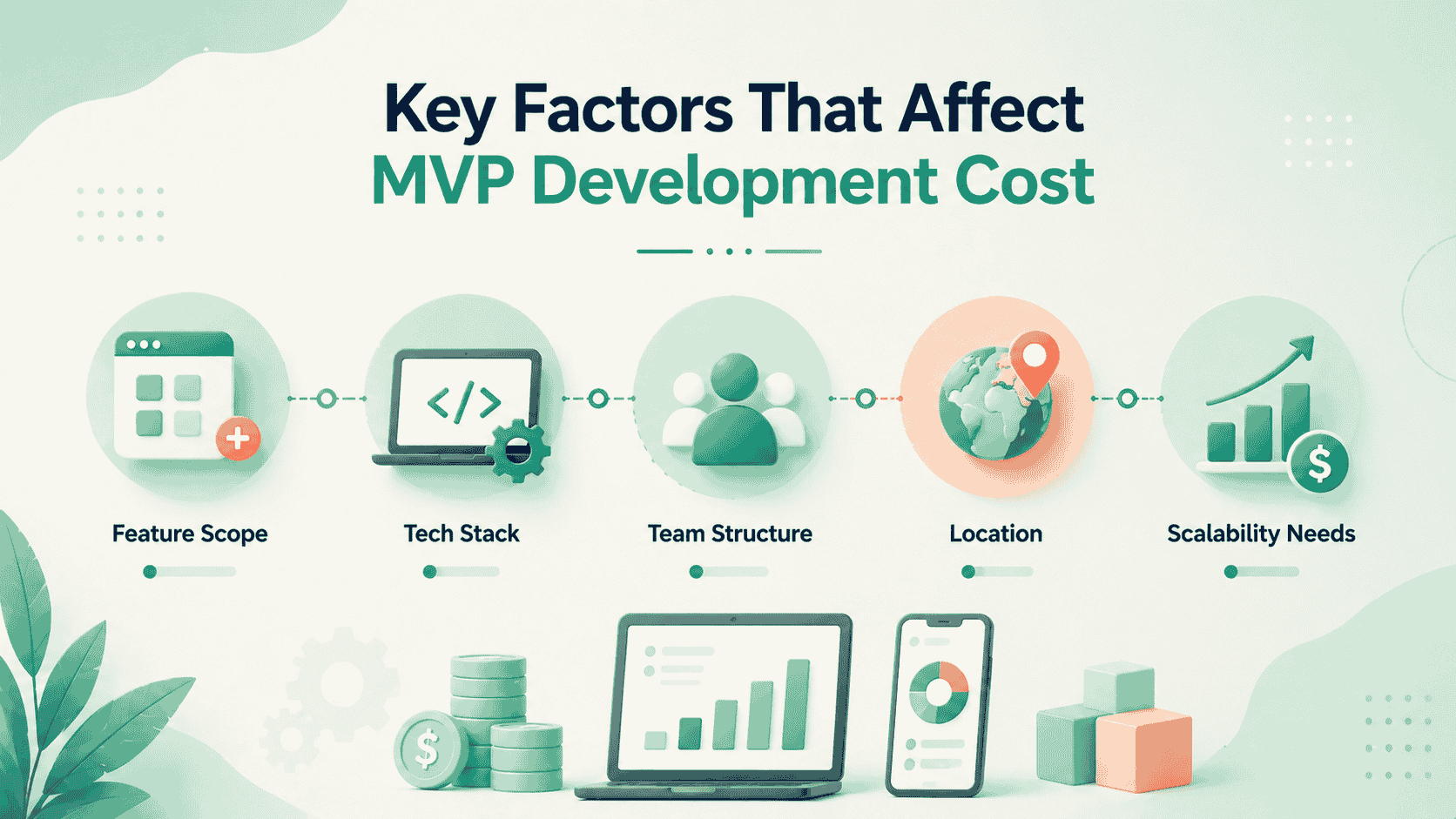

Key Factors That Influence MVP Development Costs

No two MVPs carry the same cost profile. The variables below determine where your project lands within any given cost range and which ones you can control.

Product Complexity and Feature Scope

Feature scope is the single largest cost driver in MVP development. Every additional feature adds design, development, integration, and testing work. A product with five core features does not simply cost five times as much as a one-feature product, because dependencies between features compound development time nonlinearly.

The discipline of scope control, building what tests the hypothesis and nothing more, is the most effective cost management tool available to a product team. Teams that defer non-essential features consistently deliver MVPs on budget.

Technology Stack and Architecture

Stack selection affects both development velocity and cost. Well-supported frameworks with large developer communities, such as React, Node.js, Python, and Flutter, carry lower implementation costs because talent is abundant and tooling is mature, though the choice between them carries long-term architectural consequences worth evaluating against your product type.

Niche or highly specialized stacks reduce the available talent pool and increase hourly rates. Architecture decisions have a compounding effect on cost. Microservices architectures offer scalability but add DevOps overhead that is unnecessary for most MVPs.

A well-structured monolith is typically the correct choice for early-stage products, with service decomposition reserved for proven, high-growth components.

Cost Comparison between Web, Mobile, and Cross-Platform

For most MVPs, cross-platform development or a web-first approach is the cost-effective choice. Within cross-platform, Flutter and React Native differ in performance, ecosystem, and long-term maintenance, so the choice between them should be weighed against the product's requirements.

Native development should be reserved for products where hardware access, performance requirements, or App Store presence are validated business requirements, not preferences.

Development Team Structure

A full-stack MVP team typically includes a project manager, one or two full-stack developers, a UI/UX designer, and a QA engineer. Expanding the team beyond this baseline for an MVP-stage product increases coordination overhead without proportional output gains.

Team structure also affects how cost scales with scope changes. Larger teams have higher fixed weekly costs; smaller teams are more efficient for tightly scoped MVPs but can bottleneck if scope expands mid-project.

MVP Developer Cost by Location: Hourly Rates by Region

Location-based cost differences are significant, but the more important variable is output quality relative to rate. A development team at $40/hour that requires three revision cycles delivers less value than a team at $60/hour that delivers accurately scoped, well-documented code on the first pass.
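The rate comparison above can be made concrete with a small calculation. The following is a rough sketch, not a pricing model: the 500 base hours and the assumption that each revision cycle redoes 25% of the work are hypothetical values chosen only for illustration, and `delivered_cost` and `break_even_rework_share` are illustrative names.

```python
def delivered_cost(rate: float, base_hours: float,
                   revision_cycles: int, rework_share: float) -> float:
    """Total billed cost when each revision cycle redoes a share of the work.

    rework_share is an ASSUMED fraction of base_hours repeated per cycle.
    """
    return rate * base_hours * (1 + revision_cycles * rework_share)


def break_even_rework_share(low_rate: float, high_rate: float,
                            revision_cycles: int) -> float:
    """Rework share per cycle at which the cheaper team costs the same."""
    return (high_rate / low_rate - 1) / revision_cycles


# Hypothetical project: 500 base hours, 25% rework per revision cycle.
cheaper_team = delivered_cost(40, 500, revision_cycles=3, rework_share=0.25)
pricier_team = delivered_cost(60, 500, revision_cycles=0, rework_share=0.25)

print(f"$40/hour team with three revision cycles: ${cheaper_team:,.0f}")
print(f"$60/hour team, first-pass delivery:       ${pricier_team:,.0f}")
print(f"Break-even rework share: {break_even_rework_share(40, 60, 3):.1%}")
```

Under these assumptions the lower-rate team ends up more expensive. The break-even figure shows how sensitive that conclusion is to the rework share, which is the number worth estimating before comparing quotes on hourly rate alone.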

UI/UX Design Complexity Cost

Design complexity is frequently underestimated in MVP budgets. Basic UI with standard component libraries costs $2,000-$8,000. Custom design systems with original visual identity, complex user flows, and multiple device breakpoints cost $10,000-$30,000 or more.

For most MVPs, investing in functional UX clarity over visual polish is the correct tradeoff. Users forgive aesthetic limitations more readily than they forgive confusing workflows. The design budget should be allocated to interaction logic first and visual refinement second.

Cost of Third-Party Integrations and APIs

Each integration, whether a payment gateway, authentication provider, CRM platform, mapping service, or analytics SDK, adds development, testing, and maintenance costs. A product requiring three to five integrations adds an estimated $5,000-$20,000 to the total development cost depending on API complexity and documentation quality.

Integration cost is often invisible in early estimates because it surfaces during development rather than scoping. Teams that inventory integrations upfront and assess the quality of third-party documentation before committing budget produce more accurate estimates.

Security and Compliance Requirements

Standard security practices (encrypted storage, secure authentication, and HTTPS enforcement) are baseline and should be included in any MVP budget. Products handling financial data, health records, or personally identifiable information at scale carry additional compliance obligations.

GDPR-compliant data handling, SOC 2 alignment, and HIPAA-compliant infrastructure add $5,000 to $25,000+ to MVP development costs depending on implementation depth. Treating compliance as a post-launch concern is a structural risk: retrofitting security architecture after deployment consistently costs more than building it correctly from the outset.

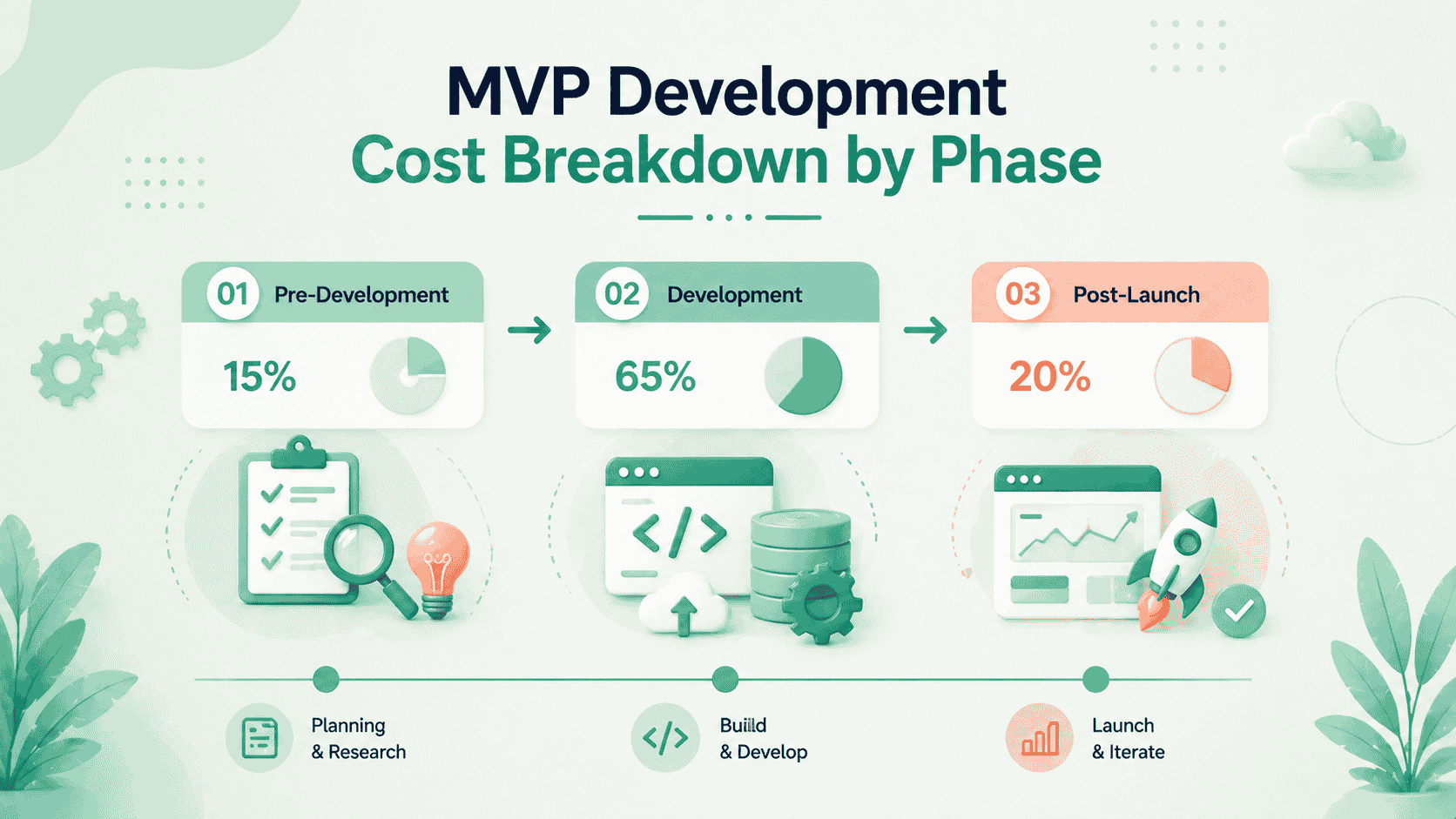

MVP Development Cost Breakdown by Phase

MVP development costs are not evenly distributed. Each phase carries a different cost profile, and underestimating even one of them can distort the entire budget. Understanding how investment shifts across these stages is essential for accurate planning.

Pre-Development Cost (Research, Validation, and Design)

Before any code is written, cost is driven by clarity. This phase includes market research, user interviews, competitive analysis, product requirement definition, wireframing, and UX prototyping.

In most MVPs, pre-development typically accounts for $3,000 to $25,000 of the total budget and is completed within a few weeks, depending on research depth and stakeholder alignment. Despite being the lowest-cost phase, its impact on overall efficiency is disproportionately high.

Teams that skip structured validation often compensate by overspending during development, building features that do not map to real user needs. Lack of market demand is a commonly cited reason startups fail, and this phase is designed to surface that risk early.

Spending a fraction of the budget validating assumptions is consistently more cost-efficient than correcting a misaligned product after development begins.

MVP Development Cost (Frontend, Backend, QA, Integrations)

This phase represents the largest share of MVP investment, typically ranging from $15,000 to $120,000, with timelines extending from several weeks to multiple months depending on product complexity.

It includes frontend implementation, backend system design, API development, database architecture, third-party integrations, and quality assurance. Cost does not scale linearly with features; it increases based on dependencies, integrations, and system complexity.

A typical cost distribution within this phase follows a structured pattern:

- Frontend implementation: 25-30%

- Backend systems and APIs: 35-45%

- Third-party integrations: 10-20%

- QA and testing: 15-20%

Quality assurance is often under-allocated, but reducing QA spend increases downstream costs through bug fixes, performance issues, and delayed releases.
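To see what these percentage ranges mean in dollars, the sketch below applies them to a development-phase budget. The $60,000 figure is a hypothetical input chosen for illustration, and `allocate` is an illustrative helper name.

```python
# Share ranges (low, high) taken from the distribution listed above,
# expressed as fractions of the development-phase budget.
PHASE_SHARES = {
    "Frontend implementation": (0.25, 0.30),
    "Backend systems and APIs": (0.35, 0.45),
    "Third-party integrations": (0.10, 0.20),
    "QA and testing": (0.15, 0.20),
}


def allocate(budget: float) -> dict[str, tuple[int, int]]:
    """Return the (low, high) dollar range for each work area."""
    return {
        area: (round(budget * low), round(budget * high))
        for area, (low, high) in PHASE_SHARES.items()
    }


if __name__ == "__main__":
    # Hypothetical development-phase budget, for illustration only.
    for area, (low, high) in allocate(60_000).items():
        print(f"{area}: ${low:,} to ${high:,}")
```

Note that the low ends of the ranges sum to 85% and the high ends to 115%, so a real plan has to pick one point inside each range such that the four shares total 100%.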

The execution model also affects cost efficiency. Agile sprint cycles keep development transparent and measurable, allowing teams to track budget consumption in real time and adjust scope before overruns occur.

Post-Launch MVP Costs (Maintenance, Scaling, Infrastructure)

Once the MVP is live, costs shift from development to operations. Post-launch expenses typically range from $2,000 to $15,000 per month, depending on infrastructure usage, user growth, and iteration velocity.

This phase includes hosting, monitoring, bug resolution, performance optimization, and ongoing feature improvements driven by user feedback. Unlike development, these are recurring costs that scale with product adoption.

Post-launch is the most consistently underestimated part of MVP budgeting. Teams often allocate heavily to development but leave minimal budget for maintenance, creating a gap that leads to technical debt and stalled product growth.

A more effective approach is to treat post-launch investment as a continuation of the MVP lifecycle. Allocating a portion of the original budget toward iteration and infrastructure ensures the product remains stable, scalable, and aligned with user needs.

Most MVP budgets fail not during development, but after launch when ongoing costs are underestimated or ignored.
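One way to keep post-launch costs visible is to add them to the build estimate from the start. The sketch below combines the three phase ranges quoted in this section; the number of months live is an assumption you supply, and `total_cost_range` is an illustrative name rather than an established formula.

```python
# (low, high) ranges in USD, as quoted in the phase breakdown above.
PRE_DEVELOPMENT = (3_000, 25_000)
DEVELOPMENT = (15_000, 120_000)
POST_LAUNCH_MONTHLY = (2_000, 15_000)


def total_cost_range(months_live: int) -> tuple[int, int]:
    """Sum the one-off phase costs plus recurring post-launch cost."""
    low = (PRE_DEVELOPMENT[0] + DEVELOPMENT[0]
           + POST_LAUNCH_MONTHLY[0] * months_live)
    high = (PRE_DEVELOPMENT[1] + DEVELOPMENT[1]
            + POST_LAUNCH_MONTHLY[1] * months_live)
    return low, high


if __name__ == "__main__":
    # Compare the build-only figure with six and twelve months of operation.
    for months in (0, 6, 12):
        low, high = total_cost_range(months)
        print(f"{months:>2} months live: ${low:,} to ${high:,}")
```

Comparing the zero-month row with the later rows shows how quickly recurring costs approach, and can exceed, the original build cost.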

MVP Development Cost Based on Team Structure

The team model you choose shapes not only development cost but also quality, communication overhead, and long-term flexibility.

Team Model Comparison

In-House MVP Development Cost and When to Choose It

In-house teams offer the highest level of product context, communication quality, and intellectual property control. Because the team operates within the company, decision-making is faster and alignment with long-term product vision is stronger.

However, this model introduces significant fixed overhead. Beyond development, costs extend to hiring, onboarding, infrastructure, and ongoing team management. This makes it the least flexible option in early-stage environments where requirements are still evolving.

In-house development is most effective after product-market fit, when the roadmap is stable and continuous iteration is required. Before that stage, the capital commitment often outweighs the benefits of full control.

Freelancer MVP Development Cost and Risk Factors

Freelancers are best suited for clearly defined, isolated tasks. They provide flexibility and can reduce upfront cost when the scope is limited and well-documented.

The challenge emerges when multiple freelancers are involved across different parts of the product. Without a unified process, coordination becomes fragmented, and inconsistencies in code quality, documentation, and timelines begin to accumulate.

Freelancer-led MVPs work best for simple products or early experiments. As complexity increases, the lack of centralized accountability introduces execution risk that often offsets the initial cost advantage.

Outsourcing MVP Development Cost and Benefits

Outsourcing to an agency provides a structured delivery model with clearly defined processes. Instead of managing individual contributors, product teams work with a single unit responsible for execution, quality, and delivery timelines.

This model reduces coordination overhead and improves predictability. Agencies typically operate with established workflows for planning, development, and testing, which helps control scope and maintain consistency across the product.

The key differentiator is methodology. Agencies with a defined MVP approach, including structured discovery, sprint planning, and validation checkpoints, deliver significantly more reliable outcomes than those operating without a standardized process.

Hybrid MVP Development Model

The hybrid model combines external execution with internal oversight. An external team handles development, while an internal technical lead manages priorities, architecture decisions, and quality control.

This approach balances flexibility with control. It allows startups to move quickly without committing to full in-house costs while still retaining ownership over critical technical decisions.

Hybrid models are most effective when responsibilities are clearly defined. Without clear ownership of decision-making and review processes, coordination gaps can emerge, leading to delays and cost overruns.

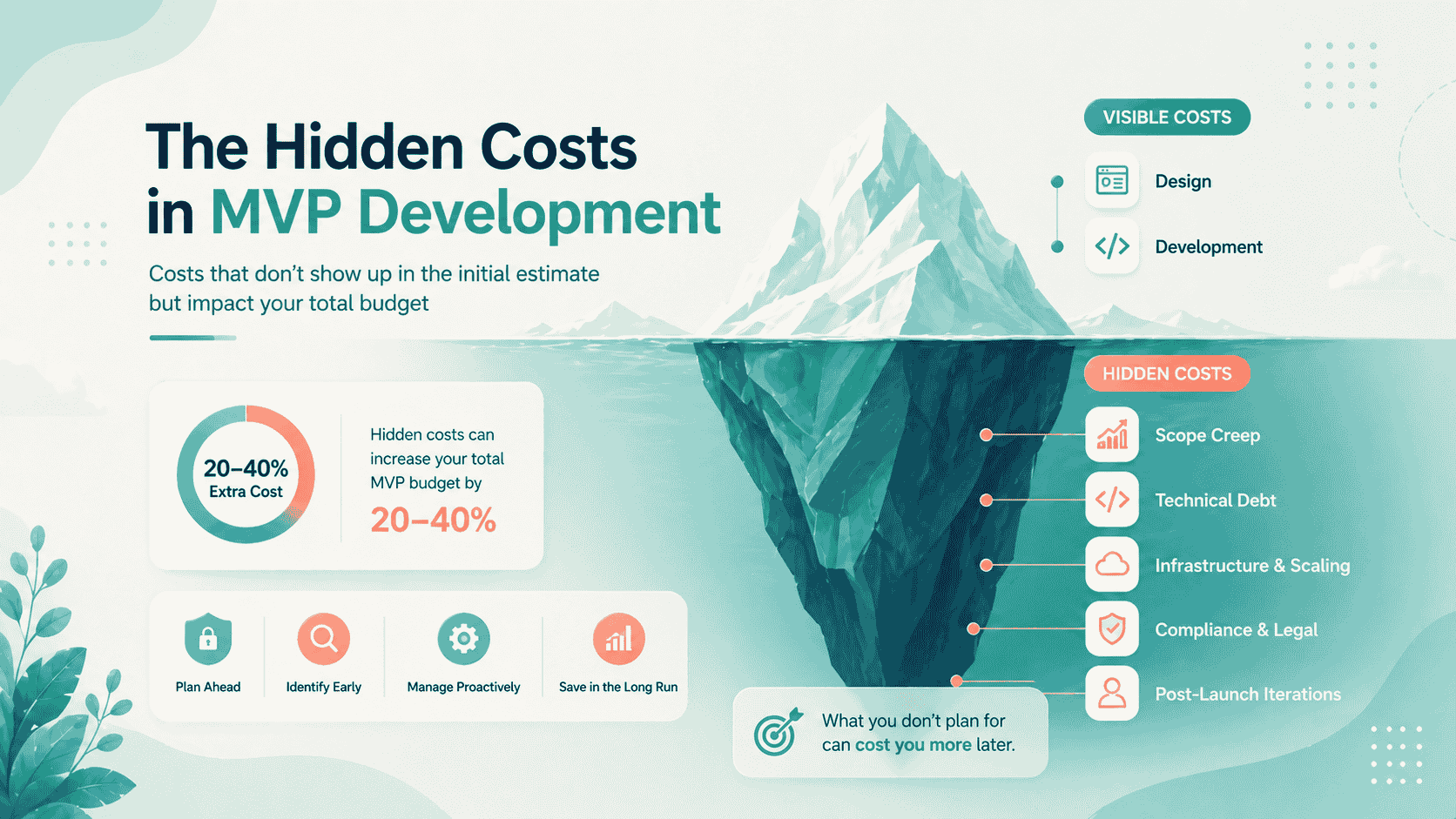

Hidden MVP Development Costs Most Startups Ignore

The cost of building an MVP rarely ends at development. Initial estimates typically capture visible effort such as design, engineering, and testing, but a significant portion of total investment emerges through cost drivers that appear later in the product lifecycle.

These costs are not unexpected; they are simply misaligned with when teams plan for them. As a result, they show up as overruns rather than predictable extensions of the MVP budget.

How Scope Creep Increases MVP Development Cost During Execution

Scope creep is one of the most immediate and consistent drivers of budget expansion in MVP development. It rarely originates from a single major decision. Instead, it accumulates through small feature additions, refinements, and adjustments that individually appear reasonable.

Over time, these incremental changes reshape the product beyond its original scope, increasing both development effort and delivery timelines. In practice, this can expand total cost significantly, often in the range of 20-40% beyond the initial estimate.

The only effective control mechanism is structural. A clearly defined scope, combined with a formal change-request process, ensures that every addition is evaluated against its cost and timeline impact before implementation.
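A formal change-request process can be as simple as a log that evaluates each addition against the baseline budget before it is approved. The sketch below is one minimal way to do that; the `ChangeLog` class, the 10% tolerance, and the example requests are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeLog:
    baseline_budget: float
    max_overrun_pct: float = 10.0  # hypothetical tolerance, set per project
    approved: list = field(default_factory=list)  # (description, cost) pairs

    def overrun_pct(self, extra: float = 0.0) -> float:
        """Approved additions (plus an optional pending one) as % of baseline."""
        total = sum(cost for _, cost in self.approved) + extra
        return 100 * total / self.baseline_budget

    def request(self, description: str, cost: float) -> bool:
        """Approve the change only if cumulative overrun stays within tolerance."""
        if self.overrun_pct(extra=cost) > self.max_overrun_pct:
            return False
        self.approved.append((description, cost))
        return True


# Hypothetical project with a $50,000 baseline.
log = ChangeLog(baseline_budget=50_000)
first = log.request("Export to CSV", 3_000)  # cumulative overrun: 6%
second = log.request("Dark mode", 2_500)     # would push overrun to 11%

print(first, second, f"{log.overrun_pct():.0f}% over baseline")
```

The point of the structure is that each small addition is judged against the cumulative total, not on its own, which is exactly where incremental scope creep tends to escape notice.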

Post-Launch Infrastructure Costs in MVP Development (Scaling Challenges)

Infrastructure decisions made during MVP development are typically optimized for speed and minimal upfront cost. While this supports rapid validation, it does not account for growth.

As usage increases, infrastructure costs scale across multiple dimensions simultaneously, including compute, storage, database operations, and network usage. This growth is rarely linear and can introduce unexpected cost spikes if not anticipated.

Designing infrastructure with scalability in mind from the beginning reduces the need for expensive migrations and reactive fixes. Cost efficiency at scale is largely determined by architectural decisions made during the MVP stage.

Legal and Compliance Costs in MVP Development You Must Plan For

Legal and compliance requirements are often excluded from early MVP budgets, particularly in fast-moving startup environments. However, these costs become unavoidable once the product begins handling user data or enters regulated markets.

Even at a basic level, products require terms of service, privacy policies, and data handling frameworks. In regulated industries, these requirements expand into structured compliance processes that introduce both implementation and operational costs.

Delaying compliance does not eliminate cost; it shifts it. Retrofitting compliance into an existing system is consistently more expensive than integrating it into the product architecture from the outset.

Technical Debt in MVP Development and Its Long-Term Cost Impact

Technical debt is the cost of decisions made to accelerate development at the expense of long-term maintainability. In MVP contexts, some level of technical debt is intentional and can be justified to reduce time-to-market.

The risk emerges when this debt is not tracked or managed. Poor architectural decisions, lack of documentation, and inconsistent implementation standards increase the effort required to scale or modify the product.

Refactoring a system that was not designed for growth can require a substantial portion of the original development investment. Treating technical debt as a managed tradeoff, rather than an unintended consequence, allows teams to control this cost more effectively.

Post-Launch MVP Iteration Costs and Ongoing Development Budget

Launching an MVP is the starting point of the feedback cycle, not the end of development. User behavior, feedback, and performance data generate a continuous stream of improvements that must be implemented to move toward product-market fit.

This iteration process requires sustained engineering effort. Without a dedicated budget, teams struggle to respond to user needs, slowing down product evolution and limiting the value of the MVP itself.

A practical approach is to allocate a portion of the initial development budget, often in the range of 20–30%, specifically for post-launch iteration. This ensures the product remains adaptable and capable of evolving based on real user insights.

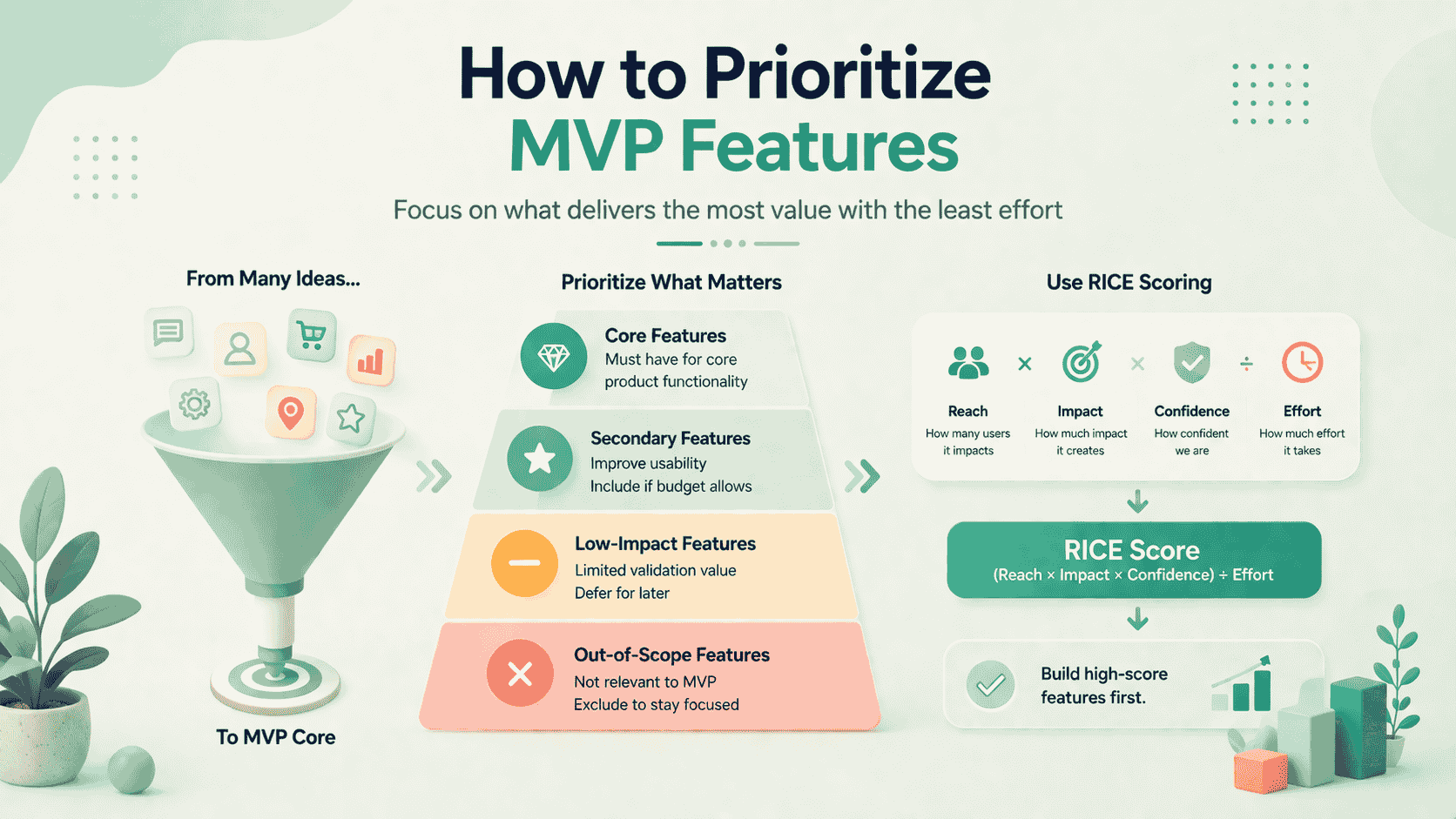

How to Prioritize MVP Features to Maximize Value and Reduce Cost

Feature prioritization is the highest-leverage decision in MVP development. Budget overruns are rarely caused by engineering inefficiency but by allocating resources to features that do not contribute to validating the core product hypothesis.

The objective is not to minimize features, but to ensure that every feature included in the MVP contributes directly to measurable learning.

Applying the MoSCoW Framework for Structured MVP Scope Control

Instead of treating MoSCoW as a classification exercise, it should be used as a decision filter for what enters the MVP scope.

MVP Feature Prioritization Framework (MoSCoW Applied)

This structure shifts the focus from labeling features to making trade-offs explicit.

Most MVP cost overruns originate from secondary features being treated as essential. These additions delay validation while increasing development effort without proportional insight.

Defining exclusion boundaries is equally critical. When features are not explicitly marked as out-of-scope, they tend to re-enter development through incremental decisions.

Using RICE Scoring to Prioritize High-Impact, Cost-Efficient Features

RICE scoring introduces a quantitative layer to prioritization by evaluating features based on expected value relative to implementation effort.

RICE = (Reach × Impact × Confidence) ÷ Effort

This model ensures that features are not prioritized based on perceived importance alone, but on measurable contribution to product validation.

- Reach evaluates how many users the feature affects

- Impact measures its influence on key metrics

- Confidence reflects certainty in estimates

- Effort represents development cost in time

From a cost perspective, RICE helps identify features that deliver the highest value per unit of engineering effort. This prevents overinvestment in complex features that may not generate proportional returns at the MVP stage.
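The formula is straightforward to apply to a backlog. The sketch below scores and ranks three features; the feature names, the numbers, and the choice of person-weeks as the effort unit are hypothetical, chosen only to show the mechanics.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    reach: float       # users affected per period
    impact: float      # relative influence on the key metric
    confidence: float  # certainty in the estimates, 0.0 to 1.0
    effort: float      # development cost in time (here: person-weeks), > 0

    def rice(self) -> float:
        """RICE = (Reach x Impact x Confidence) / Effort."""
        return (self.reach * self.impact * self.confidence) / self.effort


def rank(features: list[Feature]) -> list[Feature]:
    """Sort features from highest to lowest RICE score."""
    return sorted(features, key=lambda f: f.rice(), reverse=True)


# Hypothetical backlog, for illustration only.
backlog = [
    Feature("Email signup", reach=1000, impact=2, confidence=0.8, effort=2),
    Feature("Social login", reach=400, impact=1, confidence=0.5, effort=4),
    Feature("Referral program", reach=300, impact=3, confidence=0.5, effort=6),
]

for feature in rank(backlog):
    print(f"{feature.name}: {feature.rice():.0f}")
```

In this example the referral program outranks social login despite reaching fewer users and costing more effort, because its estimated impact is higher. The scores are only as good as the estimates behind them, which is why confidence is part of the formula.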

A Practical Decision Framework for MVP Feature Selection

Frameworks are only effective when they translate into execution decisions. In MVP development, prioritization should follow a clear rule set:

- Include features that directly validate the core product hypothesis

- Defer features that improve experience without contributing to validation

- Exclude features intended for future scale or non-core user segments

This approach ensures that development effort is aligned with learning objectives rather than feature expansion.
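The rule set above can be written as a small decision function so that every backlog item receives an explicit outcome. This is a sketch under assumptions: the flag names are hypothetical, and both the precedence order and the default for features that match no rule are choices made here, not part of the rules themselves.

```python
from enum import Enum


class Decision(Enum):
    INCLUDE = "include"
    DEFER = "defer"
    EXCLUDE = "exclude"


def decide(validates_hypothesis: bool,
           improves_experience: bool,
           future_scale_or_non_core: bool) -> Decision:
    """Apply the include / defer / exclude rules in a fixed order."""
    if validates_hypothesis:
        return Decision.INCLUDE   # directly validates the core hypothesis
    if future_scale_or_non_core:
        return Decision.EXCLUDE   # future scale or non-core user segment
    if improves_experience:
        return Decision.DEFER     # nice to have, but not validating
    # ASSUMPTION: anything that matches no rule stays out of the MVP.
    return Decision.EXCLUDE


print(decide(True, False, False).value)   # include
print(decide(False, True, False).value)   # defer
print(decide(False, False, True).value)   # exclude
```

Recording the outcome for every feature, including the excluded ones, gives the team an explicit out-of-scope list to point to when a deferred feature resurfaces mid-build.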

How to Reduce MVP Development Costs Without Compromising Quality

Cost reduction in MVP development is not about finding cheaper developers; it is about eliminating waste in scope, process, and tooling.

Use Cross-Platform Frameworks for Mobile Builds

Cross-platform development using Flutter or React Native reduces mobile MVP cost by 30 to 40% compared to building separate native iOS and Android applications. A single codebase, a single QA cycle, and a single deployment pipeline replace what would otherwise be two parallel workstreams.

For most MVPs, the performance ceiling of cross-platform tools is entirely sufficient. Native development should only be selected when hardware integration, platform-specific APIs, or App Store optimization are validated requirements, not assumptions.

Prioritize Open-Source Infrastructure

Open-source tooling eliminates licensing costs across the infrastructure stack. PostgreSQL replaces paid relational databases. Redis handles caching and session management at no licensing cost. Supabase provides a Firebase-equivalent backend. Prometheus and Grafana cover observability.

The discipline is asking not "what tool solves this problem?" but "what open-source tool solves this problem, and what is the real cost of managing it?" Open-source tooling reduces licensing spend but introduces maintenance responsibility, a trade that is almost always favorable at MVP scale.

Apply Agile Methodology with Fixed Sprint Scope

Agile delivery with locked sprint scope prevents the most common source of MVP cost overruns: continuous scope expansion during development. Agile delivery models keep scope, cost, and output tightly controlled: Scrum through fixed, reviewable sprint cycles, and Kanban through explicit limits on work in progress.

Teams that operate without sprint boundaries, approving "quick additions" informally throughout development, consistently finish over budget. The overhead of a structured sprint process is far smaller than the cost of the scope creep it prevents.

Optimize Cloud Costs from Day One

Infrastructure cost control begins at architecture selection, not billing review. Cloud providers such as Amazon Web Services, Microsoft Azure, and Google Cloud Platform offer highly scalable infrastructure, but without cost governance, usage can expand rapidly beyond initial estimates.

Cloud infrastructure consistently ranks among the top recurring cost drivers for scaling digital products, particularly in early growth stages where usage patterns are unpredictable.

Decisions made during MVP infrastructure design without FinOps discipline regularly produce bills that exceed initial projections by 2-3× at the growth stage.

Leverage AI-Assisted Development Tools

AI-assisted development tools like GitHub Copilot, Cursor, and similar code generation frameworks reduce boilerplate engineering time by 10 to 20% for experienced teams that have integrated them effectively into their workflow. This translates directly to fewer billable hours on standard implementation tasks.

AI tooling accelerates repetitive code patterns, not architecture decisions, system design, or QA judgment. Teams that use these tools for what they do well (code completion, test generation, and documentation drafting) realize the efficiency gains without compromising quality on the decisions that require human expertise.

MVP Development Cost Trends in 2026

The cost environment for MVP development in 2026 is shaped by three converging forces: AI integration demands, compliance maturation, and infrastructure cost normalization.

AI Features Are Raising and Lowering Costs Simultaneously

Products that integrate AI capabilities (recommendation engines, natural language interfaces, and predictive features) carry an AI integration premium of 15 to 30% above baseline development cost. Model API costs, fine-tuning infrastructure, and AI-specific QA cycles add scope that did not exist in prior MVP budgets.

Simultaneously, AI-assisted development tooling is reducing engineering time for standard development tasks by 10 to 20% for teams that have adopted these tools effectively. The net effect is cost-neutral to slightly favorable for non-AI products and cost-additive for AI-native ones.
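The two opposing effects can be combined in a rough calculation. The sketch below is illustrative only: the $100,000 baseline is hypothetical, and `standard_share` (the fraction of the work that counts as standard tasks and therefore benefits from tooling) is an assumption introduced here, since the savings apply to standard tasks rather than to the whole budget.

```python
def net_cost(baseline: float, ai_premium: float,
             tooling_saving: float, standard_share: float) -> float:
    """Baseline cost adjusted for an AI feature premium and tooling savings.

    ai_premium      fraction added for AI features (0 for non-AI products)
    tooling_saving  fraction of time saved on standard tasks
    standard_share  ASSUMED fraction of the work that is standard tasks
    """
    premium = baseline * ai_premium
    saving = baseline * standard_share * tooling_saving
    return round(baseline + premium - saving, 2)


# Hypothetical $100,000 baseline; half the work assumed to be standard tasks.
non_ai = net_cost(100_000, ai_premium=0.0,
                  tooling_saving=0.10, standard_share=0.5)
ai_native = net_cost(100_000, ai_premium=0.15,
                     tooling_saving=0.10, standard_share=0.5)

print(f"Non-AI product:    ${non_ai:,.0f}")
print(f"AI-native product: ${ai_native:,.0f}")
```

With the low end of both ranges, the non-AI product comes in slightly under baseline while the AI-native product remains above it, which matches the direction described above. Different assumptions about `standard_share` shift the magnitudes but not the overall pattern.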

Compliance Costs Are Rising Across Verticals

Regulatory frameworks for data privacy, AI transparency, and digital product accountability are maturing globally. Products operating in the EU, healthcare, FinTech, or enterprise SaaS verticals face compliance costs in 2026 that were either absent or voluntary in 2022 to 2024.

GDPR enforcement, the EU AI Act, and sector-specific data sovereignty requirements are moving from advisory to mandatory, with enforcement actions that make non-compliance financially material. MVP budgets in regulated verticals now routinely include $10,000 to $40,000 in compliance-specific development scope.

FinOps and Observability Are Becoming Standard

The practice of FinOps (active cloud cost governance) is now a standard operational expectation for investor-backed products. MVPs that launch without cost monitoring and alerting infrastructure generate unpredictable infrastructure bills that create financial uncertainty at the worst possible time.

Observability tooling (distributed tracing, error tracking, and performance monitoring) has similarly moved from a post-launch concern to an MVP-stage requirement in engineering teams with experienced technical leadership. These tools add $2,000 to $8,000 to MVP infrastructure cost but prevent the reactive incident response cycles that cost significantly more.
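At MVP scale, cost alerting does not need to be elaborate. The sketch below projects month-end spend from spend to date and compares it with a monthly budget. It is a simplified illustration that assumes roughly even daily spend; the function names, the 80% warning threshold, and the dollar amounts are hypothetical, and in practice the spend figure would come from your cloud provider's billing data.

```python
def projected_month_end(spend_to_date: float, day_of_month: int,
                        days_in_month: int = 30) -> float:
    """Linear projection of month-end spend from the daily average so far."""
    return spend_to_date / day_of_month * days_in_month


def alert_level(spend_to_date: float, day_of_month: int,
                monthly_budget: float, days_in_month: int = 30) -> str:
    """Classify projected spend against the budget."""
    projected = projected_month_end(spend_to_date, day_of_month, days_in_month)
    ratio = projected / monthly_budget
    if ratio >= 1.0:
        return "over_budget"
    if ratio >= 0.8:  # hypothetical warning threshold
        return "warning"
    return "ok"


# Hypothetical $3,000 monthly budget, checked on day 10 of the month.
for spend in (500, 900, 1_200):
    print(f"${spend:,} spent by day 10 -> {alert_level(spend, 10, 3_000)}")
```

A check like this, run daily, turns an end-of-month surprise into a mid-month decision while there is still time to adjust usage or scope.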

Conclusion

An MVP is a capital-efficient instrument for collecting the evidence required to make the next product decision with confidence. The cost of building one correctly (scoped to the hypothesis, delivered by a structured team, with realistic post-launch planning) is not the number most founders start with.

It is the number reached after systematically accounting for every variable covered in this guide. Strategic MVP budgeting does not minimize cost. It allocates cost deliberately toward evidence generation, technical sustainability, and the iteration capacity required to act on what users tell you.

Founders who treat MVP investment as a validation budget rather than a development expense to be minimized consistently make better product decisions faster.