What Is Vendor Lock-In in Cloud Computing and How to Break Free

What is Vendor Lock-In?

Vendor lock-in in cloud computing occurs when an organization becomes highly dependent on a single cloud provider’s ecosystem, making it difficult, costly, or impractical to switch to another provider.

This dependency can develop across multiple layers, including technology, operations, and cost structures.

Applications often rely on tightly integrated, provider-specific services such as proprietary databases, messaging systems, serverless platforms, machine learning tools, and monitoring solutions.

While these services accelerate development, they also create coupling with the provider’s architecture.

In this article, I explain what vendor lock-in means in cloud computing, why it happens, and how organizations can reduce or avoid it through thoughtful architecture and planning.

What Causes Vendor Lock-In?

Vendor lock-in is rarely a one-off technical inconvenience. It is usually the result of consistent patterns: relying heavily on proprietary APIs, cloud-specific services, or tightly coupled tooling without fully accounting for long-term portability.

Most organizations, including ours, have integrated third-party services extensively, especially over the last few years.

What often begins as a practical way to move faster using managed databases, serverless functions, or platform-native tooling can slowly turn into deep dependency.

At this point, it’s not always about necessity. It’s also shaped by ecosystem maturity and developer convenience, where adopting vendor-specific solutions becomes the default rather than a consciously evaluated trade-off.

Several common factors contribute to this situation.

Use of Proprietary Cloud Services

Many cloud platforms offer services that exist only within their ecosystem.

Examples include proprietary database engines, event processing frameworks, serverless runtimes, and hybrid architectures that incorporate vendor-specific components.

These tools can improve development speed because they remove infrastructure management tasks. Engineers can focus on application logic instead of maintaining servers.

The challenge appears later. Applications written around provider-specific services cannot easily run elsewhere. Migrating them often requires replacing those services with alternatives supported by other platforms.

Deep Integration With Platform Ecosystems

Cloud providers design the services in their ecosystems to integrate closely with each other. Identity systems connect with monitoring services, networking tools integrate with compute platforms, and storage systems integrate with analytics engines.

This integration improves operational efficiency. Teams can build complete systems without assembling many independent components.

However, the deeper an application integrates with one ecosystem, the harder it becomes to detach from it.

Data Migration Challenges

Data represents one of the most significant barriers to switching cloud providers.

Large organizations store petabytes of information in cloud storage systems or managed databases. Moving this data to another platform can require significant time and network bandwidth.

Cloud providers sometimes charge data egress fees when information leaves their infrastructure. These costs can grow quickly for large datasets.
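As a rough illustration of how these fees scale, the sketch below multiplies dataset size by a flat per-gigabyte rate. The rate used here is a placeholder assumption, not any provider's actual price; real egress pricing is often tiered and varies by region and destination.

```python
def egress_cost_usd(dataset_tb: float, rate_per_gb: float) -> float:
    """Estimate a flat-rate egress charge for moving `dataset_tb` decimal terabytes."""
    gigabytes = dataset_tb * 1000  # 1 TB = 1000 GB (decimal units)
    return gigabytes * rate_per_gb


# Hypothetical rate chosen only for illustration; check your provider's price list.
ASSUMED_RATE_PER_GB = 0.09

for size_tb in (1, 100, 1000):
    cost = egress_cost_usd(size_tb, ASSUMED_RATE_PER_GB)
    print(f"{size_tb:>5} TB -> ${cost:,.0f}")
```

Under this assumed rate, a petabyte (1000 TB) would cost roughly 90,000 USD to move out, which is why egress fees deserve a line of their own in any migration budget.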

Migration complexity increases when data formats or storage structures depend on provider-specific features.

Operational and Infrastructure Dependencies

Infrastructure configuration often becomes tightly coupled to a provider’s environment. Developers define automation scripts, networking rules, and deployment pipelines based on that platform’s architecture.

For instance, a company may rely on a provider’s identity management system, network security policies, or monitoring framework.

Over time, these dependencies accumulate, making the system increasingly aligned with a single ecosystem.

Rebuilding them on another platform isn’t just a migration task; it requires rethinking and reengineering core infrastructure components.

Operational familiarity reinforces this further. Teams build expertise around specific tools and workflows tied to one provider, which makes shifting away not only technically complex but also operationally disruptive.

Types of Vendor Lock-In in Cloud Environments

Vendor lock-in does not occur in a single form. It can affect multiple layers of a cloud architecture.

Infrastructure Lock-In

Infrastructure lock-in happens when applications rely on provider-specific compute, storage, or networking systems.

For example, a company might build infrastructure automation using templates designed specifically for one cloud provider’s environment. If the organization attempts to move to another platform, these templates must be rewritten.

Networking configurations can also introduce infrastructure dependency, especially when applications rely on proprietary load balancing systems or networking constructs.

Platform Lock-In

Platform lock-in occurs when applications depend on managed services that exist only within a particular cloud ecosystem.

Instead of building with portable, standardized components, teams rely on tightly integrated offerings such as proprietary serverless platforms, provider-specific database engines, and specialized machine learning services.

While these services significantly accelerate development and reduce operational overhead, they often do not have direct equivalents in other cloud environments.

As a result, applications become closely coupled with the provider’s platform.

When organizations attempt to migrate, this dependency creates substantial challenges.

Entire components may need to be redesigned or replaced to work with alternative services, increasing both the complexity and cost of migration.

Data Lock-In

Data lock-in occurs when large datasets reside in systems that are difficult to export or migrate.

This situation can arise when data is stored in proprietary database formats or integrated with provider-specific analytics services.

The challenge grows as datasets increase in size. Moving large volumes of data across networks can take days or weeks.
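The "days or weeks" figure can be checked with simple arithmetic. The sketch below is a back-of-the-envelope estimate, assuming a dedicated link and a hypothetical efficiency factor (0.7 by default) to account for protocol overhead and contention; neither number comes from a specific provider.

```python
def transfer_days(dataset_tb: float, link_gbps: float, efficiency: float = 0.7) -> float:
    """Days needed to move `dataset_tb` decimal terabytes over a `link_gbps` link.

    `efficiency` is the assumed fraction of the nominal link speed that is
    actually achieved end to end.
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    bits = dataset_tb * 1e12 * 8                      # terabytes -> bits
    effective_bits_per_second = link_gbps * 1e9 * efficiency
    seconds = bits / effective_bits_per_second
    return seconds / 86_400                           # seconds per day


for size_tb, gbps in ((100, 10), (1000, 10), (1000, 1)):
    print(f"{size_tb} TB over {gbps} Gbps: {transfer_days(size_tb, gbps):.1f} days")
```

With these assumptions, one petabyte takes about 13 days over a 10 Gbps link and about 132 days over a 1 Gbps link, so the available bandwidth often decides whether a network transfer is practical at all.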

Tooling and Workflow Lock-In

Development tools and operational workflows can also create strong dependency on a specific cloud provider.

Organizations often adopt provider-specific solutions for application deployment, monitoring and logging, infrastructure automation, and identity management, as these tools integrate seamlessly within the provider’s ecosystem and simplify day-to-day operations.

Over time, development pipelines, CI/CD processes, and operational practices become tightly aligned with these tools.

This deep integration makes switching providers more complex, as teams must rebuild or significantly modify their workflows to align with a new environment.

The effort required to reconfigure tooling and retrain teams can become a major barrier to migration.

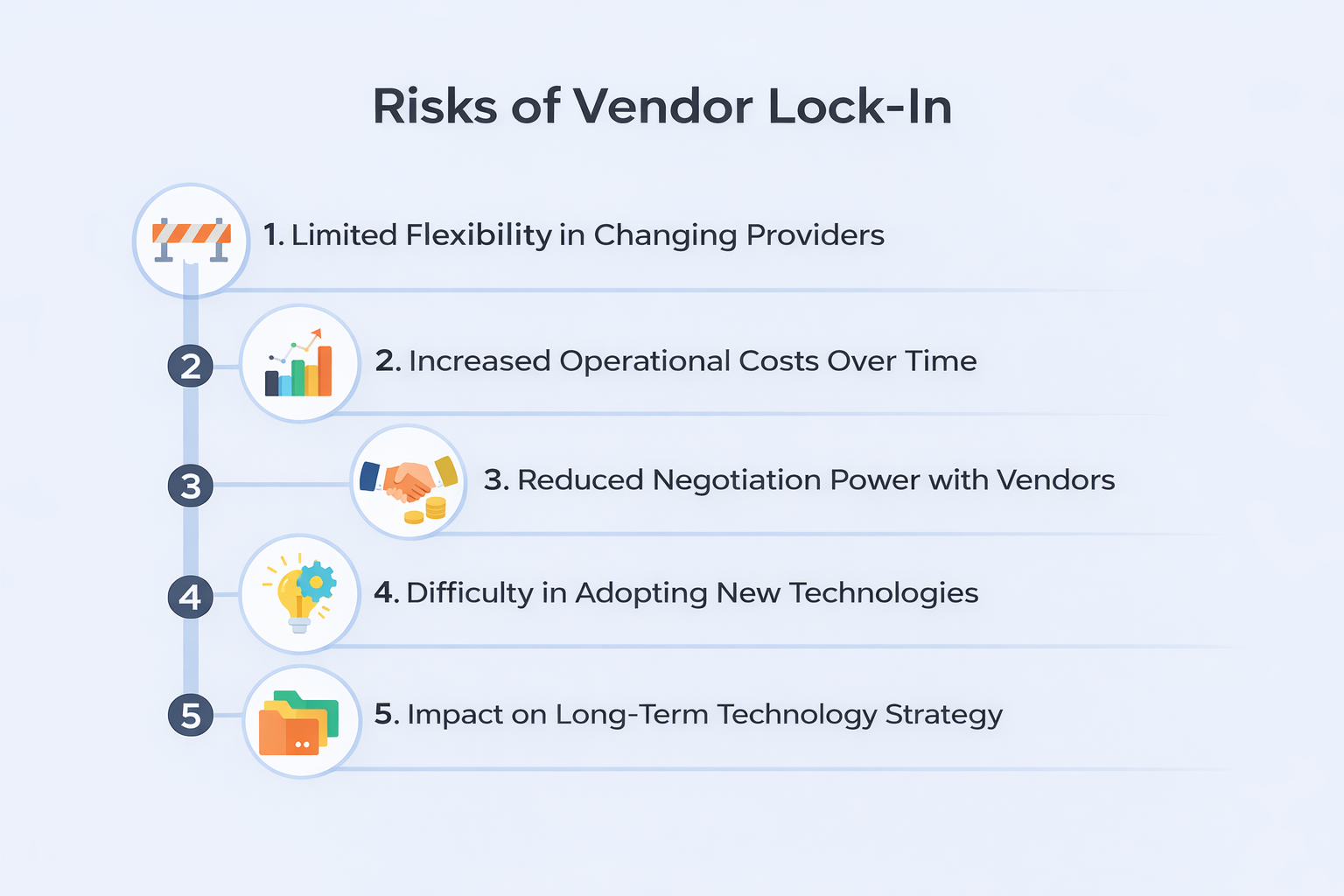

What are the Pitfalls of Vendor Lock-In?

Vendor lock-in introduces a range of operational risks for organizations that depend heavily on a single cloud provider.

These risks can directly impact flexibility, cost efficiency, and long-term technology decisions.

Limited Flexibility in Changing Providers

One of the most significant risks is reduced flexibility. When systems are deeply integrated with a specific cloud platform, migrating workloads to another provider becomes complex and time-consuming.

If the vendor changes pricing models, service availability, or policies, organizations may struggle to adapt quickly due to high switching barriers.

Increased Operational Costs Over Time

Vendor lock-in can lead to rising operational costs. Without the ability to easily move to alternative providers, organizations may be forced to accept higher pricing or less optimal service terms.

Additionally, the engineering effort required to redesign or migrate systems can be substantial, further increasing costs.

Reduced Negotiation Power with Vendors

When an organization is heavily dependent on a single provider, its bargaining position weakens. Vendors are less likely to offer competitive pricing or favorable terms because they know switching providers would involve significant effort and risk for the customer.

Difficulty in Adopting New Technologies

Cloud providers frequently release new tools, services, and innovations. However, organizations locked into a single ecosystem may find it difficult to adopt better or more suitable technologies offered by other platforms.

This limitation can slow innovation and prevent teams from leveraging best-in-class solutions.

Impact on Long-Term Technology Strategy

Vendor lock-in can constrain long-term planning. Organizations that do not prioritize portability and interoperability during system design may face challenges when scaling, modernizing, or shifting infrastructure strategies.

Over time, this can limit growth, reduce competitiveness, and make it harder to respond to evolving business needs.

Vendor lock-in is not just a technical limitation; it’s a strategic risk that can restrict growth, increase costs, and limit innovation over time. Organizations that prioritize flexibility and portability early are better positioned to adapt and stay competitive in a rapidly evolving cloud landscape.

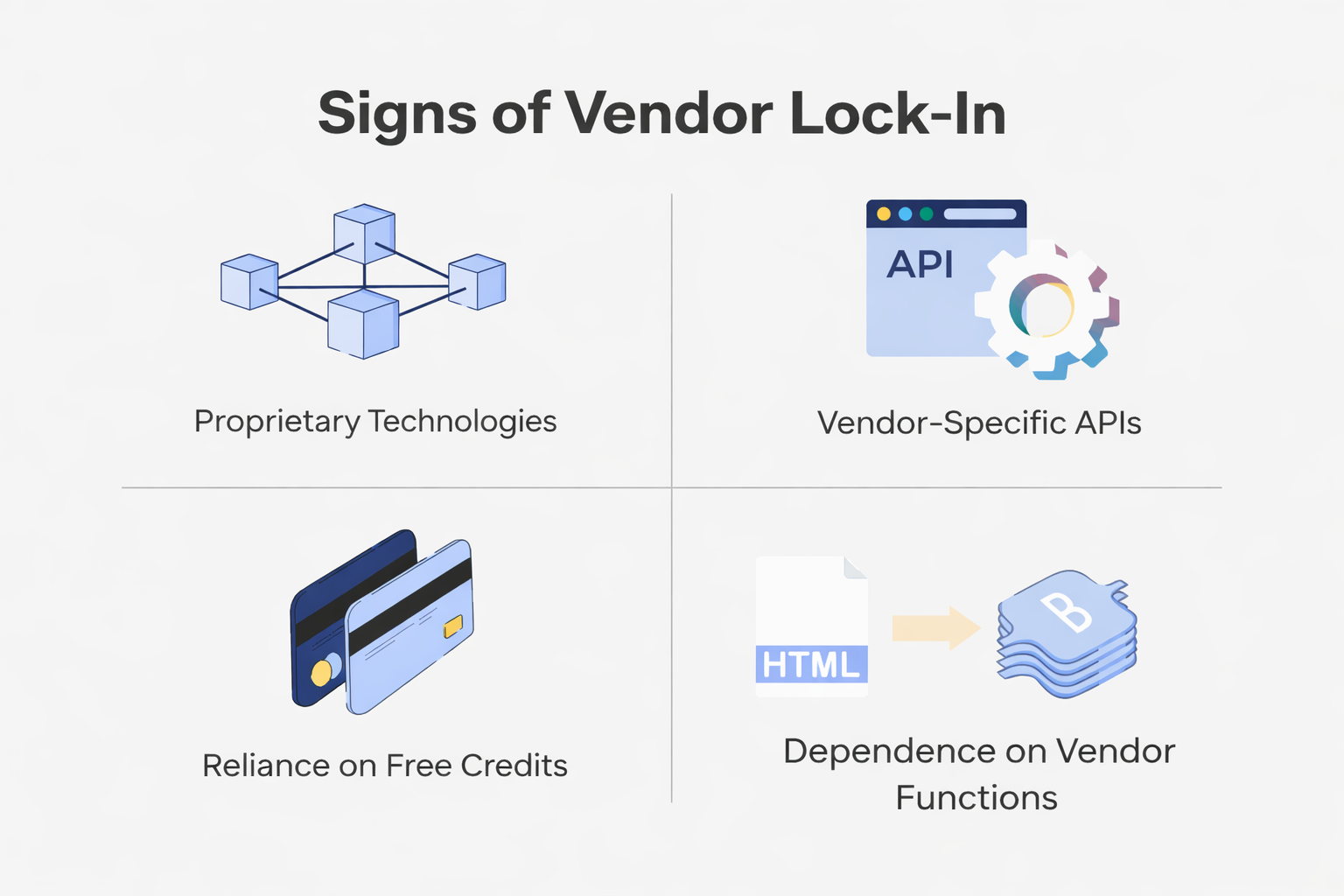

Signs That an Organization Is Experiencing Vendor Lock-In

Recognizing vendor lock-in early is critical. Many organizations only become aware of the issue when migration becomes necessary, but by then the cost and complexity are already high. The following indicators help identify increasing dependence on a single cloud provider:

Heavy reliance on provider-specific APIs

When applications are deeply integrated with proprietary APIs, replacing or migrating those components becomes difficult. These APIs often lack direct equivalents in other environments, forcing teams to rewrite significant portions of the codebase during migration.

Applications tightly integrated with proprietary services

Using managed services such as provider-specific databases, serverless platforms, or AI tools can create strong coupling. The more core business logic depends on these services, the harder it becomes to replicate functionality elsewhere.

Complex and costly migration processes

If moving even a small workload requires extensive planning, re-architecture, or downtime, it is a clear signal of lock-in. High data transfer costs, incompatible service models, and a lack of standardization all contribute to this complexity.

Lack of portability across environments

Applications that cannot run consistently across different cloud providers or on-premise systems indicate poor portability. This often results from tightly coupled infrastructure configurations, non-standard tooling, or hardcoded dependencies.

These signs collectively highlight a growing dependency on a single platform. As reliance increases, flexibility decreases, making it harder for organizations to adapt to pricing changes, adopt new technologies, or meet evolving business requirements.

Early identification allows teams to take corrective action before lock-in becomes a critical constraint.
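The first sign, reliance on provider-specific APIs, can be measured roughly in a Python codebase by counting imports of cloud SDK packages. The sketch below is a heuristic rather than a complete audit: the prefix list covers only a few well-known SDK package names and is an assumption you would extend for your own stack.

```python
import ast
from collections import Counter

# Module prefixes of some widely used cloud SDKs for Python (not exhaustive).
PROVIDER_PREFIXES = ("boto3", "botocore", "google.cloud", "azure")


def provider_imports(source: str) -> Counter:
    """Count import statements in `source` that reference a known SDK prefix."""
    counts: Counter = Counter()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            modules = [node.module]
        else:
            continue
        for module in modules:
            for prefix in PROVIDER_PREFIXES:
                # Match the package itself or any of its submodules.
                if module == prefix or module.startswith(prefix + "."):
                    counts[prefix] += 1
    return counts


sample = """
import boto3
import json
from google.cloud import storage
from azure.storage.blob import BlobServiceClient
"""
print(provider_imports(sample))
```

Running a check like this across a repository over time gives a simple trend line: if the counts keep climbing in core business modules rather than in a thin integration layer, coupling is growing.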

Strategies to Avoid Vendor Lock-In

Avoiding vendor lock-in starts with deliberate architectural planning. Organizations that prioritize portability and flexibility early in the development lifecycle can significantly reduce the complexity and cost of future migrations.

Designing Cloud-Agnostic Architectures

A cloud-agnostic approach focuses on building systems that can operate across multiple cloud providers. Instead of tightly coupling applications to a single platform, teams use portable services and abstraction layers, making it easier to shift workloads when needed.

Using Open Standards and Widely Supported Technologies

Adopting open standards and widely supported frameworks improves interoperability between environments. Technologies such as open-source databases, containerization platforms, and message brokers can run consistently across different cloud providers, reducing dependency on any one ecosystem.

Avoiding Unnecessary Dependence on Proprietary Services

While proprietary services can offer powerful features, over-reliance on them increases switching complexity. Organizations should evaluate whether these services provide enough strategic value to justify the potential lock-in, and avoid them when equivalent portable alternatives exist.

Maintaining Infrastructure Portability

Infrastructure portability ensures that deployment processes remain consistent across platforms. Using infrastructure-as-code tools that support multiple cloud providers allows teams to replicate environments easily and migrate workloads with minimal disruption.

Planning Early to Reduce Migration Challenges

Incorporating portability considerations during the design phase helps avoid costly rework later. Early planning enables better architectural decisions, reduces technical debt, and ensures that systems remain adaptable as business and technology requirements evolve.

Avoiding vendor lock-in is ultimately about making intentional design choices that balance innovation with flexibility. Organizations that invest in portability early gain greater control over their infrastructure and are better prepared to adapt to changing technologies and market conditions.

Using Containers to Improve Portability

Containerization has emerged as one of the most effective strategies for improving application portability across cloud environments. By standardizing how applications are packaged and deployed, containers enable consistent execution regardless of the underlying infrastructure.

Containerization Technologies

Technologies like Docker and Kubernetes play a central role in modern application deployment. Docker is used to create lightweight, portable containers, while Kubernetes provides orchestration capabilities to manage, scale, and deploy these containers across different environments.

Packaging Applications with Dependencies

Containers bundle applications along with their runtime dependencies, libraries, and configuration settings. This eliminates inconsistencies between development, testing, and production environments, ensuring that applications behave the same way everywhere they run.

Running Containers Across Multiple Environments

Containers can run seamlessly across public clouds, private clouds, and on-premise infrastructure. This flexibility allows organizations to move workloads between providers without needing to redesign the application or its environment.

Simplifying Application Migration

Without containers, applications tied to provider-specific virtual machines require significant effort to migrate, including recreating environments and configurations.

With containers orchestrated by Kubernetes, the same container images can be deployed across different platforms with minimal changes, significantly reducing migration complexity.
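One common way to keep the image itself identical across platforms is to have the application read environment-specific settings from environment variables at startup, so only the deployment configuration changes per provider. The sketch below illustrates that idea; the variable names and the `Settings` fields are hypothetical.

```python
import os
from dataclasses import dataclass
from typing import Mapping

# Hypothetical variable names; the point is that nothing provider-specific
# is baked into the container image.
REQUIRED = ("APP_DATABASE_URL", "APP_OBJECT_STORE_ENDPOINT")


@dataclass(frozen=True)
class Settings:
    database_url: str
    object_store_endpoint: str
    log_level: str = "INFO"


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Build settings from an environment mapping, failing fast on gaps."""
    missing = [name for name in REQUIRED if name not in env]
    if missing:
        raise RuntimeError(f"missing required settings: {', '.join(missing)}")
    return Settings(
        database_url=env["APP_DATABASE_URL"],
        object_store_endpoint=env["APP_OBJECT_STORE_ENDPOINT"],
        log_level=env.get("APP_LOG_LEVEL", "INFO"),
    )


# Example with an explicit mapping instead of the real process environment.
example = load_settings({
    "APP_DATABASE_URL": "postgresql://db.internal:5432/app",
    "APP_OBJECT_STORE_ENDPOINT": "https://objects.internal",
})
print(example)
```

Passing the mapping as a parameter keeps the function easy to test, and the same image can then be started on any platform that can inject environment variables into a container.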

Reducing Dependency on Provider-Specific Infrastructure

Container orchestration platforms abstract the underlying infrastructure, minimizing reliance on provider-specific compute services. This abstraction allows teams to maintain greater control over their deployment strategy and avoid deep vendor dependencies.

Containerization provides a practical and scalable way to achieve true application portability. By decoupling applications from infrastructure, organizations can move faster, reduce risk, and maintain flexibility across evolving cloud environments.

Multi-Cloud and Hybrid Cloud Strategies

To reduce long-term dependency on a single provider, organizations often adopt architectural strategies that prioritize flexibility and control. Two commonly used approaches are multi-cloud and hybrid cloud.

What is a Multi-Cloud Strategy?

It refers to using services from multiple cloud providers within the same architecture. Workloads are distributed across platforms to reduce dependency on any single provider and to choose services based on specific needs.

What is a Hybrid Cloud Strategy?

It refers to combining on-premise infrastructure with cloud environments. Certain workloads or data remain in-house, while others run in the cloud, allowing organizations to maintain control over critical systems.

Data Portability and Migration Planning

Data management is a critical factor in avoiding vendor lock-in. Organizations that treat data portability as a core design requirement can significantly reduce the complexity and risk associated with moving between cloud environments.

Using Standardized Data Formats

Storing data in standardized and widely supported formats improves interoperability across platforms. Open formats make it easier to transfer, process, and reuse data without being tightly coupled to a specific provider’s ecosystem.
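As a small illustration, the sketch below exports the same records to two open, text-based formats, JSON Lines and CSV, using only the Python standard library. The record fields are made up for the example; for large analytical datasets, teams often choose other open formats instead.

```python
import csv
import io
import json


def to_json_lines(records: list[dict]) -> str:
    """Serialize records as JSON Lines: one JSON object per line."""
    return "".join(json.dumps(record, sort_keys=True) + "\n" for record in records)


def to_csv(records: list[dict], fieldnames: list[str]) -> str:
    """Serialize records as CSV with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


# Hypothetical records standing in for an exported table.
records = [{"id": 1, "name": "Ada"}, {"id": 2, "name": "Grace"}]

print(to_json_lines(records), end="")
print(to_csv(records, ["id", "name"]), end="")
```

Because both outputs are plain text in documented formats, practically any database or analytics tool can load them, which is the property that keeps the data independent of the system that produced it.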

Maintaining Reliable Backup Strategies

Regular backups are essential for ensuring data safety and portability. Storing backups outside the primary cloud provider creates an additional layer of protection and enables smoother recovery or migration if needed.
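A backup stored elsewhere is only useful if it is known to be intact. The sketch below copies a file to a second location and compares SHA-256 checksums. Local paths stand in for the two storage locations here; in practice the destination would be another provider or on-premise storage, reached with whatever transfer tool you use.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large backups do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def copy_and_verify(source: Path, destination: Path) -> str:
    """Copy `source` to `destination` and confirm both have the same checksum."""
    destination.parent.mkdir(parents=True, exist_ok=True)
    shutil.copyfile(source, destination)
    expected, actual = sha256_of(source), sha256_of(destination)
    if expected != actual:
        raise IOError(f"checksum mismatch for {destination}")
    return actual
```

Recording the returned checksum next to the backup also lets you re-verify it later, before you rely on it for a recovery or a migration.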

Planning Data Migration Processes Early

Early migration planning helps organizations understand the practical challenges involved in moving data. This includes estimating transfer times, evaluating network bandwidth requirements, and accounting for potential costs associated with large-scale data movement.

Reducing Migration Barriers Through Better Data Management

When data is structured, portable, and well-managed, it becomes significantly easier to migrate systems between environments. Proper data practices minimize downtime, reduce risk, and eliminate major technical obstacles during transitions.

Data portability is not just a technical consideration; it is a strategic necessity for long-term flexibility. Organizations that proactively manage and plan their data are better equipped to adapt, scale, and transition across cloud platforms without disruption.

Design Principles for Cloud-Neutral Architectures

Cloud-neutral architecture focuses on decoupling application logic from provider-specific infrastructure.

By reducing direct dependencies on any single cloud platform, organizations can improve flexibility and make it easier to move workloads as requirements evolve.

Use Abstraction Layers

One of the most effective techniques is implementing abstraction layers between applications and infrastructure services. Instead of directly interacting with provider-specific APIs, applications communicate with intermediate layers that can integrate with multiple cloud providers, enabling easier switching and reduced dependency.
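A minimal sketch of such a layer is shown below, assuming a simple object-storage use case. The `ObjectStore` interface, both implementations, and `save_report` are hypothetical names for illustration; a real adapter for a cloud provider would wrap that provider's SDK behind the same two methods.

```python
from pathlib import Path
from typing import Protocol


class ObjectStore(Protocol):
    """The only storage interface the application code is allowed to see."""

    def put(self, key: str, data: bytes) -> None: ...
    def get(self, key: str) -> bytes: ...


class InMemoryStore:
    """Adapter useful for tests and local development."""

    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]


class LocalDirectoryStore:
    """Adapter that keeps objects as files under a root directory."""

    def __init__(self, root: Path) -> None:
        self._root = root

    def put(self, key: str, data: bytes) -> None:
        path = self._root / key
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_bytes(data)

    def get(self, key: str) -> bytes:
        return (self._root / key).read_bytes()


def save_report(store: ObjectStore, report_id: str, body: str) -> str:
    """Application logic: depends on the interface, not on any provider."""
    key = f"reports/{report_id}.txt"
    store.put(key, body.encode("utf-8"))
    return key


store = InMemoryStore()
key = save_report(store, "2024-q1", "quarterly summary")
print(key, store.get(key))
```

Switching providers then means writing one new adapter class rather than touching every call site. The trade-off is that the interface can only expose features every backend supports, so provider-specific capabilities are deliberately left out.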

Leverage API-Driven Integrations

API-driven design promotes the use of standardized interfaces for communication between components. By relying on consistent APIs rather than platform-specific integrations, applications become more portable and can operate across different environments with minimal changes.

Build Loosely Coupled Service Architectures

Loosely coupled systems ensure that individual components interact through well-defined interfaces. This design allows services to be developed, deployed, or migrated independently without impacting the entire system, improving both flexibility and resilience.
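The sketch below illustrates the idea with a tiny in-process event bus: the code that places an order publishes an event and knows nothing about which components react to it. The `EventBus` class, topic name, and handlers are hypothetical, and the bus is only a stand-in for whatever message broker a real system would use.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class EventBus:
    """In-process publish/subscribe, standing in for a real message broker."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._handlers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        # Copy the handler list so subscribing during delivery is safe.
        for handler in list(self._handlers.get(topic, [])):
            handler(event)


def place_order(bus: EventBus, order_id: str, total: float) -> None:
    """The order component only knows the topic name and the event shape."""
    bus.publish("order.placed", {"order_id": order_id, "total": total})


invoices: list[tuple[str, float]] = []
shipments: list[str] = []

bus = EventBus()
bus.subscribe("order.placed", lambda e: invoices.append((e["order_id"], e["total"])))
bus.subscribe("order.placed", lambda e: shipments.append(e["order_id"]))

place_order(bus, "A-100", 42.5)
print(invoices, shipments)
```

Because billing and shipping depend only on the event contract, either one can be rewritten, redeployed, or moved to another environment without changing the order component.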

Use Portable Infrastructure Automation Tools

Infrastructure-as-code tools like Terraform enable teams to define and manage infrastructure across multiple cloud providers using consistent configuration patterns. This approach simplifies deployment and ensures that environments can be replicated or migrated with minimal effort.

Improve Portability Through Design Choices

By combining abstraction, standardized communication, and automation, organizations can create systems that are not tightly bound to a single provider.

These architectural choices make it easier to adapt to new technologies, scale across environments, and respond to changing business needs.

Designing cloud-neutral architectures requires upfront effort, but it pays off in long-term flexibility and reduced risk. Organizations that adopt these practices gain greater control over their infrastructure and can evolve their systems without being constrained by vendor-specific limitations.

In practice, building a cloud setup that remains flexible over time, whether developed internally or with external support, requires careful consideration. The right approach isn’t always obvious, especially when decisions involve trade-offs between speed, control, and long-term dependency.

For teams without a deep technical background, evaluating these choices can become even more challenging. In such cases, getting input from experienced professionals can help in understanding what aligns best with your requirements.

To make this process more approachable, we’ve put together a curated list of reliable cloud service providers. The list includes companies that offer both consulting and implementation support, helping you evaluate options and move forward with greater clarity.

Challenges When Breaking Vendor Lock-In

Breaking vendor lock-in is often complex and resource-intensive, especially when systems are deeply integrated with a specific cloud provider. Organizations must approach this process with realistic expectations around time, cost, and operational impact.

High Migration Costs

Migration projects require significant investment in engineering effort, infrastructure changes, and testing. These costs can quickly escalate, particularly for large-scale systems, making it difficult to justify migration without clear long-term benefits.

Application Refactoring Requirements

Applications built using proprietary cloud services often need to be redesigned or refactored. Replacing provider-specific components with portable alternatives can be time-consuming and may introduce additional complexity into the system.

Data Transfer Complexity

Transferring large volumes of data between environments is a major challenge. Organizations must consider bandwidth limitations, transfer costs, data integrity, and potential downtime, all of which require careful planning and execution.

Operational and Skillset Changes

Switching platforms often requires teams to adopt new tools, workflows, and infrastructure practices. This may involve training, process adjustments, and temporary productivity slowdowns as teams adapt to new environments.

Balancing Business and Engineering Priorities

Migration efforts consume valuable time and resources that could otherwise be allocated to product development or innovation. Organizations must carefully balance the need to reduce lock-in with ongoing business objectives.

The Need for Long-Term Planning

Because of these challenges, addressing vendor lock-in should not be treated as a reactive measure. Incorporating portability and flexibility into system design from the beginning helps avoid costly and complex migrations later.

Breaking vendor lock-in is rarely quick or easy; it requires strategic planning, technical effort, and organizational alignment. Companies that proactively address these risks early are far better positioned to adapt without disruption when change becomes necessary.

Summing Up

Vendor lock-in occurs when applications, data, and operational workflows become tightly dependent on a specific cloud provider.

This dependence can limit flexibility, increase operational costs, and complicate technology adoption in the future.

Organizations can reduce these risks through careful architecture decisions. Portable infrastructure, containerized applications, cloud-neutral design patterns, and multi-cloud strategies help maintain flexibility across environments.

Planning for portability early allows companies to benefit from cloud computing while maintaining control over their technology direction.