How to Utilise Denial Management Automation in Healthcare Apps

Claim denials are no longer a billing department inconvenience. They are a strategic financial threat, and the numbers have been quietly screaming that for years.

Before getting into what denial automation is or how it works, it's worth understanding the scale of what's actually happening on the ground.

11.8%: initial denial rate in 2024, up from 10.2% just a few years prior.

41%: share of U.S. providers now reporting that at least 1 in 10 claims gets denied.

$57.23: administrative cost per denied claim in 2023, up from $43.84 in 2022.

4.8%: average net revenue lost to denials per hospital system annually.

Let's put that 4.8% figure in context. For a hospital system generating $500 million in annual patient revenue, that's $24 million walking out the door, not from bad patient outcomes or operational failure, but from paperwork.

From a code entered wrong, a prior authorization missed by 24 hours, or a payer's AI bot silently rejecting a claim it was algorithmically trained to deny.

The Advisory Board estimates that data-driven denial prevention alone can recover up to $10 million per $1 billion in patient revenue, money that most hospitals are currently writing off.

Here's what makes this particularly frustrating:

More than half of appealed denials are ultimately reversed. That means a substantial portion of rejections from payers are not clinically justified. They're administrative, procedural, or the result of payer-side AI making the math work in the insurer's favor.

Payers have been running automated denial engines for years. Insurers leveraged AI to reject claims at scale long before providers even began budgeting for the countermeasure.

"We've been invaded. Payers have been using this technology for years to fight against hospitals and increase our denials. Now, healthcare systems are getting smarter."

- Sheldon Pink, FHFMA, Former VP Revenue Cycle, Luminis Health

What Do We Actually Mean by a Denial Automation System?

The term "denial automation" is used loosely in healthcare circles, sometimes to mean a bot that checks claim status, sometimes to mean a full predictive AI engine.

Your intent has to be clear, because the technology you need depends entirely on where your revenue cycle is bleeding.

At its most fundamental level, denial automation in healthcare is the use of software, ranging from rule-based bots to machine learning models, to systematically prevent, detect, categorize, route, appeal, and learn from insurance claim denials, with minimal or zero manual intervention at each stage.

It is not a single product. It is a capability layer that can live across your entire revenue cycle from the moment a patient walks in the door (or schedules an appointment) to the point a payer issues an EOB.

Technology Stack for Denial Automation

Layer 1: Robotic Process Automation (RPA)

RPA bots mimic the mouse-and-keyboard actions of a human billing specialist: logging into payer portals, pulling claim status, entering patient demographic data, submitting prior authorization requests, and retrieving EOBs, all at machine speed and with no fatigue.

RPA is rules-based: if the claim has condition X, perform action Y. It doesn't learn; it executes.

This is the entry-level layer, and it alone can handle 60–80% of the repetitive transactional work in a typical billing workflow.
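The "condition X, action Y" behaviour can be pictured as a small rule table. What follows is only an illustrative sketch: the claim fields, the two rules, and the action labels are hypothetical and do not come from any RPA product.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Rule:
    name: str
    condition: Callable[[dict], bool]  # "condition X"
    action: str                        # "action Y", a label a bot would act on

# Hypothetical rules; a real deployment would maintain these per payer.
RULES = [
    Rule("missing_auth",
         lambda c: bool(c.get("requires_auth")) and not c.get("auth_number"),
         "request_prior_authorization"),
    Rule("stale_status",
         lambda c: c.get("days_since_submission", 0) > 30,
         "check_payer_portal_status"),
]

def actions_for(claim: dict) -> list:
    """Return every action whose condition matches. Nothing is learned."""
    return [rule.action for rule in RULES if rule.condition(claim)]

claim = {"requires_auth": True, "auth_number": None, "days_since_submission": 45}
matched = actions_for(claim)
print(matched)
```

Because the rules are plain data, adding a payer-specific check means adding a row rather than changing the bot's control flow.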

Layer 2: Predictive Analytics and Machine Learning

This is where the system starts to "think." ML models trained on historical claims data can score each outgoing claim for denial probability before it's submitted.

They identify patterns that have historically led to rejection for a specific combination of CPT code, payer, patient profile, and DRG.

The model flags the claim so a human can correct it before it ever leaves the hospital.
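To make "scoring before submission" concrete, a smoothed historical denial frequency per payer and CPT code can serve as a naive baseline. This is a sketch under stated assumptions, not a trained model: the history records, the prior rate, and the prior weight are all made up, and a real ML model would replace the lookup.

```python
from collections import defaultdict

# Hypothetical history: (payer, cpt_code, was_denied)
HISTORY = [
    ("PAYER_A", "93458", True), ("PAYER_A", "93458", True),
    ("PAYER_A", "93458", False), ("PAYER_A", "99213", False),
    ("PAYER_B", "93458", False), ("PAYER_B", "93458", False),
]

def build_denial_rates(history, prior_rate=0.1, prior_weight=2.0):
    """Smoothed denial rate per (payer, CPT); the prior steadies sparse pairs."""
    counts = defaultdict(lambda: [0, 0])  # key -> [denied, total]
    for payer, cpt, denied in history:
        counts[(payer, cpt)][0] += int(denied)
        counts[(payer, cpt)][1] += 1
    return {
        key: (denied + prior_rate * prior_weight) / (total + prior_weight)
        for key, (denied, total) in counts.items()
    }

def denial_score(rates, payer, cpt, default=0.1):
    """Score an outgoing claim; unseen payer/CPT pairs get the default."""
    return rates.get((payer, cpt), default)

rates = build_denial_rates(HISTORY)
print(round(denial_score(rates, "PAYER_A", "93458"), 2))
```

A baseline like this is also a useful yardstick: a trained model that cannot beat it on held-out claims is not yet earning its complexity.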

Layer 3: Natural Language Processing and Generative AI

NLP systems can read an Explanation of Benefits (EOB) or a denial letter (both unstructured text), extract the denial reason code, cross-reference it against clinical documentation, and automatically draft an appeal.

Mayo Clinic, as documented by HFMA, now operates a bot that writes appeal letters. This is generative AI applied directly to recovery workflows, a capability that would have required a senior billing specialist's time just three years ago.

Key Distinction

Denial automation is not the same as clearinghouse claim scrubbing. A clearinghouse catches format errors before submission.

Denial automation operates across the entire lifecycle:

- Pre-service eligibility

- Pre-submission validation

- Post-denial routing

- Appeal generation

- Pattern-based prevention for future claims.

How Denial Automation Works Inside Real Revenue Cycle Workflows

Let's trace a claim from the moment a patient schedules an appointment to the moment payment posts and show exactly where automation intervenes at each stage.

Pre-Service

(Eligibility and Authorization Verification)

Before the patient even arrives, an RPA bot fires against the payer's portal or the 270/271 HIPAA transaction to verify active coverage, check for coordination of benefits issues, flag whether a referral is required, and determine whether the planned procedure needs prior authorization.

Historically this was done by front-desk staff who checked two, maybe three fields.

The bot checks everything, every time, and flags incomplete data for human correction before the visit.

This is where the most preventable denials are stopped.
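The pre-service checks can be sketched as a function over an already-parsed eligibility reply. This is an assumption-heavy illustration: the dictionary keys are hypothetical summary fields, not X12 271 segment names, and parsing the actual transaction is out of scope here.

```python
from datetime import date

def eligibility_flags(response: dict, service_date: date) -> list:
    """Return the issues staff should fix before the visit.

    `response` is a hypothetical, already-parsed summary of a 271 reply.
    """
    flags = []
    start = response.get("coverage_start")
    end = response.get("coverage_end")  # None means open-ended coverage
    if start is None or service_date < start or (end is not None and service_date > end):
        flags.append("coverage_not_active_on_service_date")
    if response.get("other_payers"):
        flags.append("coordination_of_benefits_review")
    if response.get("referral_required") and not response.get("referral_on_file"):
        flags.append("referral_missing")
    if response.get("prior_auth_required") and not response.get("auth_number"):
        flags.append("prior_auth_missing")
    return flags

reply = {
    "coverage_start": date(2024, 1, 1), "coverage_end": None,
    "other_payers": ["SECONDARY_PLAN"],
    "referral_required": False,
    "prior_auth_required": True, "auth_number": None,
}
flags = eligibility_flags(reply, date(2024, 6, 3))
print(flags)
```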

Pre-Submission

(Claim Scrubbing and Denial Probability Scoring)

After the encounter, the coding and billing team generates a claim.

Before submission, the automation layer runs the claim through:

a) Payer-specific rules validation, checking that the CPT/ICD-10 combination is valid under that payer's current policy,

b) A predictive ML model that scores denial risk based on historical patterns for this payer–procedure–patient combination,

c) Documentation completeness checks, ensuring that medical necessity documentation is attached where required.

Claims above a risk threshold are routed to a human reviewer. Clean claims go straight to submission.
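One way to picture how the three checks combine into a routing decision is the sketch below. The threshold value, the field names, and the set of valid payer/code combinations are hypothetical; the denial score is assumed to come from whatever predictive model is in use.

```python
RISK_THRESHOLD = 0.30  # hypothetical; tune to reviewer capacity

def route_claim(claim: dict, valid_combinations: set, denial_score: float):
    """Run checks (a), (b), and (c), then return ('submit' | 'human_review', reasons)."""
    reasons = []
    # (a) payer-specific rules: is this CPT/ICD-10 pair accepted by this payer?
    if (claim["payer"], claim["cpt"], claim["icd10"]) not in valid_combinations:
        reasons.append("code_pair_not_valid_for_payer")
    # (b) predictive score supplied by the ML model
    if denial_score >= RISK_THRESHOLD:
        reasons.append("high_denial_risk")
    # (c) documentation completeness
    if claim.get("needs_medical_necessity_docs") and not claim.get("attachments"):
        reasons.append("medical_necessity_docs_missing")
    return ("human_review" if reasons else "submit", reasons)

valid = {("PAYER_A", "93458", "I25.10")}
clean = {"payer": "PAYER_A", "cpt": "93458", "icd10": "I25.10",
         "needs_medical_necessity_docs": True, "attachments": ["cath_report.pdf"]}
clean_result = route_claim(clean, valid, denial_score=0.12)
risky_result = route_claim(clean, valid, denial_score=0.55)
print(clean_result, risky_result)
```

Returning the reasons alongside the decision matters in practice: the reviewer sees why a claim was held, not just that it was.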

Submission and Status Tracking

RPA bots handle submission to payers (or clearinghouses), then continuously monitor the status of outstanding claims on payer portals without waiting for a human to log in and check.

Aged claims that haven't received a determination within a defined SLA trigger automated follow-up actions:

- Bot-generated status inquiries

- Escalation to a human queue if response time exceeds threshold

- Automatic logging of payer response times (which feeds the payer scorecard analytics downstream).
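The follow-up logic above can be sketched in a few lines. The two day limits are hypothetical placeholders for whatever SLA an organization defines, and the action names are illustrative.

```python
from datetime import date

SLA_DAYS = 30         # hypothetical: age at which a bot sends a status inquiry
ESCALATION_DAYS = 45  # hypothetical: age at which a human takes over

def follow_up_action(submitted_on: date, today: date, has_determination: bool) -> str:
    """Pick the follow-up step for one outstanding claim."""
    if has_determination:
        return "none"
    age = (today - submitted_on).days
    if age > ESCALATION_DAYS:
        return "escalate_to_human_queue"
    if age > SLA_DAYS:
        return "send_status_inquiry"
    return "keep_monitoring"

def average_response_days(resolved) -> float:
    """Mean days from submission to determination, for the payer scorecard.

    `resolved` is a list of (submitted_on, decided_on) date pairs.
    """
    return sum((decided - sent).days for sent, decided in resolved) / len(resolved)

print(follow_up_action(date(2024, 1, 1), date(2024, 2, 5), False))
```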

Denial Receipt and Categorization

When a denial arrives, the automation system reads the CARC (Claim Adjustment Reason Code) and RARC (Remittance Advice Remark Code) from the ERA (Electronic Remittance Advice).

It classifies the denial by type:

- Hard denial (claim closed, rebilling required)

- Soft denial (additional information requested, appeal window open)

- Clinical denial (medical necessity)

- Administrative denial (missing auth, wrong code)

It then routes the denial to the appropriate work queue, with the payer's appeal deadline already calculated and flagged.

Staff no longer manually read and sort denial paperwork.
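A minimal sketch of the categorize-and-route step is shown below. The mapping is illustrative only: which category a given CARC falls into is a local policy decision, the code meanings in the comments should be verified against the current X12 code list, and the queue names are hypothetical.

```python
# Illustrative mapping; verify code meanings against the current X12 code list.
CARC_CATEGORY = {
    "50": "clinical",         # not deemed a medical necessity
    "197": "administrative",  # precertification/authorization absent
    "11": "administrative",   # diagnosis inconsistent with procedure
    "16": "soft",             # claim lacks information
    "29": "hard",             # time limit for filing has expired
}

QUEUE_FOR = {  # hypothetical work queue names
    "clinical": "clinical_appeals",
    "administrative": "billing_corrections",
    "soft": "information_requests",
    "hard": "rebill_or_write_off_review",
}

def route_denial(carc: str):
    """Return (denial type, work queue); unknown codes go to manual triage."""
    category = CARC_CATEGORY.get(carc.strip(), "unclassified")
    return category, QUEUE_FOR.get(category, "manual_triage")

print(route_denial("197"))
```

Sending unknown codes to a manual triage queue, rather than guessing, keeps the table honest as payers introduce or retire codes.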

Appeal Generation and Submission

For clinical and administrative denials, the system generates a draft appeal letter using NLP, pulling from the clinical notes in the EHR, the payer's published medical policy, the applicable coverage determination, and templates tuned to each payer's appeal requirements.

A clinician or billing specialist reviews the draft, makes amendments, and approves it.

The bot then submits it through the correct channel (portal, fax, certified mail) before the deadline. Appeal deadline tracking, one of the most common failure points in manual denial management, is fully automated.

Post-Resolution Analytics and Feedback Loop

Every denied and appealed claim feeds back into the ML model as training data.

The system continuously refines its denial probability scores, updates payer-specific rule libraries as payer policies change, and surfaces root-cause patterns to leadership, for example: "Payer X denied 34% of cardiac catheterization claims this quarter citing lack of medical necessity; 27 of those appeals were overturned; here's the documentation pattern that won."

This closes the loop and prevents the same denial from recurring at scale.

Case Studies

$2.5 Million in Labor Redirected Through RPA

Corewell Health (Grand Rapids, Michigan) deployed an RPA platform spanning authorization, registration, credentialing, and billing workflows.

In 2023, the system generated $2.5 million in savings through redirected labor: staff hours that previously went to rote data entry and portal-checking are now spent on complex clinical denials that require human judgment.

The next phase of Corewell's roadmap includes layering generative AI on top of the RPA foundation for predictive denial prevention and proactive appeal drafting.

SVP of Revenue Cycle Amy Assenmacher's approach: be transparent with payers about the automation being deployed, using it as a basis for collaborative dialogue rather than an adversarial tool.

15–20% Work Queue Reduction with RPA + ML

Luminis Health (Annapolis, Maryland) implemented robotic process automation combined with machine learning specifically targeting work queue management, the digital pile of unresolved claims that clogs billing departments.

The RPA bots respond to information requests from both payers and internal teams, dramatically reducing the manual intervention required to keep queues from growing.

The result was a 15–20% reduction in specific work queue categories.

The insight from Luminis:

"Bots don't need to handle everything; even removing the most repetitive 20% of tasks from human queues has a measurable throughput impact on the remaining 80%."

22% Drop in Prior Authorization Denials

Fresno Community Health Network implemented an RPA solution specifically targeting the prior authorization workflow, the step most responsible for both clinical delays and downstream denials.

The system automated PA request preparation, submission, and tracking against the payer's portal.

The result was a 22% decrease in prior authorization denials.

For context: authorization denials were running at 24% across the industry in 2023. A 22% reduction in that specific category is not a marginal improvement; it's a structural fix.

Why Healthcare Apps Should Use Denial Automation

Beyond the case studies, here are the concrete, financially grounded reasons healthcare software applications have to care about this technology.

Payers Are Using AI to Deny Claims at Scale

Insurance companies, particularly Medicare Advantage plans, which saw a 59% spike in denials in 2024, have invested heavily in automated claim review systems that are purpose-built to find technical grounds for rejection.

Denials triggered by requests for information (RFIs) alone increased 9% from 2022 to 2024.

A hospital relying on human billing staff to manually respond to each of these is bringing a fax machine to a machine learning fight.

As KPMG's Ashraf Shehata has noted, health insurers began investing in aggressive AI denial technology years before providers.

Today, approximately 45% of U.S. healthcare entities have incorporated AI in revenue cycle operations, but only 14% are using AI specifically to reduce denials, per Experian's 2025 State of Claims survey.

Hospitals Cannot Verify the Validity of Payer Denials Without Data

Here's a practical problem that hospital CFOs don't always frame clearly: how does a hospital know if a payer's denial is legitimate?

If UnitedHealthcare denies 500 claims this month citing "lack of medical necessity," is that 500 correct decisions, 500 automated false positives, or somewhere in between?

Without a system that tracks payer-specific denial patterns at the procedure and DRG level and cross-references them against the actual clinical documentation (which is exactly what a denial automation platform does), the hospital has no data-driven basis to push back. The payer's word is effectively final.

Mayo Clinic's payer scorecards exist precisely to generate that leverage:

"Here are 47 cardiac procedures you denied last quarter where 41 appeals were overturned."

That number requires an automated system to produce at scale.
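The arithmetic behind a scorecard line like that is simple once appeal outcomes are recorded consistently. The sketch below assumes a hypothetical record shape of (payer, procedure group, overturned) and rebuilds the 47 and 41 figures from the example quote as synthetic data.

```python
from collections import defaultdict

def overturn_rates(appeals):
    """appeals: iterable of (payer, procedure_group, overturned: bool)."""
    tally = defaultdict(lambda: [0, 0])  # key -> [overturned, appealed]
    for payer, group, overturned in appeals:
        tally[(payer, group)][0] += int(overturned)
        tally[(payer, group)][1] += 1
    return {key: (won, total, won / total) for key, (won, total) in tally.items()}

# Synthetic data matching the quoted example: 47 appealed denials, 41 overturned.
appeals = [("PAYER_X", "cardiac", True)] * 41 + [("PAYER_X", "cardiac", False)] * 6
won, total, rate = overturn_rates(appeals)[("PAYER_X", "cardiac")]
print(f"{won}/{total} overturned ({rate:.0%})")  # prints "41/47 overturned (87%)"
```

The hard part in practice is not the division but getting every appeal outcome tied back to its original denial, which is what the automation layer provides.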

Appeal Deadline Compliance

Every denied claim has a payer-specific appeal window, typically 30 to 180 days, varying by payer type and state regulation.

Missing that window means the denial becomes permanent, regardless of its clinical merit. In a manual billing environment, appeal deadline tracking is done via spreadsheets, calendar reminders, and human memory.

RPA bots with embedded deadline logic eliminate this entirely: the appeal window is calculated at denial receipt, the task is auto-assigned with a due date, and an escalation fires if it hasn't been acted on in time.
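As a rough sketch of that deadline logic: the per-payer windows, the fallback window, and the escalation lead time below are hypothetical values, and real ones would come from payer contracts and state rules.

```python
from datetime import date, timedelta

# Hypothetical appeal windows in days, within the 30 to 180 day range.
APPEAL_WINDOW_DAYS = {"PAYER_A": 60, "PAYER_B": 180}
DEFAULT_WINDOW_DAYS = 30  # conservative fallback for unknown payers
ESCALATE_WITHIN_DAYS = 7  # escalate when this close to the deadline

def appeal_deadline(payer: str, denial_received: date) -> date:
    """Calculate the deadline at the moment the denial is received."""
    window = APPEAL_WINDOW_DAYS.get(payer, DEFAULT_WINDOW_DAYS)
    return denial_received + timedelta(days=window)

def needs_escalation(deadline: date, today: date, appeal_submitted: bool) -> bool:
    """True when an unsubmitted appeal is inside the escalation window."""
    return not appeal_submitted and (deadline - today).days <= ESCALATE_WITHIN_DAYS

deadline = appeal_deadline("PAYER_A", date(2024, 3, 1))
print(deadline, needs_escalation(deadline, date(2024, 4, 25), appeal_submitted=False))
```

Defaulting unknown payers to the shortest window errs on the side of acting early, which is the safer failure mode when a missed deadline is irreversible.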

Prior Authorization Denials are Particularly Recoverable

Authorization-related denials ran at a 24% rate industry-wide in 2023.

A significant portion of these are issued for procedures that were actually authorized: the authorization was obtained but not correctly attached to the claim, the wrong authorization number was referenced, or the authorization lapsed by 12 hours between the time it was obtained and the time the service was rendered.

RPA tools that auto-populate prior auth details from payer portals directly into the claim form eliminate this class of error entirely.
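The three mechanical failures described above lend themselves to a simple pre-submission comparison between the claim and the authorization record. The field names in this sketch are hypothetical, and the authorization record is assumed to have already been pulled from the payer.

```python
from datetime import datetime
from typing import Optional

def auth_problems(claim: dict, auth: Optional[dict]) -> list:
    """Compare a claim with its authorization record (hypothetical fields)."""
    if auth is None:
        return ["no_authorization_on_record"]
    problems = []
    if not claim.get("auth_number"):
        problems.append("authorization_not_attached")
    elif claim["auth_number"] != auth["number"]:
        problems.append("wrong_authorization_number")
    if not (auth["valid_from"] <= claim["service_time"] <= auth["valid_until"]):
        problems.append("service_outside_authorization_window")
    return problems

# Service rendered 12 hours after the authorization expired.
auth = {"number": "AUTH-1001",
        "valid_from": datetime(2024, 4, 10), "valid_until": datetime(2024, 5, 10)}
claim = {"auth_number": "AUTH-1001", "service_time": datetime(2024, 5, 10, 12, 0)}
problems = auth_problems(claim, auth)
print(problems)
```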

The 22% reduction in prior auth denials at Fresno Community Health Network is the direct product of fixing this specific mechanical failure through automation.

The "Deny First, Pay Later" Model Is Getting Worse

The payment timelines on outstanding claims are deteriorating. Care New England's VP of Revenue Cycle, Krysten Blanchette, noted that payer initial decision windows have stretched from 14–30 days to 14–60 days in recent years.

For an extra 30 days of float per claim, a hospital with $100M in annual A/R could be carrying roughly $8–10M in additional outstanding receivables, plus the cost of financing that float, which depends on the interest environment.

Automated real-time claim status monitoring and proactive follow-up bots compress those timelines by ensuring no claim ever sits untracked for more than a configured number of days.

Should You Build a Denial Automation Application from Scratch?

This is the question every RCM leader eventually reaches. It depends on what you already have, how much payer-specific customization you need, and whether your data is clean enough to train a model on.

Let's work through it systematically.

Understand Your Existing Technology Stack

Most U.S. hospital apps already run either Epic or Oracle Health (Cerner) as their EHR backbone.

As of 2024, Epic holds approximately 42% of the acute care EHR market and captured 70% of new hospital EHR decisions that year.

Oracle Health (Cerner) holds roughly 21.7%. Both platforms now have native or deeply integrated denial management and RCM automation capabilities, and understanding what's already available in your existing system is the first and most important step before evaluating any build-or-buy decision.

What Epic and Oracle Health Already Offer Natively

Epic

- Integrates revenue and coding automation directly into clinical workflows via its RCM module.

- The Epic App Orchard (now "Epic Showroom") marketplace has certified third-party denial management tools that connect via FHIR APIs, including tools like Notable for front-end access and RCM automations.

- Epic's AI roadmap (previewed at UGM 2025) includes native agentic AI for revenue cycle functions. Epic uses HL7 FHIR R4, SMART on FHIR, and Bulk FHIR for analytics pipelines.

- External RPA tools connect through Web Services, SOAP, RESTful APIs, HL7, and FHIR.

Oracle Health (Cerner)

- More open API approach via its Cerner Millennium platform, allowing broader third-party integration. RevWorks offers RCM automation natively.

- Oracle's Clinical AI Agent (announced late 2024) targets workflow automation.

- Cerner's more modular architecture means third-party denial management tools can typically be integrated with less proprietary friction than Epic, at the cost of less unified data architecture.

For most healthcare entities, the right way to integrate denial automation falls into one of three buckets: build, integrate, or use a managed service.

Denial Automation Application Architecture

Most hospitals will integrate an external denial management tool with their existing EHR rather than building from scratch.

Here's what a production-grade integration architecture actually requires, in sequence:

Historical Data Extraction and Cleaning

Before any ML model or automation bot can work, you need clean, structured historical claims data.

This is the step most technology vendors gloss over and where most implementations fail.

- Extract 24–36 months of 837 (claim submission) and 835 (remittance/ERA) transaction data from your clearinghouse or EHR.

- Map denial CARC/RARC codes to standardized denial reason taxonomy.

- Join claim data with EHR clinical data (CPT codes, DRGs, attending physician, facility, payer ID, service date).

- Identify and exclude duplicate claims, test claims, and outlier date-of-service (DOS) periods (COVID disruption years, for example, distort patterns).

- Normalize payer IDs: the same insurer often appears under 50+ different payer IDs across legacy systems.

Expect 6–12 weeks of data engineering work before a single model can be trained. Many hospitals have 3–5 years of claims data sitting in a clearinghouse or data warehouse that has never been systematically analyzed. That data is the foundation of everything downstream.
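Two of the cleaning steps above, payer ID normalization and duplicate or test claim removal, can be sketched as follows. The alias table and row fields are hypothetical; a real alias table is usually built by hand from clearinghouse and legacy system exports.

```python
import re

# Hypothetical alias table mapping legacy payer IDs to one canonical ID.
PAYER_ALIASES = {"BCBS01": "BCBS", "BCBS-MI": "BCBS", "BLUECROSS": "BCBS"}

def normalize_payer_id(raw: str) -> str:
    """Strip whitespace, uppercase, then collapse known aliases."""
    key = re.sub(r"\s+", "", raw).upper()
    return PAYER_ALIASES.get(key, key)

def clean_claims(rows: list) -> list:
    """Normalize payer IDs and drop test claims and repeated claim IDs."""
    seen, cleaned = set(), []
    for row in rows:
        if row.get("is_test") or row["claim_id"] in seen:
            continue
        seen.add(row["claim_id"])
        cleaned.append({**row, "payer_id": normalize_payer_id(row["payer_id"])})
    return cleaned

rows = [
    {"claim_id": "1", "payer_id": " bcbs01 "},
    {"claim_id": "1", "payer_id": "BCBS01"},                    # duplicate
    {"claim_id": "2", "payer_id": "bcbs-mi", "is_test": True},  # test claim
    {"claim_id": "3", "payer_id": "payer_z"},
]
cleaned = clean_claims(rows)
print([(r["claim_id"], r["payer_id"]) for r in cleaned])
```

This keeps the first occurrence of each claim ID; whether the first or the latest version is the right one to keep depends on how resubmissions are stored, so treat that choice as an assumption.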

EHR Integration Layer

The denial automation system needs to read from and write to your EHR in real time. The integration method depends on your EHR:

- Epic: Use SMART on FHIR for real-time clinical data access; Bulk FHIR for analytics/ML data pipelines; Epic App Orchard certification for marketplace tools; HL7 v2 interfaces for legacy RCM data where FHIR surface area doesn't cover the use case.

- Oracle Health (Cerner): Cerner's more open API allows broader third-party connections; use HL7 FHIR R4 endpoints; confirm Bulk Data enablement per your specific tenant configuration.

- For both: Use a healthcare integration engine (Mirth Connect, Rhapsody, or cloud-native options like Azure Health Data Services) to handle HL7-to-FHIR transformation and bidirectional message routing.

Critical: Do not allow the denial automation system to write back to the EHR without a defined data governance policy and audit trail. Every automated status update, appeal note, and work queue assignment needs to be logged with timestamp, actor (bot ID), and version.
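A minimal sketch of such an audit record is shown below, assuming a simple append-only list of JSON lines as the log. The field names and the in-memory log are illustrative; a production system would write to durable, tamper-evident storage.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone

@dataclass(frozen=True)
class AuditEntry:
    timestamp: str    # UTC, ISO 8601
    actor: str        # bot ID
    bot_version: str
    action: str       # e.g. "status_update", "appeal_note", "queue_assignment"
    claim_id: str
    detail: str

def record_write_back(log: list, actor: str, bot_version: str,
                      action: str, claim_id: str, detail: str) -> AuditEntry:
    """Append an audit entry; call this before attempting the EHR write."""
    entry = AuditEntry(datetime.now(timezone.utc).isoformat(), actor,
                       bot_version, action, claim_id, detail)
    log.append(json.dumps(asdict(entry)))  # one JSON line per write-back
    return entry

audit_log = []
entry = record_write_back(audit_log, "bot-017", "1.4.2", "queue_assignment",
                          "CLM-1", "routed to clinical_appeals")
print(audit_log[0])
```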

RPA Bot Configuration

Deploy RPA bots (UiPath, Automation Anywhere, or Blue Prism are the three dominant healthcare-grade platforms) for the high-volume transactional workflows. Each bot requires:

- A defined trigger (new appointment scheduled → eligibility check; ERA received → denial categorization)

- Payer portal credentials managed through a secure vault (not hardcoded)

- Exception handling logic for portal downtime, CAPTCHA, session timeouts

- A human escalation path for any case the bot cannot resolve within defined parameters

- Regular bot audits: one documented failure mode is "shadow bots" built by end users without IT oversight, which create compliance and audit risk. Maintain a complete bot inventory.
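The exception handling and human escalation requirements can be illustrated with a generic retry wrapper. This is not the API of UiPath, Automation Anywhere, or Blue Prism; `PortalUnavailable`, `run_with_escalation`, and the demo steps are hypothetical names used only for this sketch.

```python
import time

class PortalUnavailable(Exception):
    """Raised by a portal step on downtime or a session timeout (hypothetical)."""

def run_with_escalation(step, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Retry a portal step with exponential backoff, then hand off to a human.

    `step` is any zero-argument callable. Returns (status, result_or_error).
    """
    last_error = ""
    for attempt in range(1, max_attempts + 1):
        try:
            return "done", step()
        except PortalUnavailable as err:
            last_error = str(err)
            if attempt < max_attempts:
                sleep(base_delay * 2 ** (attempt - 1))
    return "escalated_to_human", last_error

attempts = {"count": 0}

def flaky_status_check():
    """Demo step: fails twice, then succeeds."""
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise PortalUnavailable("session timeout")
    return "status=PAID"

def portal_down():
    raise PortalUnavailable("portal down")

# base_delay=0.0 so the demo does not actually wait between attempts.
flaky_result = run_with_escalation(flaky_status_check, base_delay=0.0)
down_result = run_with_escalation(portal_down, base_delay=0.0)
print(flaky_result, down_result)
```

Only the expected, transient failure type is retried; anything else propagates, so unexpected errors surface instead of being silently retried.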

Predictive ML Model for Denial Scoring

If you're building a custom predictive layer (rather than relying on a vendor's pre-trained model), the model training process requires:

- Feature engineering: Payer ID, CPT/ICD-10 code pair, DRG, place of service, attending physician NPI, time since last related claim, prior auth status, patient age bracket, coverage type, days to service from auth date.

- Target variable: Binary denial (1) vs. paid (0) on first submission.

- Model type: Gradient boosting (XGBoost or LightGBM) typically outperforms logistic regression for this use case given the categorical feature mix; evaluate against your specific data.

- Validation: Stratified by payer to avoid a single large payer dominating the signal; validate on out-of-time data (most recent 6 months withheld from training).

- Threshold calibration: Set the probability threshold based on the cost of a false positive (unnecessary human review) vs. a false negative (denied claim missed) for your specific volume and staffing.

- Drift monitoring: Payer policy changes will shift denial patterns quarterly; the model needs monthly retraining or at minimum monthly drift detection.
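Two of the steps above, out-of-time validation and threshold calibration, can be sketched without any ML library. The model itself (XGBoost, LightGBM, or otherwise) is not shown; the scores are assumed to come from it. The $10 review cost is a made-up figure, while $57.23 is the per-denial administrative cost cited earlier in this article.

```python
from datetime import date

def out_of_time_split(rows, cutoff: date):
    """Train on claims before the cutoff; validate on the later ones."""
    train = [r for r in rows if r["service_date"] < cutoff]
    holdout = [r for r in rows if r["service_date"] >= cutoff]
    return train, holdout

def best_threshold(scored, review_cost: float, missed_denial_cost: float):
    """Pick the threshold with the lowest total cost on held-out claims.

    scored: list of (predicted_denial_probability, was_denied).
    A false positive costs one unnecessary review; a false negative costs
    the rework of a denial that was not caught.
    """
    candidates = sorted({p for p, _ in scored}) + [1.01]  # 1.01 flags nothing

    def cost(threshold):
        false_pos = sum(1 for p, denied in scored if p >= threshold and not denied)
        false_neg = sum(1 for p, denied in scored if p < threshold and denied)
        return false_pos * review_cost + false_neg * missed_denial_cost

    return min(candidates, key=cost)

scored = [(0.9, True), (0.7, True), (0.6, False),
          (0.4, True), (0.2, False), (0.1, False)]
threshold = best_threshold(scored, review_cost=10.0, missed_denial_cost=57.23)
print(threshold)
```

Because a missed denial costs far more than a review in this toy setup, the chosen threshold sits low enough to catch every denied claim at the price of one unnecessary review.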

NLP Appeal Engine

For automated appeal drafting, you need an NLP/LLM layer that:

- Reads the denial reason from the ERA (structured) and any accompanying payer correspondence (unstructured).

- Retrieves the relevant clinical notes from the EHR for the DOS (FHIR DocumentReference).

- Cross-references the payer's current published medical policy (requires maintaining a payer policy document library, updated regularly; this is the maintenance overhead that vendors handle and internal builds must manage).

- Generates a draft appeal in the payer's required format (some payers have proprietary appeal templates; store these in a template library keyed to payer ID).

- Routes the draft to a human reviewer with suggested clinical evidence highlighted.

If using a commercial LLM (OpenAI, Anthropic Claude API, etc.) for the generation step, ensure all PHI is handled through a BAA-covered deployment and that no patient data leaves your HIPAA boundary.

Alternatively, use a locally deployed or private-cloud model.

The generation step itself can be prompt-engineered effectively without fine-tuning: the clinical context and payer policy documents are passed as context at inference time.
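Passing context at inference time amounts to assembling one prompt from the denial, the clinical notes, the policy excerpt, and the payer template. The sketch below only builds that text and calls no model; the function name, field names, and sample content are hypothetical.

```python
def build_appeal_prompt(denial: dict, clinical_notes: list,
                        policy_excerpt: str, template: str) -> str:
    """Assemble the context handed to the model at inference time.

    No model is called here. The resulting text contains PHI and must only
    be sent to a deployment covered by a BAA.
    """
    notes = "\n".join(f"- {note}" for note in clinical_notes)
    return (
        "Draft an appeal letter for human review. Use only the facts below.\n\n"
        f"Denial: payer={denial['payer']}, CARC={denial['carc']}, "
        f"claim={denial['claim_id']}\n\n"
        f"Clinical notes for the date of service:\n{notes}\n\n"
        f"Payer medical policy excerpt:\n{policy_excerpt}\n\n"
        f"Required letter format:\n{template}\n"
    )

prompt = build_appeal_prompt(
    {"payer": "PAYER_X", "carc": "50", "claim_id": "CLM-1"},
    ["Stress test positive for ischemia.", "Symptoms persisted on medical therapy."],
    "Catheterization is covered when non-invasive testing indicates ischemia.",
    "Header, claim reference, clinical justification, requested action.",
)
print(prompt)
```

Instructing the model to use only the supplied facts, and keeping a human reviewer downstream, are the two guards against a fluent but unsupported appeal.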

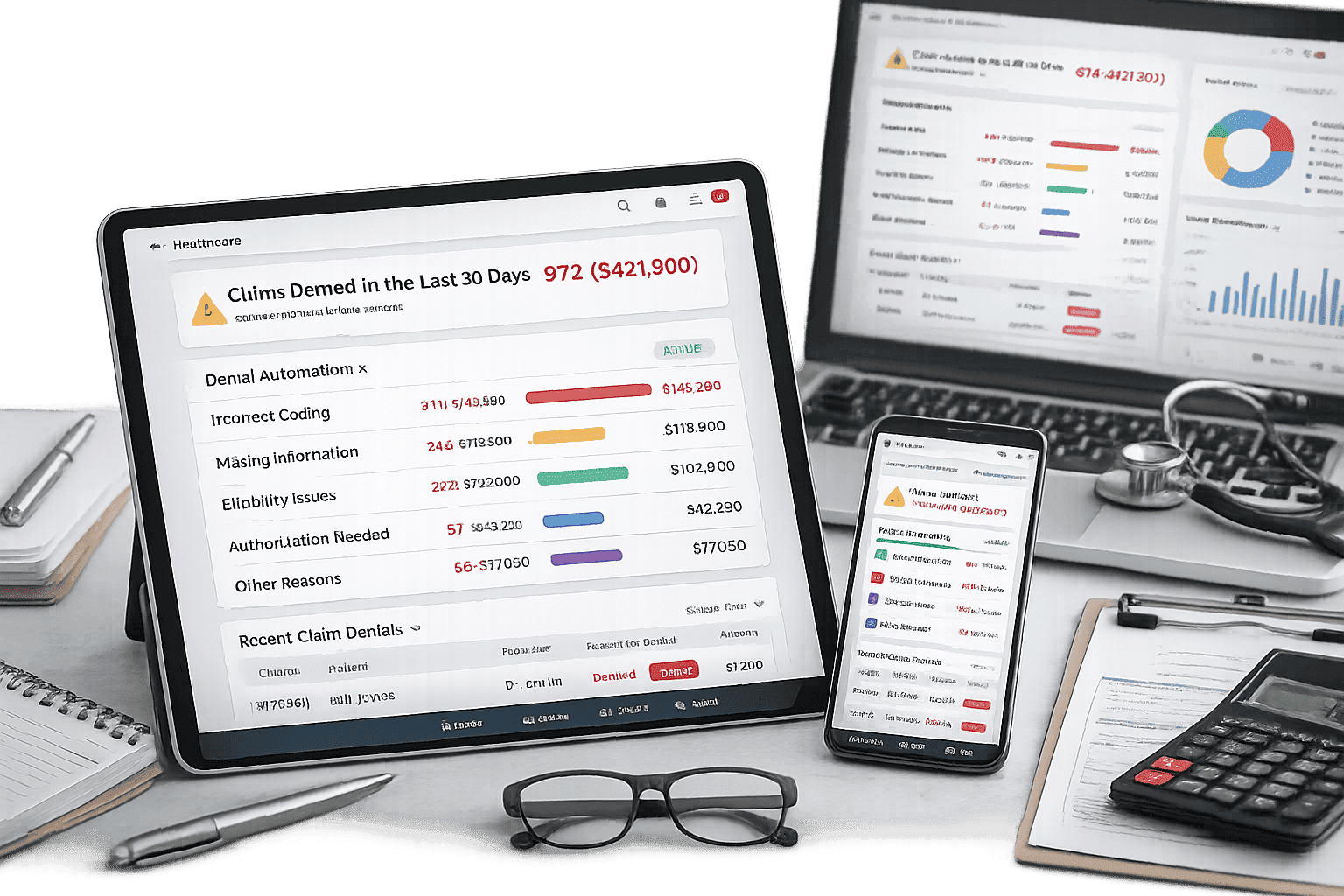

Analytics Dashboard and Feedback Loop

The value of denial automation compounds over time only if the system surfaces actionable intelligence.

The analytics layer should produce:

- Denial rate by payer, procedure, facility, and physician, updated daily.

- Appeal success rate by denial reason code and payer.

- Average days to resolution by payer (feeding the payer scorecard).

- Revenue at risk: Total value of open denials segmented by appeal deadline proximity.

- Root cause trending: Which upstream failure points (missing auth, coding error, eligibility error, documentation gap) are generating the most denial dollars this month vs. last quarter.

This dashboard feeds back into the ML model as new training data and into operational workflows as priority signals.

It also becomes the basis for payer negotiation conversations: the quantitative evidence that specific payers are exhibiting denial patterns inconsistent with the contracted clinical criteria.
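Two of the dashboard metrics listed above, denial rate by payer and revenue at risk by deadline proximity, might be computed roughly as follows. The record fields are hypothetical and the bucket boundaries are arbitrary choices for illustration.

```python
from collections import defaultdict
from datetime import date

def denial_rate_by_payer(claims):
    """claims: iterable of dicts with 'payer' and 'denied' (bool)."""
    totals = defaultdict(lambda: [0, 0])  # payer -> [denied, submitted]
    for c in claims:
        totals[c["payer"]][0] += int(c["denied"])
        totals[c["payer"]][1] += 1
    return {payer: denied / n for payer, (denied, n) in totals.items()}

def revenue_at_risk(open_denials, today: date):
    """Sum open denial dollars by days left to appeal."""
    buckets = {"expired": 0.0, "0-7 days": 0.0, "8-30 days": 0.0, "31+ days": 0.0}
    for d in open_denials:
        days_left = (d["appeal_deadline"] - today).days
        if days_left < 0:
            buckets["expired"] += d["amount"]
        elif days_left <= 7:
            buckets["0-7 days"] += d["amount"]
        elif days_left <= 30:
            buckets["8-30 days"] += d["amount"]
        else:
            buckets["31+ days"] += d["amount"]
    return buckets

claims = [{"payer": "PAYER_A", "denied": True}, {"payer": "PAYER_A", "denied": False},
          {"payer": "PAYER_B", "denied": False}, {"payer": "PAYER_B", "denied": False}]
open_denials = [{"amount": 1200.0, "appeal_deadline": date(2024, 6, 5)},
                {"amount": 800.0, "appeal_deadline": date(2024, 6, 20)},
                {"amount": 500.0, "appeal_deadline": date(2024, 9, 1)}]
rates_by_payer = denial_rate_by_payer(claims)
at_risk = revenue_at_risk(open_denials, today=date(2024, 6, 1))
print(rates_by_payer, at_risk)
```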

Honest Assessment: Build vs. Integrate vs. Managed Service

Do not build from scratch unless you have a dedicated data engineering team, clean historical claims data, and clear contract requirements that off-the-shelf tools cannot meet.

Where a custom build adds genuine value is in the ML scoring layer, the component that predicts denial probability for your specific payer mix, contract terms, and clinical documentation patterns.

Off-the-shelf models are trained on industry-average patterns. Your payer mix is not industry average.

A large academic medical center in a two-payer market with specific Blue Cross contract terms will have denial patterns that look nothing like a community hospital in a highly competitive commercial market.

That specificity justifies a custom model sitting on top of a vendor's transactional automation infrastructure.

The emerging best practice, and the architecture that Mayo Clinic, Corewell, and the leading academic systems are converging on, is:

Use an RPA platform (UiPath, Automation Anywhere) for transactional automation; use a certified EHR-integrated denial management tool for workflow orchestration and payer connectivity; and build or fine-tune a custom ML model for denial prediction that's trained on your specific historical data. The one caveat is cost: these tools follow a defined pricing structure, and expenses rise as you scale your denial-handling workload.

Summing Up

The market for denial management automation is projected to reach $8.93 billion by 2030. The hospitals that will capture the recovery value are not necessarily the ones with the biggest technology budgets; they're the ones that started with clean data, integrated incrementally, and treated denial analytics as a strategic intelligence function rather than a back-office cleanup task.

The payers already know this. The question is whether the providers catch up.